Last Updated: April 18, 2026

A new research paper from Nanyang Technological University proposes a fundamentally different way to build AI agent systems — one where agents don't just execute tasks, but safely rewrite their own instructions, tools, and behaviour over time. Called the Autogenesis Protocol, it addresses the biggest unsolved problem in multi-agent AI: how do you let agents improve themselves without breaking everything?

Published as an ICML 2026 submission by researcher Wentao Zhang, the paper introduces a two-layer protocol architecture that cleanly separates what can change from how change happens. It's a compelling read for anyone building production agent systems, and it has direct implications for frameworks like OpenClaw, AutoGen, and CrewAI.

What Problem Does Autogenesis Solve?

The core issue Autogenesis tackles is that current AI agent protocols are built for connectivity, not evolution. Anthropic's Model Context Protocol (MCP) standardises how models call tools. Google's Agent-to-Agent (A2A) protocol standardises how agents communicate with each other. But neither protocol addresses what happens when an agent needs to modify its own components — its prompts, its tools, its memory — during or between task executions.

This matters because real-world agent deployments are messy. A customer service agent might discover that its prompt causes it to hallucinate refund policies. A coding agent might find that its tool for reading files times out on large repositories. In today's systems, fixing these issues requires human intervention — a developer manually rewrites the prompt, patches the tool, and redeploys.

Autogenesis asks: what if the agent could fix itself, safely, with full audit trail and rollback?

The paper identifies three critical gaps in current protocols:

- No lifecycle management — Agents can't create, update, or destroy their own components through standardised interfaces. Everything is hardcoded or managed through brittle glue code.

- No version lineage — When a prompt or tool gets modified, there's no record of what changed, why it changed, or how to undo it. A bad update can brick the entire system.

- No safe mutation interface — Self-modification is an all-or-nothing operation. There's no formal mechanism to propose a change, test it, and only commit it if performance actually improves.

How Does the Autogenesis Protocol Work?

The protocol introduces a two-layer architecture that cleanly decouples the evolutionary substrate from the evolutionary logic. This separation is the paper's key architectural insight — the same way a database separates storage from compute, Autogenesis separates what evolves from how evolution occurs.

Layer 1: RSPL (Resource Substrate Protocol Layer)

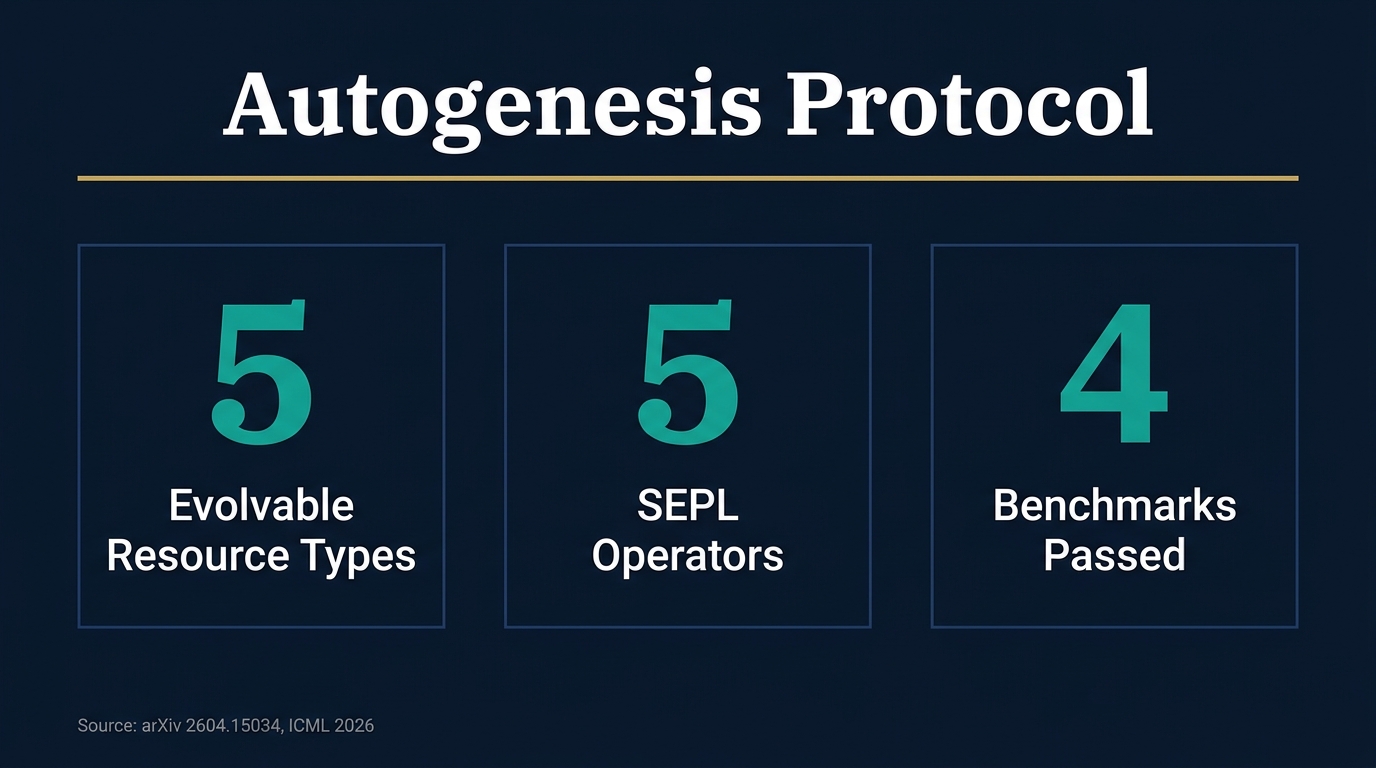

RSPL defines five types of resources that can evolve within an agent system:

- Prompts — The instructions given to agents (system prompts, task prompts, few-shot examples)

- Agents — Decision policies that determine how agents reason and act

- Tools — Actuation interfaces, including native scripts, MCP tools, and agent skills

- Environments — Task and world dynamics that define what agents interact with

- Memory — Persistent state that agents can read from and write to

Every resource is registered as a protocol-managed entity with four properties: a unique name, a description, an input-to-output mapping function, a "trainable" flag (can this resource be modified?), and metadata. Resources are deliberately passive — they contain no optimisation logic and cannot self-modify. All changes happen through controlled, interface-mediated operations.

Each resource also gets a registration record that includes: the resource entity itself, a version string (semantic versioning), an implementation descriptor (import path or source code), instantiation parameters, and exported representations (function-calling schemas, natural language descriptions) that LLMs use to interact with it.

RSPL also provides infrastructure services:

- Version Manager — Maintains version lineage for each resource, enabling rollback, branching, and diffing. Every version is an immutable snapshot.

- Dynamic Manager — Handles serialisation for hot-swapping resources at runtime without restarting the agent system.

- Tracer Module — Captures fine-grained execution traces (inputs, outputs, tool interactions, intermediate decisions) for debugging and as training signals.

- Model Manager — Unified model-API layer across providers (OpenAI, Anthropic, Google, OpenRouter) with routing and fallback support.

Layer 2: SEPL (Self-Evolution Protocol Layer)

SEPL is where the actual self-improvement happens. It defines a closed-loop optimisation process with five atomic operators:

1. Reflect (ρ) — Analyses execution traces to identify failure hypotheses. Think of it as computing a "semantic gradient" — mapping raw observability data to specific, causal explanations of what went wrong.

2. Select (σ) — Translates diagnostic hypotheses into concrete modification proposals. For example, "the prompt lacks edge-case handling" becomes "append constraint clause X to system prompt."

3. Improve (ι) — Applies the proposed modifications through RSPL's standardised interfaces to produce a candidate state.

4. Evaluate (ε) — Tests the candidate against objective criteria and safety invariants. This is the quality gate.

5. Commit (κ) — Only accepts the change if evaluation shows improvement AND all safety constraints are satisfied. Otherwise, rolls back cleanly with no side effects.

This loop runs iteratively. The key insight is that evolution is not a random walk — it's a directed trajectory grounded in execution data, traceable through versioned updates, and monotonically improving under safety constraints.

Autogenesis-Agent: The Working Implementation

The paper doesn't just propose a protocol — it builds a working system on top of it. The Autogenesis-Agent (AGS) uses an Agent Bus architecture where:

- An Orchestrator decomposes tasks into subtask plans (themselves registered as versioned RSPL resources)

- Sub-agents (researcher, browser-use agent, tool-calling agent, tool generator) execute concurrently via the bus

- Self-evolution triggers between task rounds — the SEPL loop runs, reflects on what happened, proposes improvements, and commits or rolls back

The system also supports an "agent-as-tool" composition pattern where sub-agents are wrapped behind standard RSPL tool schemas and invoked alongside conventional tools and MCP services.

Multiple Optimisation Strategies

The protocol is optimizer-agnostic — any procedure conforming to the five-operator interface works:

- Reflection Optimiser (default) — Uses natural language analysis to identify failures and propose fixes. The backbone LLM generates diagnostic hypotheses like "the sorting algorithm has O(n²) complexity on the critical path" and the system translates these into concrete modifications.

- TextGrad — Treats natural language feedback as "text gradients," analogous to backpropagation in neural networks, for iterative prompt and tool updates.

- GRPO / Reinforce++ — Reinforcement learning approaches that frame agent components as policies and use evaluation signals as rewards.

How Does This Compare to MCP and A2A?

The paper positions Autogenesis as a complementary layer above existing protocols, not a replacement. Here's how they compare:

MCP (Model Context Protocol) solves model-to-tool invocation. It standardises how an LLM discovers and calls external tools. But MCP treats tools as static — once registered, a tool's schema and implementation don't change. Autogenesis treats tools as evolvable resources with version lineage.

A2A (Agent-to-Agent) solves agent-to-agent communication. It defines message formats and handoff protocols. But A2A doesn't address what happens when an agent needs to modify its own behaviour based on accumulated experience. Autogenesis provides the mutation management layer.

The analogy the paper implies: if MCP is like TCP (transport) and A2A is like HTTP (application), then Autogenesis is like Git for agent components — versioned, auditable, rollbackable state management with a formal optimisation loop on top.

What Are the Experimental Results?

The paper evaluates Autogenesis-Agent on four challenging benchmarks:

- GPQA — Graduate-level science reasoning

- AIME — Mathematical problem solving

- GAIA — General AI assistant benchmark requiring tool use

- LeetCode — Programming challenges

Across all benchmarks, the system shows consistent improvements over strong baselines. The reflection-driven optimiser produces meaningful gains after just a few evolution cycles. The version lineage and rollback mechanisms work as advertised — failed evolution attempts are cleanly reversed without contaminating the agent's state.

Why Does This Matter for Production Agent Systems?

For teams building real agent deployments — whether customer service bots, coding assistants, or multi-agent orchestration platforms — Autogenesis addresses a genuine pain point. Today, maintaining agent systems is labour-intensive. Prompts drift, tools break, and every fix requires manual intervention.

The protocol's key practical contributions:

- Reduced maintenance burden — Agents can self-repair common issues without human intervention

- Auditability — Every change is tracked with full lineage, which matters for compliance-heavy industries

- Safe experimentation — The commit gate means agents can try improvements without risking system stability

- Optimizer flexibility — Teams can swap between reflection, gradient-based, and RL approaches without changing the underlying architecture

What Are the Limitations?

The paper acknowledges several open questions:

- The current evaluation focuses on benchmark performance rather than long-running production deployments

- The safety guarantees depend on the quality of the evaluation operator — if your evaluator doesn't catch a problem, the commit gate won't either

- The computational cost of running the evolution loop (especially reflection with large LLMs) isn't thoroughly analysed

- The protocol assumes a trusted execution environment — adversarial self-modification isn't addressed

How Could This Apply to OpenClaw and Similar Frameworks?

For agent frameworks like OpenClaw that manage skills, tools, and prompts as first-class resources, the Autogenesis protocol offers a blueprint for adding self-improvement capabilities. Specifically:

- Skill evolution — OpenClaw skills could be registered as RSPL resources, allowing agents to refine their own skill definitions based on usage patterns

- Prompt versioning — System prompts could be versioned and tested against performance metrics, with automatic rollback when quality degrades

- Tool refinement — Custom tools could be evolved based on failure patterns (timeout rates, error types, output quality scores)

- Cross-session learning — The tracer module's execution traces could feed into SEPL's reflect operator, enabling agents to improve between sessions rather than just within them

The "from prompt engineering to protocol engineering" framing is particularly relevant. As agent systems scale from prototypes to production, the manual prompt-tuning approach doesn't hold. A standardised evolution protocol could be the infrastructure layer that makes self-improving agents safe and manageable at scale.

Frequently Asked Questions

What is the Autogenesis Protocol? The Autogenesis Protocol (AGP) is a two-layer self-evolution protocol for AI agents. Layer 1 (RSPL) defines five types of evolvable resources — prompts, agents, tools, environments, and memory — as versioned, protocol-registered entities. Layer 2 (SEPL) defines a closed-loop optimisation process with five operators: Reflect, Select, Improve, Evaluate, and Commit. Every change is auditable and reversible.

How is Autogenesis different from MCP and A2A? MCP standardises model-to-tool invocation. A2A standardises agent-to-agent communication. Autogenesis standardises how agent components evolve over time. It's a complementary layer that adds lifecycle management, version tracking, and safe mutation interfaces that MCP and A2A don't provide.

Can AI agents really safely modify themselves? Autogenesis addresses this through the SEPL commit gate. Before any modification is accepted, the Evaluate operator tests the candidate against objective criteria and safety invariants. Only changes that demonstrably improve performance AND satisfy all safety constraints are committed. Failed attempts are cleanly rolled back with no side effects.

What benchmarks was Autogenesis tested on? The system was evaluated on GPQA (graduate science reasoning), AIME (mathematical problem solving), GAIA (general AI assistant with tool use), and LeetCode (programming challenges). It showed consistent improvements over strong baselines across all four benchmarks.

What optimisation strategies does Autogenesis support? The protocol supports multiple strategies through its standardised five-operator interface. The default is a Reflection Optimiser that uses natural language analysis. It also supports TextGrad (treating feedback as text gradients), GRPO, and Reinforce++ (reinforcement learning approaches).