Last Updated: May 6, 2026

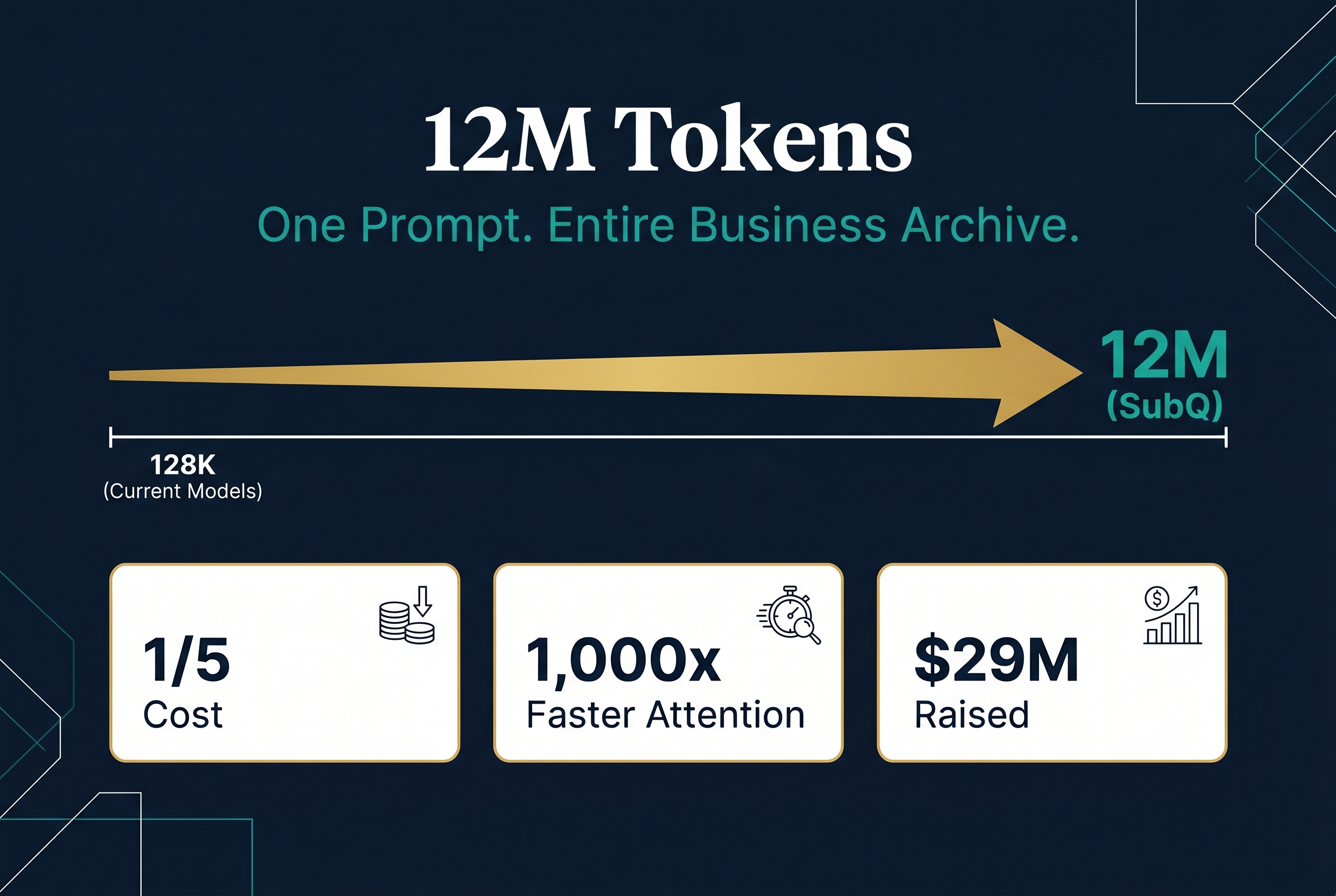

On May 5, 2026, a startup called Subquadratic emerged from stealth with a claim that could reshape how businesses use AI. Their model, SubQ, processes 12 million tokens in a single prompt. That is roughly 9 million words. An entire business archive. Years of emails. Full codebases. Complete contract histories. All loaded into the AI at once.

The company raised $29 million in seed funding from investors including Tinder co-founder Justin Mateen, former SoftBank Vision Fund partner Javier Villamilar, and early backers of Anthropic, OpenAI, and Stripe. The round values Subquadratic at $500 million.

But the funding is not the story. The architecture is.

What Makes SubQ Different From Other AI Models

SubQ is the first large language model built on a fully subquadratic architecture. Every major AI model today, from ChatGPT to Claude to Gemini, uses a mechanism called attention that compares every word against every other word. Double the input size and the compute cost quadruples. That is quadratic scaling, and it is why context windows have been stuck at 128,000 to 1 million tokens.

SubQ uses something called Subquadratic Sparse Attention (SSA). Instead of comparing every word to every other word, SSA learns which comparisons actually matter and only computes those. The result: compute scales linearly instead of quadratically. At 12 million tokens, this reduces attention compute by roughly 1,000 times compared to standard transformers.

The practical impact is significant. SubQ runs at 150 tokens per second and costs one-fifth of what leading frontier models charge. It scored 81.8% on SWE-Bench Verified (beating Claude Opus 4.6 at 80.8%), 95.0% on RULER at 128K tokens, and 65.9% on MRCR v2 at 1 million tokens.

The company launched three products into private beta: a developer API, a coding agent called SubQ Code that plugs into Claude Code and Cursor, and a free search tool called SubQ Search.

Why Context Windows Matter for Your Business

Most business owners have never heard the term "context window." But it silently determines what AI can and cannot do for your company.

A context window is the amount of text an AI model can read and reason over in a single interaction. Today's standard models handle about 128,000 tokens, roughly 96,000 words or a short book. Frontier models like Claude and Gemini stretch to 1 million tokens, about 750,000 words.

That sounds like a lot until you think about what your business actually generates in a year. A mid-size professional services firm produces hundreds of thousands of emails, project documents, client correspondence, proposals, and reports annually. A construction company accumulates tender documents, compliance records, safety logs, and contract variations across dozens of active sites. A healthcare practice generates patient records, referral letters, billing data, and treatment plans that must stay accurate and compliant.

None of that fits in a 128,000 token window. That is why AI tools today work in snippets. You ask a question, the AI searches for relevant chunks, and it answers based on a fraction of your data. The workarounds are expensive and brittle: retrieval pipelines, vector databases, chunking strategies, and multi-agent orchestration layers, all built to compensate for the fact that the model cannot simply read everything at once.

What 12 Million Tokens Actually Looks Like

The most important thing to understand about SubQ's 12 million token context window is scale. Here is what fits in a single prompt:

- Every email your business sent and received in the past year

- Your entire CRM history including notes, calls, and deal records

- Full codebases for multiple software projects

- Every contract, proposal, and tender document from the past three years

- Complete employee handbooks, policy documents, and compliance records

- All customer support transcripts and chat logs

- Financial reports, board papers, and investor updates

Previously, making AI work across that volume of data required complex engineering. You needed retrieval-augmented generation (RAG) systems, vector databases, chunking algorithms, and teams of developers to stitch it all together. SubQ's proposition is that you can skip most of that infrastructure and just load everything into the model.

For Australian businesses that have been told AI adoption requires significant technical investment, this could lower the barrier dramatically.

The Cost Shift: Why One-Fifth Matters

SubQ costs approximately one-fifth of what Claude Opus or GPT-5.5 charge for comparable workloads. At 150 tokens per second, it is also significantly faster than most frontier models on long-context tasks.

The cost reduction matters because token costs have been the silent killer of AI projects. A business processing 100,000 customer interactions per month through an AI agent at current frontier pricing could easily spend $10,000 to $50,000 per month on API costs alone. At one-fifth the price, that drops to $2,000 to $10,000. The difference between a pilot project and a production deployment.

For coding specifically, SubQ Code claims a 25% lower bill and 10x faster codebase exploration compared to existing tools. The plugin auto-redirects expensive model turns to SubQ's cheaper long-context layer. For development teams, this could meaningfully reduce the cost of AI-assisted coding.

What This Means for Different Australian Industries

Professional Services (Legal, Accounting, Consulting) Firms that bill by the hour spend enormous time reviewing documents, searching precedents, and synthesizing information across client files. A 12 million token context window means an AI agent could hold an entire client matter, years of correspondence, all relevant case law, and the firm's precedent database in a single session. The AI becomes a genuine research partner rather than a tool that can only see small slices at a time.

Construction and Engineering Tender responses require pulling information from dozens of past projects, capability statements, safety records, pricing matrices, and compliance documentation. Currently this means days of manual work or complex RAG systems. With 12M tokens, the full tender library loads into the model at once. An agent can draft responses that reference specific past projects, identify compliance gaps, and flag pricing anomalies, all from complete context.

Healthcare and Allied Health Patient records, treatment protocols, referral histories, and compliance documentation across years of care. The challenge has always been that no AI model could hold enough patient context to be genuinely useful without complex integration work. A large enough context window changes the calculus. An agent could reason across a patient's complete history, current treatment plan, and relevant clinical guidelines simultaneously.

Trades and Field Services Job histories, customer records, equipment maintenance logs, parts inventories, and scheduling data across hundreds of active clients. Instead of querying a database and feeding selected results to an AI, the entire operational history sits in context. Quotes become instant because the agent knows every past job, every part used, every price charged.

The Skepticism: What Business Owners Should Know

Subquadratic's claims are extraordinary, and the AI research community has responded with a mixture of genuine interest and pointed skepticism. VentureBeat reported that several researchers have demanded independent verification.

The key concerns:

- Only three benchmarks have been published, all chosen to highlight long-context strengths

- The full technical report has not been released

- Model weights are closed, preventing independent evaluation

- Prior subquadratic architectures like Mamba, RWKV, and DeepSeek Sparse Attention have repeatedly underperformed transformers at frontier scale

- General reasoning, math, multilingual, and safety benchmarks are absent

These are legitimate concerns. But for business owners evaluating AI tools, the question is not whether SubQ achieves its theoretical maximum. The question is whether a meaningfully larger context window at a meaningfully lower cost is available, even if the real-world performance lands somewhere between the marketing claims and today's status quo.

Even if SubQ delivers half of what it promises, a 6 million token context at two-fifths the cost of current models represents a genuine step change in what businesses can do with AI.

How Flowtivity Is Evaluating This for Clients

At Flowtivity, we track every meaningful development in AI architecture because our clients depend on us to recommend the right tools for their specific situation. Here is how we are thinking about SubQ:

Short term (next 3 months): SubQ is in private beta. We have requested early access and will benchmark it against Claude and GPT on real Australian business workloads, not synthetic benchmarks. Until we can validate performance on tasks like tender analysis, document review, and multi-step reasoning over long business documents, we are watching but not recommending.

Medium term (3 to 6 months): If independent benchmarks validate the efficiency claims, the cost reduction alone makes SubQ worth integrating into agent architectures. Even as a secondary model for long-context tasks while keeping frontier models for complex reasoning, the economics are compelling.

Long term (6 to 12 months): The architectural shift matters more than any single product. If subquadratic attention proves viable at scale, it will change how every AI model is built. Context windows will expand, costs will drop, and the complex RAG infrastructure that businesses currently pay for will simplify dramatically.

The Bigger Picture: AI Infrastructure Is Getting Cheaper and More Capable

SubQ is one signal in a broader trend. AI compute costs have been falling roughly 10x every 18 months while model capabilities continue to improve. The context window problem, long considered a hard physical constraint, is being attacked from multiple angles.

For Australian business owners, the practical takeaway is this: the AI tools you evaluate today will be significantly cheaper and more capable within a year. That does not mean you should wait. It means you should start building AI workflows now with today's tools, but architect them so they can swap in better models as they arrive.

The businesses that win with AI are not the ones that pick the perfect model. They are the ones that build the operational muscle to evaluate, integrate, and manage AI agents as the technology evolves. The model is a commodity. The management capability is the asset.

That is exactly what Flowtivity helps Australian businesses build. We handle the technical integration. You focus on training and managing agents that understand your domain. As models like SubQ come online, we plug them into your existing agent architecture so you always run on the best available technology without rebuilding from scratch.

If you want to explore what AI agents could look like in your business, reach out. We will build the system. You train it on what makes your business unique.

About the author: AJ Awan is the founder of Flowtivity, an Australian AI consultancy specializing in workflow automation and AI agent deployment for growing businesses. With 9+ years of consulting experience including 6 years at EY, AJ helps companies build AI agents that work within their existing systems and processes.