Last Updated: May 10, 2026

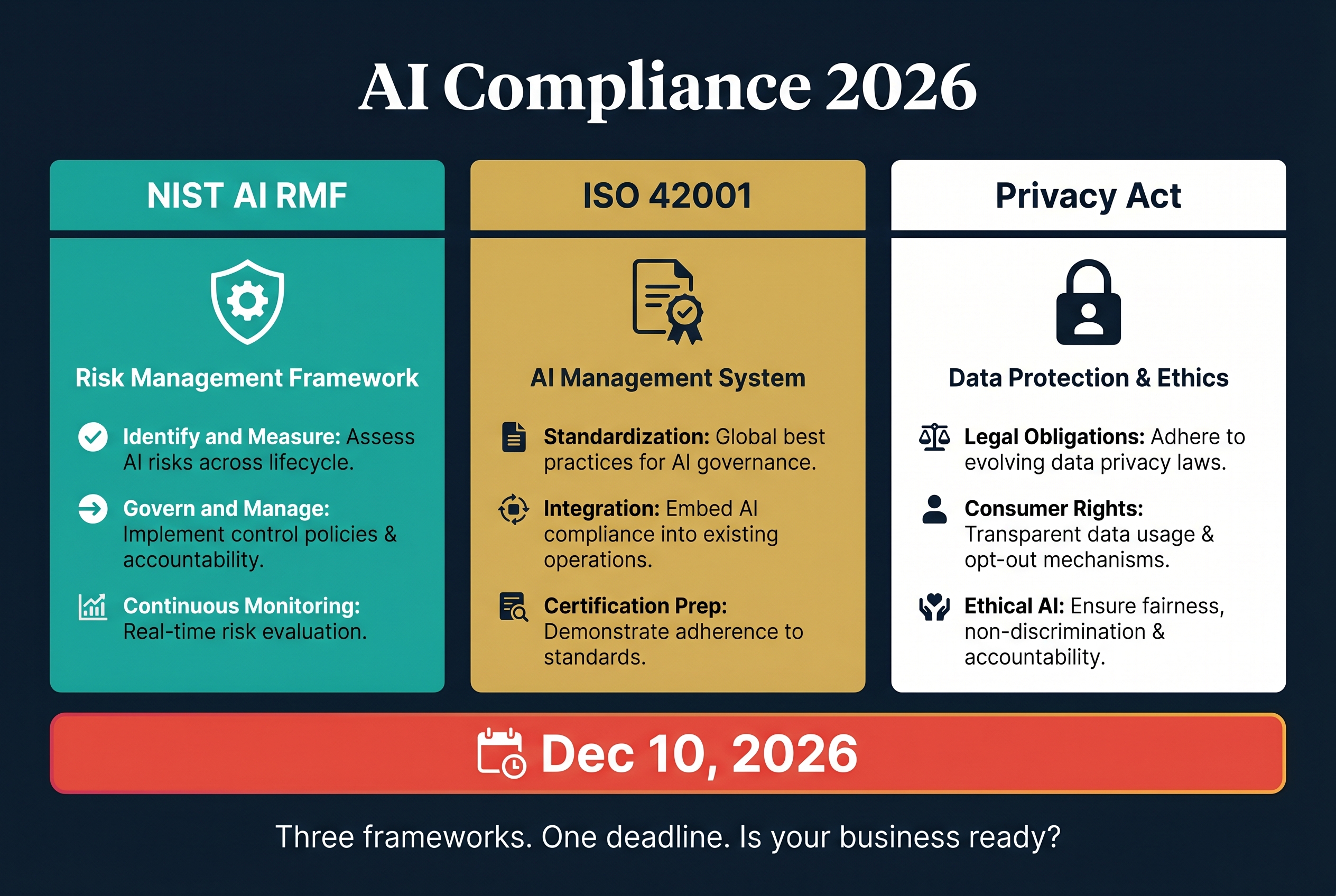

Australian businesses deploying AI agents face a regulatory tidal wave in 2026. Three frameworks are converging simultaneously: the NIST AI Risk Management Framework gaining traction as a global standard, ISO 42001 establishing the first certifiable AI management system, and the Australian Privacy Act amendments mandating automated decision-making disclosure by December 10, 2026.

The businesses that prepare now will treat compliance as a competitive advantage. The ones that ignore it will scramble to retrofit their AI systems under auditor pressure. This guide covers what each framework requires, how they overlap, and exactly what your business needs to do before the deadlines hit.

This is Part 1 of our 3-part series on AI compliance for Australian businesses. [Part 2 compares Microsoft Entra Agent ID vs Google Gemini Enterprise for agent security.] [Part 3 provides a practical architecture checklist for building audit-ready AI systems.]

What Is the NIST AI Risk Management Framework and Does It Apply in Australia?

The NIST AI Risk Management Framework (AI RMF) is a voluntary framework developed by the US National Institute of Standards and Technology. Version 1.0 was released in January 2023, and NIST has since extended it with companion profiles such as the Generative AI Profile published in July 2024. It provides a structured approach to identifying, assessing, and managing risks from AI systems. On April 7, 2026, NIST released a concept note for an AI RMF Profile on Trustworthy AI in Critical Infrastructure, signalling deeper integration into regulated industries.

The key point for Australian businesses: although the AI RMF comes from a US agency, it is becoming the global de facto standard for AI governance. Australian organisations that work with US clients, handle US data, or operate in sectors with international supply chains will increasingly be expected to demonstrate alignment with it. More practically, the AI RMF provides the clearest available guidance on what "responsible AI" actually looks like in operation.

The framework is built around four core functions. Govern establishes policies, roles, and accountability structures for AI risk management. Map identifies and categorises AI risks specific to your context and use cases. Measure assesses identified risks using quantitative and qualitative methods. Manage implements risk treatments including mitigation, transfer, and acceptance decisions.

The NIST AI RMF aligns naturally with ISO/IEC 42001: its risk functions can be anchored within a formal management system, and it complements information security frameworks such as ISO 27001 and SOC 2. Organisations already certified to ISO 27001 will find that the NIST AI RMF integrates cleanly into their existing governance structures.

What Is ISO 42001 and Why Should Australian Businesses Care?

ISO/IEC 42001 is the first international standard for AI management systems. Published in December 2023, it provides a certifiable framework for organisations to establish, implement, maintain, and continually improve an AI management system. Think of it as ISO 27001 but specifically for AI.

The most important thing about ISO 42001 is that it is certifiable. Unlike the NIST AI RMF, which offers guidance but has no certification scheme, ISO 42001 allows organisations to undergo formal certification audits. This matters because certification provides tangible proof to clients, regulators, and partners that your AI governance meets an internationally recognised standard. For Australian consultancies and technology companies selling into enterprise or government, ISO 42001 certification will increasingly appear in tender requirements and vendor assessments.

ISO 42001 defines the "how" of AI governance: documented processes, defined roles, documented risk assessments, and audit evidence. NIST AI RMF provides the "what" in terms of specific risk management practices. The two work together. Organisations can embed NIST AI RMF risk functions within ISO 42001's management system structure, gaining both the operational guidance and the certification framework.

For Australian businesses in healthcare, professional services, and government-adjacent sectors, early ISO 42001 certification is a genuine differentiator. Most competitors will not have it. Demonstrating certified AI governance in a pitch or tender response signals maturity that buyers are actively looking for but rarely finding.

What Are the Australian Privacy Act Amendments for AI?

The Privacy and Other Legislation Amendment Act 2024 (Cth) introduces significant new obligations for organisations using automated decision-making, with the relevant provisions scheduled to commence on December 10, 2026.

The amendments are broadly cast to capture a range of technologies used to automate decision-making processes, including AI-enabled systems, rule-based tools, and automated assessment technologies. The key requirements:

Mandatory disclosure of automated decision-making. Organisations must specifically disclose in their privacy policies that they use automated decision-making where personal information is used in decisions that could reasonably be expected to significantly affect an individual's rights or interests. This cannot be generic boilerplate. The disclosure must provide genuinely meaningful information about what types of automated decisions are made, what data is used, and how individuals can seek review.

Transparency obligations. Individuals affected by automated decisions have enhanced rights to understand how and why a decision was made. This is not just about notifying someone that AI was involved. It requires the ability to explain the reasoning behind specific decisions.

Health data implications. For organisations handling health information (allied health practices, primary health networks, health consultancies), the stakes are higher. Health data is classified as sensitive information under the Privacy Act, and automated decision-making involving health data attracts additional scrutiny from the Office of the Australian Information Commissioner.

The December 2026 deadline sounds far away, but it is not. Organisations need to audit their current AI and automated systems, identify which ones constitute "automated decision-making" under the new definitions, update privacy policies with meaningful disclosures, and potentially redesign systems to support the transparency requirements. That is months of work, not weeks.

How Do These Three Frameworks Work Together?

The three frameworks address different but overlapping aspects of AI governance. NIST AI RMF provides risk management methodology. ISO 42001 provides the certifiable management system. The Privacy Act amendments provide the legal compliance obligation specific to Australia.

The practical overlap works like this. Meeting any of the three in practice calls for the same four capabilities: documented risk assessments of AI systems, transparency about how AI systems make decisions, human oversight mechanisms, and audit trails that can demonstrate compliance to external reviewers.

The smart approach is to build once and comply with all three simultaneously. An AI management system designed to meet ISO 42001 requirements, using NIST AI RMF risk methodology, and incorporating the Privacy Act disclosure obligations gives you comprehensive coverage without duplicating effort.

For Flowtivity's clients, we design architectures that satisfy all three frameworks from day one. This means building systems where every AI decision is logged, every automated process can be explained to an auditor, and human oversight is structurally embedded rather than bolted on as an afterthought.

What Does Human-in-the-Loop Actually Mean for AI Architecture?

Human-in-the-loop (HITL) is the concept that AI systems should not operate autonomously without meaningful human oversight. It sounds simple, but the architectural implications are significant, especially when you need to prove HITL compliance to an auditor. Three architectural components make HITL provable: circuit breakers, immutable audit logs, and decision explainability.

Circuit breakers are mechanisms that automatically pause or stop AI agent activity when certain conditions are triggered. These conditions might include the agent attempting to access data outside its authorised scope, the confidence level of an output falling below a threshold, the agent attempting an action classified as high-risk (such as sending external communications or modifying financial records), or the volume of automated actions exceeding a defined rate limit.

Circuit breakers must be architecturally enforced, not policy-based. An auditor will not accept "we told staff to review agent outputs" as evidence of HITL. They need to see that the system structurally prevents autonomous action in defined scenarios.
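As a rough illustration of what "architecturally enforced" can mean, here is a minimal Python sketch of a circuit breaker that every agent action has to pass through. It checks the four trigger conditions described above. All names (`CircuitBreaker`, `AgentAction`, `Verdict`, the reason strings) and the thresholds are assumptions made for this example, not part of any framework or of Flowtivity's implementation.

```python
import time
from collections import deque
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    HOLD_FOR_HUMAN = "hold_for_human"


@dataclass(frozen=True)
class AgentAction:
    kind: str          # hypothetical label, e.g. "send_external_email"
    resource: str      # data scope the action touches
    confidence: float  # confidence of the agent's output, 0.0 to 1.0


class CircuitBreaker:
    """Gate every agent action; anything suspicious is held for a human."""

    def __init__(self, allowed_scopes, high_risk_kinds, min_confidence,
                 max_actions_per_minute, clock=time.monotonic):
        self.allowed_scopes = frozenset(allowed_scopes)
        self.high_risk_kinds = frozenset(high_risk_kinds)
        self.min_confidence = min_confidence
        self.max_actions_per_minute = max_actions_per_minute
        self._clock = clock
        self._recent = deque()  # timestamps of recently allowed actions

    def check(self, action):
        """Return (Verdict, reason). Only ALLOW lets the action proceed."""
        now = self._clock()
        # Forget allowed actions that fall outside the 60-second window.
        while self._recent and now - self._recent[0] >= 60:
            self._recent.popleft()

        if action.resource not in self.allowed_scopes:
            return Verdict.HOLD_FOR_HUMAN, "out_of_scope"
        if action.kind in self.high_risk_kinds:
            return Verdict.HOLD_FOR_HUMAN, "high_risk_action"
        if action.confidence < self.min_confidence:
            return Verdict.HOLD_FOR_HUMAN, "low_confidence"
        if len(self._recent) >= self.max_actions_per_minute:
            return Verdict.HOLD_FOR_HUMAN, "rate_limit"

        self._recent.append(now)
        return Verdict.ALLOW, "ok"
```

The design point is that the agent runtime calls `check` before executing anything and has no code path around it, so a held action cannot proceed until a person releases it. The returned reason string is also something an auditor can be shown.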

Immutable audit logs are records of AI agent activity that cannot be modified or deleted after creation. Every agent action, every input received, every output generated, every decision made, and every human intervention must be captured with timestamps, actor identifiers, and context data.

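The immutability requirement is critical. If logs can be edited or deleted, they are not audit evidence. This means using append-only storage mechanisms, write-once databases, or blockchain-anchored log systems. For Flowtivity's architecture, we use append-only PostgreSQL tables with row-level security that prevents application users and routine administrator accounts from modifying or deleting log entries. PostgreSQL superusers and roles with the BYPASSRLS attribute are exempt from row-level security, so access to those accounts has to be locked down separately for the guarantee to hold.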
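One complementary technique, shown here as a sketch rather than as the PostgreSQL approach described above, is hash chaining: each log entry stores the hash of the previous entry, so any later edit or deletion breaks the chain and is detectable. The class name `AuditLog`, the field names, and the in-memory list are assumptions for illustration. A real system would persist entries to append-only storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    encoded = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(encoded.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log. Tampering is detectable, not prevented."""

    def __init__(self):
        self._entries = []

    def append(self, actor, action, context, timestamp=None):
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        body = {
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            "actor": actor,      # agent or human identifier
            "action": action,    # what was done
            "context": context,  # JSON-serialisable inputs, outputs, references
            "prev_hash": prev_hash,
        }
        entry = dict(body, hash=_digest(body))
        self._entries.append(entry)
        return entry

    def verify(self):
        """Recompute every hash and link; False means the log was altered."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

Hash chaining on its own only makes tampering evident. Pairing it with storage that rejects updates and deletes gives both prevention and detection.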

Decision explainability means the ability to reconstruct why an AI agent made a specific decision. This requires logging not just the input and output but the intermediate reasoning steps, the data sources accessed, the rules or model outputs that influenced the decision, and any human interventions that modified the outcome.

For health sector clients, this is particularly important. If an AI agent recommends a course of action involving patient data, an auditor needs to see the complete chain of reasoning. Not just "the AI said X" but "the AI accessed records A, B, and C, applied rule D, considered constraint E, and produced recommendation X with confidence level Y."
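The chain of reasoning in that example can be captured as a structured record that is written at decision time and rendered for an auditor later. The sketch below is one possible shape. The `DecisionTrace` class and its field names are hypothetical and simply mirror the elements listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionTrace:
    """One immutable record per agent decision (field names are illustrative)."""
    decision_id: str
    records_accessed: tuple[str, ...]
    rules_applied: tuple[str, ...]
    constraints_considered: tuple[str, ...]
    recommendation: str
    confidence: float
    human_interventions: tuple[str, ...] = ()

    def explain(self) -> str:
        """Render the trace as a sentence an auditor can read."""
        parts = [
            f"accessed records {', '.join(self.records_accessed)}",
            f"applied rules {', '.join(self.rules_applied)}",
            f"considered constraints {', '.join(self.constraints_considered)}",
            f"produced recommendation {self.recommendation!r} "
            f"with confidence {self.confidence:.2f}",
        ]
        text = f"Decision {self.decision_id}: the agent " + "; ".join(parts) + "."
        if self.human_interventions:
            text += " Human interventions: " + "; ".join(self.human_interventions) + "."
        return text


trace = DecisionTrace(
    decision_id="d-1",
    records_accessed=("A", "B", "C"),
    rules_applied=("D",),
    constraints_considered=("E",),
    recommendation="X",
    confidence=0.87,
)
print(trace.explain())
```

Because the record is frozen and built from plain values, it can be serialised straight into the audit log alongside the action it explains.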

What Australian Businesses Should Do Right Now

The December 2026 Privacy Act deadline is the hard constraint. Here is the practical preparation timeline:

May to August 2026: Audit your AI footprint. Identify every AI system, automated tool, and agent currently in use across your organisation. Document what data each system accesses, what decisions it makes, and whether those decisions affect individuals. This inventory is the foundation for everything else.
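An inventory like this can start as a simple structured list. The sketch below assumes a hypothetical `AISystemRecord` with the three attributes named above plus a health-data flag, and a screening helper that shortlists systems for closer review. The screening rule is a triage heuristic for this example, not the legal test for automated decision-making.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystemRecord:
    """One row of the AI footprint inventory (field names are illustrative)."""
    name: str
    data_accessed: tuple[str, ...]
    decisions_made: tuple[str, ...]
    affects_individuals: bool
    handles_health_data: bool = False


def shortlist_for_adm_review(inventory):
    """Return systems that make decisions affecting individuals.

    Systems handling health data sort first, then alphabetically by name.
    """
    flagged = [s for s in inventory if s.decisions_made and s.affects_individuals]
    return sorted(flagged, key=lambda s: (not s.handles_health_data, s.name))


inventory = [
    AISystemRecord("meeting-summariser", ("calendar",), (), False),
    AISystemRecord("loan-prescreen", ("credit file",), ("approve or refer",), True),
    AISystemRecord("triage-agent", ("patient notes",), ("priority level",), True, True),
]
for system in shortlist_for_adm_review(inventory):
    print(system.name)
```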

August to October 2026: Gap analysis against all three frameworks. For each system in your inventory, assess compliance against NIST AI RMF risk functions, ISO 42001 management system requirements, and Privacy Act disclosure obligations. Identify where you need to add audit logging, implement circuit breakers, update privacy policies, or redesign processes.
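A gap analysis can be reduced to comparing the controls each system already has against a required set. The following sketch assumes a hypothetical control list drawn from the items mentioned above. The control names and the example systems are made up for illustration, and a real assessment would map each control to specific framework clauses.

```python
# Hypothetical control set for illustration; not a list taken from any framework.
REQUIRED_CONTROLS = (
    "risk_assessment",
    "audit_logging",
    "circuit_breaker",
    "privacy_disclosure",
)


def find_gaps(controls_in_place):
    """Map each system name to the required controls it is missing.

    controls_in_place: dict of system name -> set of control names present.
    Systems with no gaps are left out of the result.
    """
    gaps = {}
    for system, present in controls_in_place.items():
        missing = [c for c in REQUIRED_CONTROLS if c not in present]
        if missing:
            gaps[system] = missing
    return gaps


current_state = {
    "triage-agent": {"risk_assessment", "audit_logging"},
    "loan-prescreen": set(REQUIRED_CONTROLS),
}
print(find_gaps(current_state))
```

The output of a pass like this becomes the work list for the implementation phase.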

October to December 2026: Implement and document. Build the missing controls, update privacy policies with meaningful automated decision-making disclosures, establish governance roles, and create the documentation that auditors will expect to see.

Ongoing: Continuous compliance. AI governance is not a one-time project. ISO 42001 requires continual improvement. NIST emphasises ongoing risk monitoring. The Privacy Act obligations are permanent. Build governance into your operational rhythm, not just your compliance calendar.

For businesses that find this overwhelming, Flowtivity helps design and implement AI architectures that are compliant from the ground up. We handle the technical controls (audit logging, circuit breakers, data classification, access management) while you focus on the governance and policy elements that require domain expertise from your team.

The cost of preparing now is a fraction of the cost of retrofitting compliance under audit pressure. And for businesses selling into enterprise or government, early compliance is not just risk management. It is a sales advantage.

This is Part 1 of 3. Read [Part 2: Microsoft Entra Agent ID vs Google Gemini Enterprise] and [Part 3: Building Audit-Ready AI Architecture].

About the author: AJ Awan is the founder of Flowtivity, an Australian AI consultancy specialising in workflow automation and AI agent deployment for growing businesses. With 9+ years of consulting experience including 6 years at EY, AJ helps companies build AI agents that comply with regulatory requirements while delivering operational value.