Last Updated: April 21, 2026

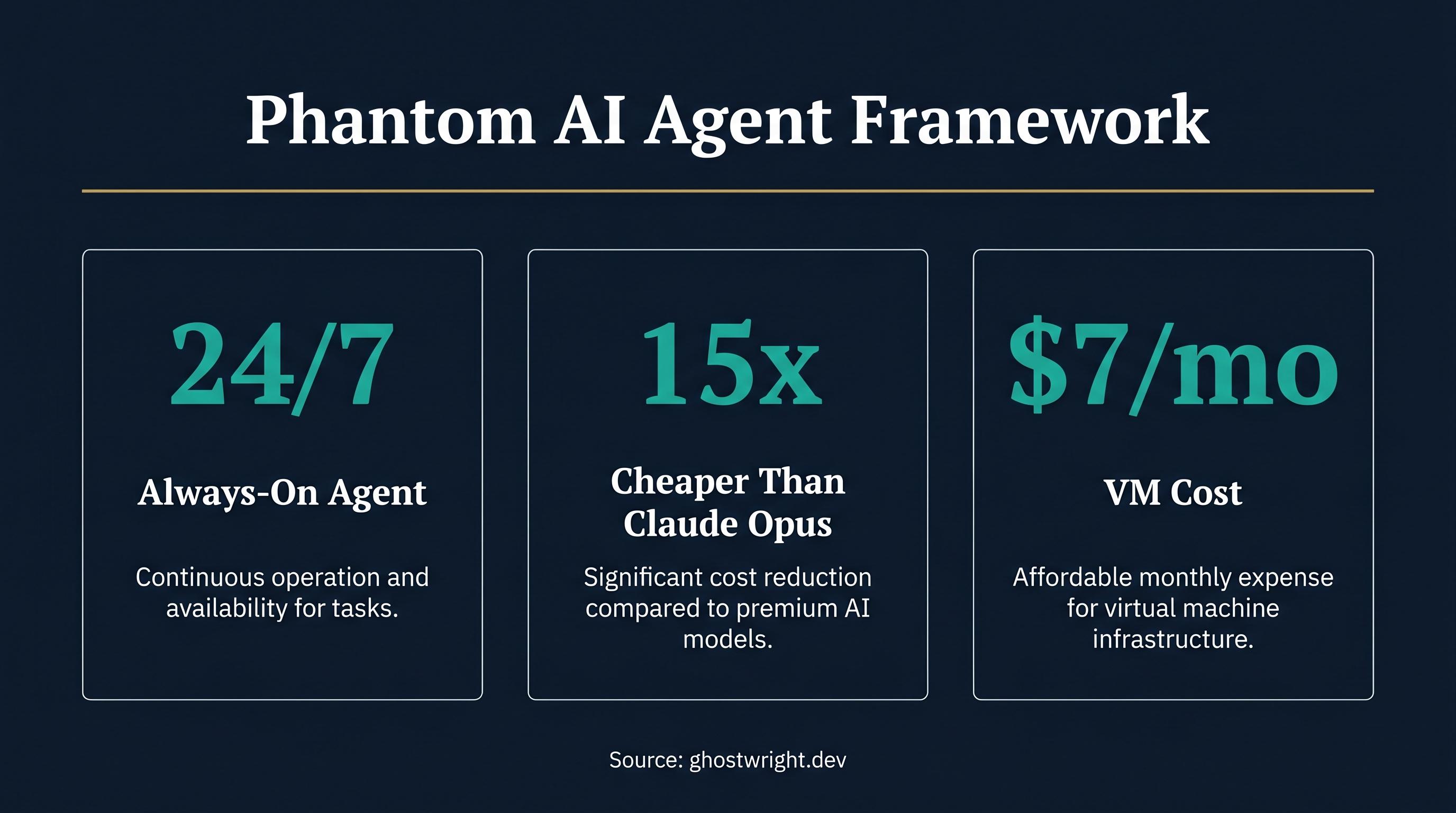

An AI agent that remembers your conversations from last week, builds its own tools on the fly, and runs 24/7 on its own dedicated machine. That's Phantom, a new open-source agent framework from Ghostwright that takes a radically different approach to AI in the workplace.

What Is Phantom by Ghostwright?

Phantom is an open-source AI agent framework built on the Claude Agent SDK that gives an AI its own dedicated computer to work on. Unlike traditional chatbots where you open a tab, ask a question, and lose all context when you close it, Phantom runs continuously on its own virtual machine. It installs software, spins up databases, builds dashboards, creates tools it didn't ship with, and remembers what you told it last week. The entire project is open source under a permissive license and can be self-hosted on any standard VM for $7-20 per month.

The core idea is simple but powerful. Most AI assistants share your laptop's resources, forget everything between sessions, and can only use the tools someone pre-built for them. Phantom flips that model. The agent gets its own isolated workspace, its own public domain for sharing what it builds, and the ability to create new capabilities at runtime that persist across restarts.

Why Should Businesses Care About AI Agents With Their Own Computer?

The distinction of giving an AI its own machine matters for three practical reasons. First, availability. Your laptop closes at 6pm. A cloud VM stays on. Phantom can run scheduled reports overnight, monitor competitor websites every two minutes, and send you a morning briefing before you've had coffee. Second, sharing. When an AI builds a dashboard on your laptop, only you can see it. When Phantom builds one on its VM, it gets a public URL with authentication that you can share with your team, your manager, or your clients. Third, isolation. The agent installs packages, runs databases, and executes code on its own machine, not yours. Your filesystem stays clean.

For a business running reporting, data pipelines, or automated monitoring, this is the difference between an AI that helps you do work and an AI that does work independently.

How Does Phantom's Self-Evolution Work?

Phantom's self-evolution system is arguably its most interesting technical feature. After every conversation session, the agent reflects on what happened, proposes changes to its own configuration, and then runs those proposed changes through a separate LLM judge for validation. This cross-model validation matters because the agent is not left to grade its own work: a proposed change only takes effect if a second model accepts it, which is meant to keep the agent from drifting into confabulation or self-reinforcing errors.

Every version of the agent's configuration is stored and versioned, so you can roll back to any previous state. The practical upshot is that a Phantom on Day 1 is a generic assistant. A Phantom on Day 30 knows your codebase conventions, your deployment pipeline, your client communication style, and the fact that your biggest account always asks about uptime before renewal. The agent gets measurably better at your specific job without you explicitly training it.
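To make the loop concrete, here is a minimal sketch of one reflect, propose, judge, and commit cycle with a versioned history that supports rollback. It is not Phantom's actual code: `ConfigHistory`, `evolve`, `toy_propose`, and `toy_judge` are hypothetical names, and the two "model calls" are plain Python functions standing in for the agent and the separate judge.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ConfigHistory:
    """Append-only history of agent configurations (hypothetical structure)."""
    versions: list = field(default_factory=list)

    def current(self) -> dict:
        return self.versions[-1]

    def commit(self, config: dict) -> int:
        # Store a copy so later mutation cannot rewrite history.
        self.versions.append(dict(config))
        return len(self.versions) - 1

    def rollback(self, version: int) -> dict:
        # Rolling back re-commits the old version instead of deleting newer ones.
        self.commit(self.versions[version])
        return self.current()


def evolve(history: ConfigHistory,
           propose: Callable[[dict, str], dict],
           judge: Callable[[dict, dict], bool],
           transcript: str) -> bool:
    """Run one cycle. `propose` stands in for the agent's reflection step,
    `judge` for the separate validating model; both are placeholders."""
    old = history.current()
    candidate = propose(old, transcript)
    if candidate == old or not judge(old, candidate):
        return False  # nothing proposed, or the judge rejected the change
    history.commit(candidate)
    return True


# Toy stand-ins for the two model calls.
def toy_propose(config: dict, transcript: str) -> dict:
    updated = dict(config)
    if "prefers tabs" in transcript:
        updated["indent"] = "tabs"
    return updated


def toy_judge(old: dict, new: dict) -> bool:
    # Accept only changes that touch a single key at a time.
    changed = {k for k in old.keys() | new.keys() if old.get(k) != new.get(k)}
    return len(changed) <= 1


history = ConfigHistory()
history.commit({"indent": "spaces"})
accepted = evolve(history, toy_propose, toy_judge, "user prefers tabs")
```

Keeping the judge as a separate callable is the point of the design: the component that proposes a change never gets to approve it.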

This addresses one of the biggest unsolved problems in production AI agents: context drift over long time horizons. Most agents either forget everything between sessions or accumulate so much unstructured context that they become unreliable. Phantom's structured evolution with cross-model validation is a genuine technical contribution to this problem.

What Makes Phantom Different From Other AI Agent Frameworks?

Persistent three-tier vector memory. Phantom uses Qdrant-backed vector storage with three tiers of memory. Mention a preference on Monday and the agent uses it on Wednesday without you repeating yourself. This is fundamentally different from session-based context windows that reset every conversation.
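The Monday-to-Wednesday behaviour comes down to similarity search over stored notes. The sketch below shows that idea only, using a toy word-count embedding and an in-memory list; it does not use Qdrant, does not model Phantom's three tiers, and `MemoryStore`, `embed`, and `cosine` are hypothetical names.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Toy embedding: lowercase word counts. A real system would use a learned model.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class MemoryStore:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self.items = []  # list of (vector, original text) pairs

    def remember(self, text: str) -> None:
        self.items.append((embed(text), text))

    def recall(self, query: str, top_k: int = 1) -> list:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:top_k]]


memory = MemoryStore()
memory.remember("user prefers weekly reports as PDF")            # said on Monday
memory.remember("staging deploys happen from the main branch")
best = memory.recall("which format for the weekly reports")      # asked on Wednesday
```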

Dynamic MCP tool creation. The Model Context Protocol (MCP) is emerging as the standard for AI tool interoperability. Phantom doesn't just use pre-built MCP tools. It creates new ones at runtime, registers them in its MCP server, and those tools survive restarts. In one documented case, a Phantom built its own Slack messaging tool, registered it, and retired the workaround it had been using. Other Claude Code instances connecting to the same Phantom can also use these dynamically created tools.
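What lets a runtime-created tool survive a restart is that its definition is written to durable storage and reloaded on startup. The following sketch shows that pattern with a JSON file. It does not use the MCP SDK, and `ToolRegistry`, the file name, and the tool's command path are all illustrative assumptions rather than Phantom's real layout.

```python
import json
import tempfile
from pathlib import Path


class ToolRegistry:
    """Hypothetical registry that persists tool definitions to a JSON file."""

    def __init__(self, path: Path) -> None:
        self.path = path
        # Reload anything that was registered before the last restart.
        self.tools = json.loads(path.read_text()) if path.exists() else {}

    def register(self, name: str, description: str, command: list) -> None:
        self.tools[name] = {"description": description, "command": command}
        # Write through immediately so a crash cannot lose the new tool.
        self.path.write_text(json.dumps(self.tools, indent=2))

    def names(self) -> list:
        return sorted(self.tools)


registry_file = Path(tempfile.mkdtemp()) / "tools.json"

first_run = ToolRegistry(registry_file)
first_run.register(
    "send_slack_message",
    "Post a message to a Slack channel",
    ["python", "tools/send_slack_message.py"],  # illustrative path
)

# Simulate a restart: a fresh object reads the same file.
after_restart = ToolRegistry(registry_file)
```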

Multi-provider support. Phantom ships with seven AI backend providers: Anthropic (default), Z.AI with GLM-5.1 for roughly 15x cheaper inference, OpenRouter for 100+ models through one key, Ollama for local GPU inference at zero API cost, vLLM, LiteLLM, and any custom endpoint compatible with the Anthropic Messages API. Switching providers is two lines of YAML. This matters for businesses that want to control costs by using cheaper models for routine tasks while reserving expensive ones for complex reasoning.

Encrypted credentials with magic-link auth. Rather than storing API keys and tokens in plain text config files, Phantom uses AES-256-GCM encryption and collects credentials through secure forms with magic-link authentication. This is a meaningful security improvement over most agent frameworks.
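The article's sources do not describe how Phantom implements its magic links, so the sketch below shows only the general pattern such links tend to rely on: a token that names the user, carries an expiry, and is signed with a server-side secret using HMAC. All names here (`issue_token`, `verify_token`, `SECRET_KEY`) are hypothetical, and the AES-256-GCM storage step is left out because it would need a third-party library.

```python
import hashlib
import hmac
import secrets
import time
from typing import Optional

SECRET_KEY = secrets.token_bytes(32)  # server-side secret, never sent to the user


def _sign(payload: str) -> str:
    return hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(user: str, ttl_seconds: int = 600, now: Optional[float] = None) -> str:
    """Build the token that would be embedded in the emailed link."""
    expires = int((time.time() if now is None else now) + ttl_seconds)
    payload = f"{user}:{expires}"
    return f"{payload}:{_sign(payload)}"


def verify_token(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the user name if the token is authentic and unexpired, else None."""
    try:
        user, expires, signature = token.rsplit(":", 2)
    except ValueError:
        return None  # not in user:expires:signature form
    expected = _sign(f"{user}:{expires}")
    # Constant-time comparison avoids leaking how many characters matched.
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return None
    if (time.time() if now is None else now) > int(expires):
        return None
    return user


token = issue_token("alice", ttl_seconds=60, now=1000.0)
```

A production version would also make each token single-use, for example by recording redeemed tokens; this sketch omits that step.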

Built-in communication channels. Phantom ships with Slack, Telegram, email, and a web chat interface with SSE streaming and Web Push notifications. It doesn't ship with Discord, but in a documented case, when asked about Discord support, the agent explained the Discord Bot API, walked the user through creating a Discord application, provided a magic link for token submission, and then spun up the integration itself. The agent literally built a communication channel it wasn't designed with.

What Has Phantom Actually Built in Production?

The GitHub repository documents several production examples that are worth examining because they demonstrate capabilities beyond typical AI chatbot output:

ClickHouse analytics stack. A Phantom was asked to help with data analysis. It independently installed ClickHouse on its own VM, downloaded the full Hacker News dataset (28.7 million rows spanning 2007-2021), loaded the data, built an interactive analytics dashboard with charts, and created a REST API to query it. Then it registered that API as an MCP tool so future sessions and other connected agents could query it. Nobody asked it to build the entire stack. It identified analytics as useful and built it.

Self-monitoring with Vigil integration. A Phantom discovered Vigil, a lightweight open-source system monitor with only 3 GitHub stars. It understood what Vigil does, integrated it into its existing ClickHouse instance, built a sync pipeline that batch-transfers metrics every 30 seconds, and created a real-time monitoring dashboard showing service health, Docker container status, network I/O, disk I/O, system load, and data pipeline health. That's 890,450 rows across 25 metrics, auto-refreshing. The agent built observability for itself from a project almost nobody uses.

Discord integration from scratch. The agent honestly said it couldn't do Discord, then built the entire integration on the spot, including walking the user through the setup process and automatically spinning up the container once the token was submitted.

What Are the Security Considerations?

Phantom's architecture involves a deliberate security trade-off that businesses should understand before deploying. The Docker Compose configuration mounts the Docker socket into the Phantom container, which allows it to spawn sibling containers for sandboxed code execution. This socket grants root-equivalent access to the Docker daemon. A compromised Phantom process could create, modify, or destroy any container on the host.

The recommended mitigation is straightforward: run Phantom on a dedicated VM, not your personal workstation or a shared production server. The $7-20/month VM cost is essentially your security boundary. The credentials are encrypted with AES-256-GCM rather than stored in plain text, and the magic-link authentication for credential collection is a thoughtful security measure. The code is fully open source and auditable.

For businesses, the key takeaway is that Phantom's power comes from giving the agent real system access. Treat it like you would any privileged service account: dedicated infrastructure, minimal network exposure, regular audits.
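One practical audit step is to check which services in a Compose file actually mount the Docker socket. The sketch below does that over an already-parsed Compose structure (a plain dictionary). The example structure is illustrative and not copied from the Phantom repository, and `services_with_docker_socket` is a hypothetical helper.

```python
DOCKER_SOCKET = "/var/run/docker.sock"


def services_with_docker_socket(compose: dict) -> list:
    """Return names of services whose volumes include the Docker socket."""
    flagged = []
    for name, service in compose.get("services", {}).items():
        for volume in service.get("volumes", []):
            # Compose allows short syntax ("src:dst[:mode]") and long syntax (a mapping).
            if isinstance(volume, dict):
                source = volume.get("source", "")
            else:
                source = volume.split(":")[0]
            if source == DOCKER_SOCKET:
                flagged.append(name)
                break
    return flagged


# Illustrative structure only; service and volume names are assumptions.
example_compose = {
    "services": {
        "phantom": {
            "volumes": [
                "/var/run/docker.sock:/var/run/docker.sock",
                "data:/app/data",
            ]
        },
        "qdrant": {
            "volumes": [
                {"type": "volume", "source": "qdrant_data", "target": "/qdrant/storage"}
            ]
        },
    }
}

flagged = services_with_docker_socket(example_compose)
```

Any service this flags should be treated as having root-equivalent control of the host's containers, which is why the dedicated-VM advice applies.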

What Are the Practical Business Use Cases?

Phantom shines in scenarios where you need an AI that works continuously, builds shareable outputs, and gets better over time:

Automated reporting and monitoring. Phantom can pull from your APIs on a schedule, compile reports, and email them to stakeholders from its own email address. Every Friday at 5pm, your team leads get a summary of open issues without anyone writing it.

Data exploration and analysis. Load a dataset and ask questions in plain English. Phantom creates a queryable environment on its VM, translates your questions to SQL, and serves results through a web interface you can share.

Competitive intelligence. Monitor competitor websites for changes on a configurable schedule. Phantom checks, detects changes, and notifies you through Slack or email. No manual checking required.

Shareable dashboards. Build PR velocity trackers, sales pipeline views, or operational metrics dashboards. Phantom serves them on a public URL with auth. Your team bookmarks it and sees live data.

Custom tool creation. Need an MCP tool that queries your internal API? Describe it once. Phantom builds it, registers it, and any Claude Code instance connected to your Phantom can use it immediately.

Onboarding and knowledge management. Phantom reads your codebase, remembers your conventions, and can give new team members specific answers about your architecture instead of generic boilerplate.
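The competitive-intelligence case above reduces to a simple loop: fetch a page on a schedule, fingerprint it, and notify when the fingerprint changes. Here is a minimal sketch of the detection step, assuming the page text has already been fetched. `ChangeDetector` and `fingerprint` are hypothetical names and the URL is a placeholder; fetching, scheduling, and notification are left out.

```python
import hashlib


def fingerprint(page_text: str) -> str:
    # Normalise whitespace so trivial reformatting does not count as a change.
    normalised = " ".join(page_text.split())
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()


class ChangeDetector:
    def __init__(self) -> None:
        self.last_seen = {}  # url -> fingerprint from the previous check

    def check(self, url: str, page_text: str) -> bool:
        """Return True when the page differs from the previous check."""
        new = fingerprint(page_text)
        old = self.last_seen.get(url)
        self.last_seen[url] = new
        # The first visit only establishes a baseline, so it never reports a change.
        return old is not None and old != new


detector = ChangeDetector()
url = "https://competitor.example/pricing"  # placeholder URL
results = [
    detector.check(url, "Pro plan: $49/month"),       # baseline
    detector.check(url, "Pro  plan:  $49/month\n"),   # whitespace only
    detector.check(url, "Pro plan: $59/month"),       # real change
]
```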

What Does It Cost to Run Phantom?

Phantom itself is free and open source. The costs break down into three components:

VM hosting: $7-20 per month for a dedicated cloud VM. This is the agent's computer.

AI model API costs: Depends on your chosen provider. Anthropic Claude ranges from $0.25 to $15 per million tokens depending on the model. Z.AI GLM-5.1 is roughly 15x cheaper than Claude Opus for comparable coding quality. Ollama on your own GPU has no per-token API cost, though you still pay for the hardware and power.

Infrastructure: Qdrant for vector memory runs on the same VM. No additional database costs unless you outgrow the VM.

For a business already paying for AI API access, adding a $10/month VM to get persistent memory, self-evolution, and 24/7 availability is a remarkably small incremental cost.
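A quick back-of-the-envelope calculation shows how the components add up. The VM price and per-million-token rates come from the figures above; the 20 million tokens per month is an assumed workload, and the single blended rate is a simplification, since providers typically bill input and output tokens at different prices.

```python
def monthly_cost(vm_usd: float, tokens_millions: float, usd_per_million: float) -> float:
    """Total monthly cost: fixed VM fee plus usage-based model cost."""
    return round(vm_usd + tokens_millions * usd_per_million, 2)


VM_USD = 10.0            # mid-range of the $7-20 VM price
TOKENS_MILLIONS = 20.0   # assumed monthly usage, not a measured figure

low_end = monthly_cost(VM_USD, TOKENS_MILLIONS, 0.25)   # cheapest quoted rate
high_end = monthly_cost(VM_USD, TOKENS_MILLIONS, 15.0)  # most expensive quoted rate
vm_share_at_high_end = VM_USD / high_end
```

Under these assumptions the total ranges from $15 to $310 per month, and at the high end the VM is only about 3% of the bill, so the choice of model dominates the cost.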

How Does Phantom Compare to Running Claude Code or Similar Tools?

Claude Code, Cursor, and similar tools are excellent for interactive development work on your own machine. Phantom serves a different purpose. It's not meant to replace your IDE assistant. It's meant to be a persistent, autonomous worker that handles tasks you don't want to think about.

The key differences are continuity and sharing. Claude Code starts fresh each session. Phantom carries forward weeks of context. Claude Code builds on your localhost. Phantom builds on a public URL. Claude Code uses the tools it ships with or that you connect to it. Phantom creates new ones.

For businesses, the ideal setup might be both. Use Claude Code or Cursor for active development. Use Phantom for monitoring, reporting, scheduled tasks, and knowledge accumulation.

Should Your Business Adopt Phantom?

Phantom is best suited for teams that have at least one person comfortable with Docker and basic cloud infrastructure, and that have recurring tasks involving data, monitoring, reporting, or research. If your team is currently manually checking competitor sites, building weekly reports by hand, or re-explaining context to AI assistants every morning, Phantom directly addresses those pain points.

The project is relatively new (first public release in early 2026), but the codebase is well structured and fully open source, and the self-evolution mechanism with cross-model validation is a genuine technical innovation. The 1,819 passing tests and the documented production examples suggest this is a serious project, not a weekend prototype.

For Australian businesses exploring AI automation, Phantom represents an interesting middle ground between simple chatbot interfaces and fully custom agent development. You get a self-improving, always-on AI worker for the cost of a small cloud VM and your existing API keys.