Last Updated: April 25, 2026

In April 2026, Uber's CTO Praveen Neppalli Naga confirmed something that sent shockwaves through the tech industry: the company had burned through its entire 2026 AI budget in just four months. The primary culprit was Claude Code, Anthropic's agentic coding assistant. Thousands of developers across hundreds of teams, running around the clock on enterprise codebases, generating tokens at a pace that no annual budget cycle could absorb.

If Uber, one of the most operationally disciplined technology companies on the planet, could not contain AI agent spending, what hope does a growing business have?

The answer might be a 27-billion-parameter open-source model from Alibaba that just changed the economics of autonomous AI forever.

What Makes Qwen 3.6-27B Special for Autonomous Agents?

Qwen 3.6-27B, released by Alibaba's Qwen team in April 2026, is a dense open-weight language model that achieves something remarkable: it outperforms the previous-generation Qwen 3.5-397B flagship (a 397-billion-parameter mixture-of-experts model) across every major coding and agentic benchmark.

The key numbers that matter for autonomous agent fleets:

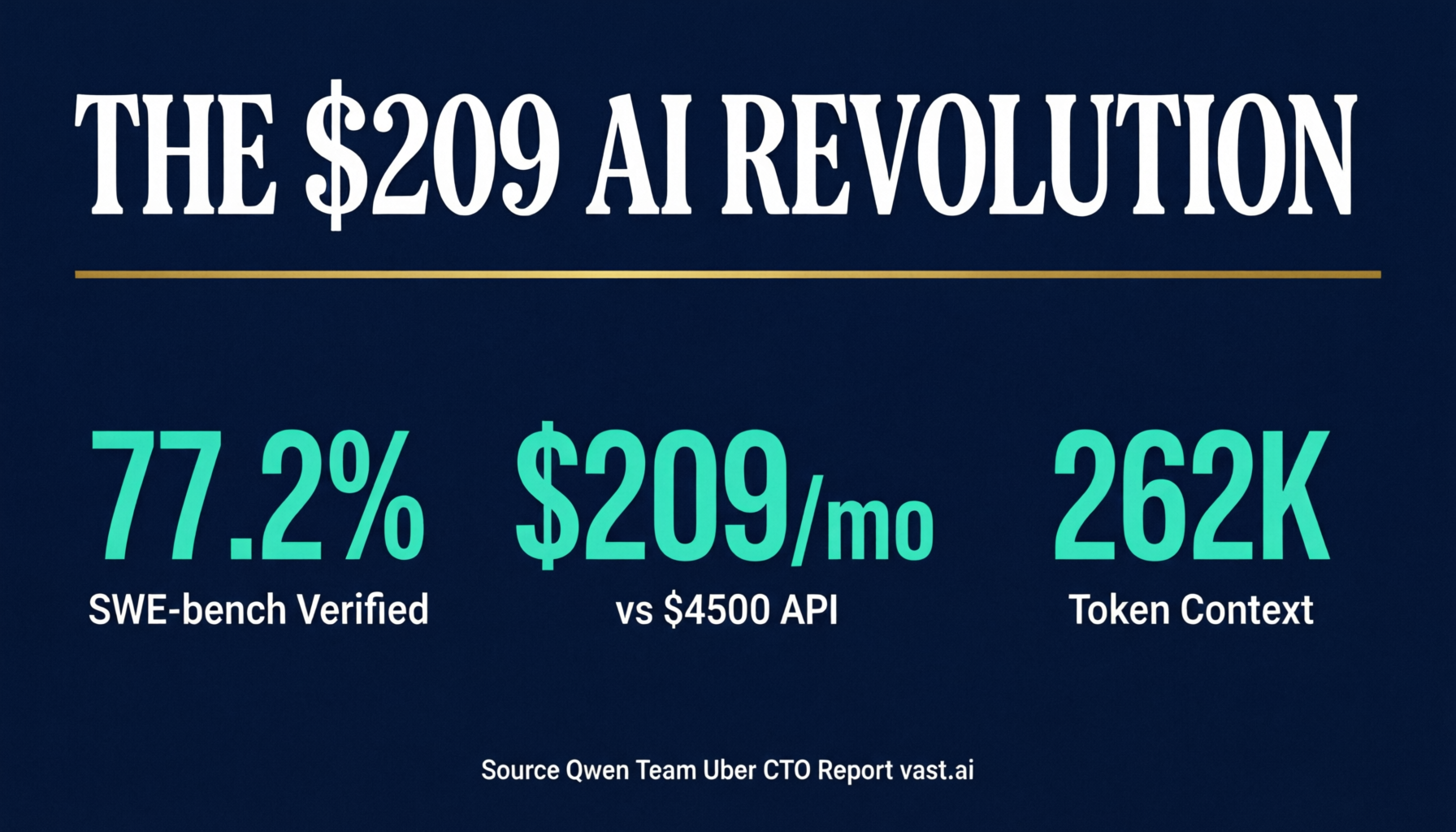

- SWE-bench Verified: 77.2% (up from 75.0% on Qwen 3.5-27B, beating the 397B MoE's 76.2%)

- Terminal-Bench: 59.3% (vs 52.5% on the previous generation)

- SkillsBench: 48.2% (vs 30.0% — a 60% improvement in agent skill execution)

- NL2Repo: 36.2% (vs 27.3% — repository-level code generation)

- Context window: 262,144 tokens natively, extensible to 1,010,000 tokens

- Runs on a single consumer GPU with 24GB+ VRAM (RTX 4090 or RTX 5090)

The SWE-bench score is the headline number. SWE-bench Verified is the community standard for autonomous software engineering. It measures whether an agent can receive a GitHub issue, navigate an unfamiliar codebase, identify the relevant code, write a fix, and pass the existing test suite. A 77.2% score from a model that fits on a single GPU is extraordinary. For context, GPT-4o scores approximately 55% and the original Claude 3.5 Sonnet scored around 49%.

But the SkillsBench improvement is arguably more important for agent fleets. SkillsBench measures how well a model executes multi-step tool-use sequences, which is exactly what autonomous agents do all day. A 60% improvement in skill execution means fewer retries, fewer errors, and more tasks completed on the first attempt.

The Cost Problem: Why Agent Fleets Are Bleeding Companies Dry

The Uber situation is not an outlier. It is the logical endpoint of consumption-based AI pricing applied to autonomous agents.

Here is the math that kills budgets. A single OpenClaw agent running Claude Sonnet 4 in a typical business configuration (email triage, calendar management, lead follow-ups, content creation, daily reporting) generates roughly 900 million tokens per month. At Claude Sonnet pricing of $3/$15 per million tokens, that is approximately $4,500 per month for one agent. One.

Scale that to a fleet of 5 agents across different business functions and you are looking at $22,500 per month. Scale to Uber's thousands of developers and the numbers become astronomical.

OpenClaw's own pricing guides confirm this. Light personal users spend $6-13 per month with budget models. But heavy automation setups with premium models push $100-420 per month per agent. These are not enterprise deployments with thousands of agents. These are small businesses running a handful of automated workflows.

The consumption model is genuinely hard to forecast at scale because autonomous agents do not behave predictably. A coding agent debugging a complex issue might burn through 500,000 tokens in a single session. An agent managing a sales pipeline might use 50,000 tokens one day and 2 million the next when it hits a complex decision tree. You cannot budget for variance like that on a per-token pricing model.

The Qwen 3.6-27B Solution: Fixed Cost Agent Infrastructure

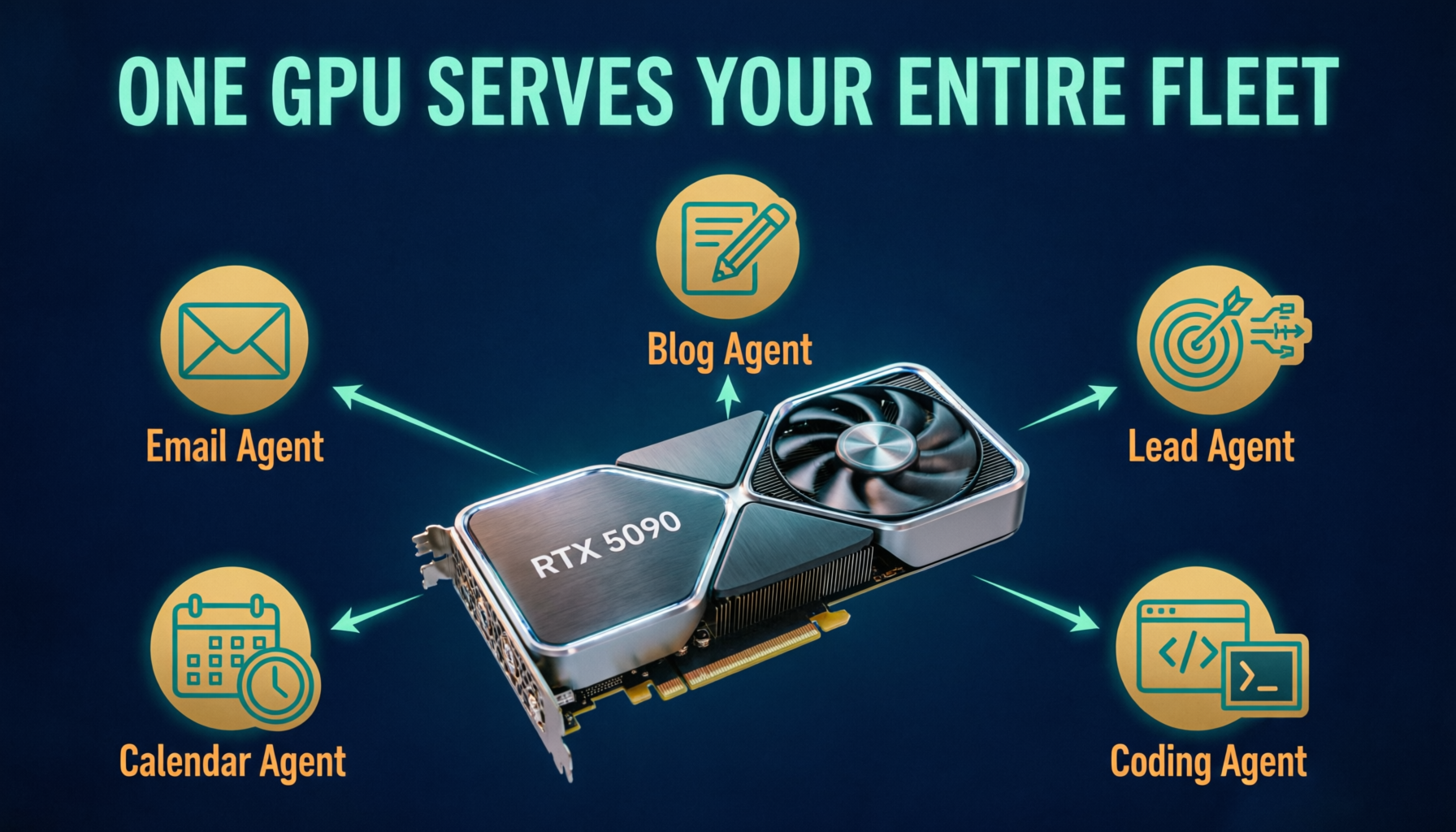

This is where Qwen 3.6-27B changes the game entirely. Instead of paying per token, you run the model on your own hardware for a fixed monthly cost.

Option 1: Cloud GPU (vast.ai)

- RTX 4090 instance: approximately $209 per month (24/7)

- RTX 5090 instance: approximately $300 per month (24/7)

- Serves 6-8 concurrent heavy agent sessions

- Cost per million tokens: approximately $0.03 (vs $3-15 via API)

Option 2: Own your hardware

- RTX 5090 workstation (e.g., Radium Onyx LVL 15): $8,999 AUD one-time

- Running costs: approximately $30-40 per month in electricity

- After 4 months, cheaper than a single Claude-powered agent

- Serves your entire agent fleet forever

The comparison that matters:

| Setup | Monthly Cost | Agents Supported | Tokens/Month | Cost Per Million |

|---|---|---|---|---|

| Claude Sonnet (1 agent) | ~$4,500 | 1 | 900M | ~$5.00 |

| GPT-4o (1 agent) | ~$1,800 | 1 | 900M | ~$2.00 |

| Qwen 3.6-27B on vast.ai | ~$209 | 6-8 | 5-8 Billion | ~$0.03 |

| Qwen 3.6-27B on owned RTX 5090 | ~$35 | 6-8 | Unlimited | ~$0.00 |

One RTX 5090 running Qwen 3.6-27B serves an entire small business agent fleet for the cost of electricity.

The model is 77.2% capable on SWE-bench. It handles tool calling. It manages multi-step workflows. It processes 262,000 tokens of context. And it costs effectively nothing to run after the hardware purchase.

The model is 77.2% capable on SWE-bench. It handles tool calling. It manages multi-step workflows. It processes 262,000 tokens of context. And it costs effectively nothing to run after the hardware purchase.

Why Agent Quality Matters More Than Model Size

There is a natural assumption that bigger models are better for autonomous agents. That assumption is wrong.

What makes an agent successful is not the raw intelligence of the underlying model. It is the model's ability to reliably execute tool-use sequences, follow multi-step instructions, recover from errors, and stay within the guardrails of its assigned role.

Qwen 3.6-27B's SkillsBench improvement (48.2% vs 30.0% on the previous generation) is the metric that matters most for agent fleets. This measures exactly the capability that autonomous agents need: executing complex tool sequences without getting confused, stuck, or hallucinating.

A smaller, highly capable dense model often outperforms a larger mixture-of-experts model for agent work because:

- Dense models have more consistent latency (no expert routing overhead)

- Tool calling is more reliable when the model has full access to all parameters

- Context window utilization is more predictable

- Memory requirements are fixed and known in advance

The Qwen team's achievement is proving that a well-trained 27B dense model can match or exceed a 397B MoE model on the specific benchmarks that matter for autonomous agents. That is not a marginal improvement. That is a paradigm shift in how we think about agent infrastructure.

How to Run Qwen 3.6-27B With OpenClaw

For businesses already running OpenClaw agents on expensive API models, the migration path is straightforward.

Step 1: Deploy the model. Use vLLM (the standard inference server) on either a vast.ai cloud GPU or your own hardware. A single command launches the model with an OpenAI-compatible API endpoint.

Step 2: Configure OpenClaw. Add the Qwen model as a provider in your openclaw.json configuration file, pointing to your vLLM endpoint. Set it as the primary model with your existing API model as a fallback for complex tasks.

Step 3: Route traffic intelligently. Use Qwen 3.6-27B for your daily agent workloads (email, scheduling, content, lead management, reporting). Route only the most complex reasoning tasks to premium API models. In practice, 90%+ of agent tasks do not require a frontier model.

Step 4: Monitor and optimize. Track which tasks succeed on Qwen vs which need the API fallback. Over time, as the model improves and you fine-tune your prompts, the API fallback percentage shrinks toward zero.

The beauty of this approach is that you do not abandon API models entirely. You use them surgically for the tasks that genuinely need frontier capability, while Qwen handles the daily work that currently generates most of your token spend.

What About DeepSeek V4 Flash?

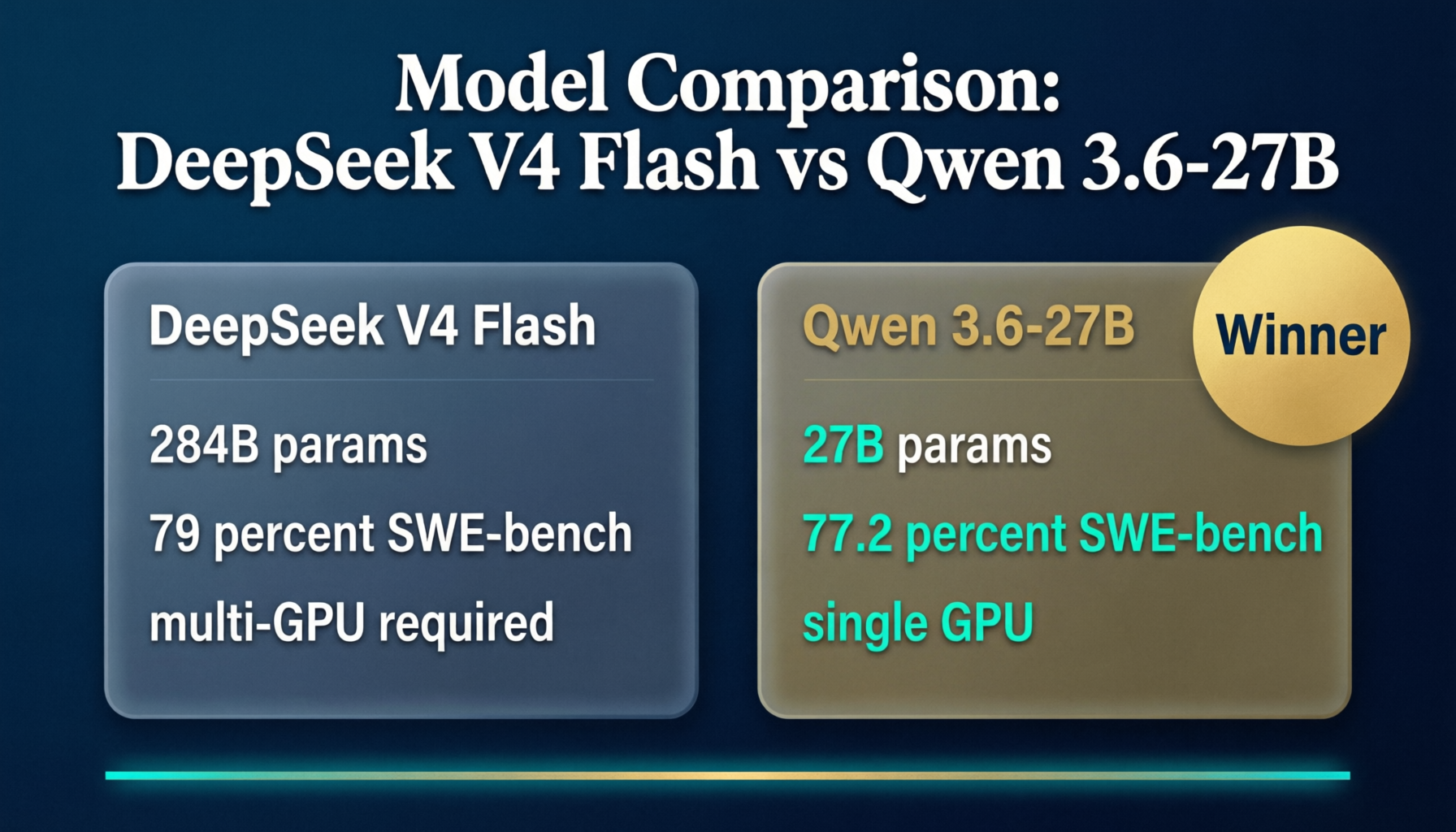

DeepSeek V4 Flash dropped the same week as Qwen 3.6-27B, and it is tempting to compare them. V4 Flash is a Mixture-of-Experts model with 284 billion total parameters (13 billion active per token), a native 1 million token context window, and scores 79.0% on SWE-bench Verified. On paper, it outperforms Qwen 3.6-27B.

But the hardware reality tells a different story.

The problem is that V4 Flash's 284 billion total parameters need to be stored somewhere, even though only 13 billion are active per token. Running just the active experts on a single RTX 5090 at Q4 quantization technically works (around 22-26GB VRAM), but performance drops to 5-15 tokens per second because the model must constantly load and switch between expert weights that do not fit in VRAM. That is fine for interactive chatting with one user. It is not fine for an agent fleet that needs consistent throughput.

To run V4 Flash properly with all experts resident in GPU memory, you need approximately 142GB of VRAM at Q4 quantization. That means 2x H100 80GB or 4x A100 GPUs, costing $1,500 to $3,000 per month on vast.ai. That is 7 to 14 times more expensive than running Qwen 3.6-27B on a single GPU.

Here is the head-to-head:

- SWE-bench Verified: Qwen 77.2% vs V4 Flash 79.0% (1.8 point gap)

- Minimum GPU: Qwen needs 1x RTX 4090 (24GB) vs V4 Flash needs 1x RTX 5090 (32GB) for degraded single-GPU mode, or multi-GPU for proper performance

- Monthly cost: Qwen at $209/month vs V4 Flash at $1,500-3,000/month for full performance

- Throughput: Qwen at 80-170 tok/s vs V4 Flash at 5-15 tok/s (single GPU) or 40-60 tok/s (multi-GPU)

- Context window: Qwen at 262K (extensible to 1M) vs V4 Flash at 1M native

The 1.8 point SWE-bench advantage does not justify 7x the infrastructure cost for most agent workloads. Qwen 3.6-27B is a dense model, meaning all parameters are always loaded in VRAM with no expert switching overhead. That translates to consistent, predictable latency, which is exactly what an agent fleet needs. MoE models like V4 Flash have variable per-token latency depending on which experts activate, making throughput less predictable under load.

The smart play: run Qwen 3.6-27B on your own GPU for the fleet, and use the DeepSeek V4 Flash API at $0.14 per million tokens for the rare tasks that genuinely need a 1 million token context window or frontier-level reasoning. Most months, that API bill stays under $20.

The Data Sovereignty Bonus

Running your own model on your own hardware solves a problem that API-only setups cannot: data sovereignty.

Every prompt you send to Claude, GPT, or Gemini leaves your infrastructure. For businesses in healthcare, legal, financial services, and government, this is a dealbreaker. You literally cannot use cloud AI for certain types of client work.

With Qwen 3.6-27B on your own hardware, your prompts, your client data, and your agent interactions never leave your building. For Australian businesses subject to the Privacy Act 1988 and the Australian Privacy Principles, this is not a nice-to-have. It is a compliance requirement for certain data categories.

This is also the sell for agent fleet providers. If you are building and managing OpenClaw agent fleets for clients, offering a self-hosted Qwen option means you can serve industries that cannot use cloud AI. Healthcare practices, legal firms, financial advisors, government contractors. These are the clients with the highest willingness to pay and the strongest need for data sovereignty.

The Hardware Reality in Australia

For Australian businesses, the hardware path is particularly attractive because there is no peer-to-peer GPU marketplace operating locally. Vast.ai, RunPod, and Lambda Labs are all US or EU only. But you can buy an RTX 5090 workstation from Australian builders like Radium PCs (the Onyx LVL 15 at $8,999 AUD) or Aftershock PC (the Novacore LVL 11 at $12,995 AUD with 64GB RAM).

The RTX 5090 gives you 32GB VRAM, which runs Qwen 3.6-27B at full BF16 precision with no quantization needed. That means you get the full quality of the model at approximately 130-170 tokens per second. Fast enough for real-time agent interactions.

For businesses that prefer not to manage hardware, vast.ai's US West Coast instances add about 150ms of latency from Australia. For LLM inference, where you are already waiting 1-3 seconds for token generation, that extra 150ms is imperceptible. The vast.ai path costs approximately $209 per month for an RTX 4090 running 24/7.

The Future: Why This Gets Better Every Quarter

The AI hardware market is entering a period of rapid price competition. Intel's Arc Pro B60 Dual offers 48GB VRAM for $1,299 AUD. Apple's Mac Studio M5 Ultra (expected June 2026) will offer 256GB unified memory. NVIDIA's RTX 6090 (expected H1 2027) will likely ship with 48GB+ VRAM at the flagship tier.

Meanwhile, open-source models are improving faster than proprietary ones on agentic benchmarks. Qwen 3.6-27B is already competitive with Claude Sonnet on SWE-bench. The next generation will close the gap further.

The convergence of cheaper hardware and better open-source models means that running your own agent fleet will be dramatically cheaper in 12 months than it is today. But the businesses that start building their self-hosted infrastructure now will have a year of learning, optimization, and cost savings that late adopters cannot replicate.

The Bottom Line for Builders and Business Owners

If you are running OpenClaw agents on API models and watching your token spend climb every month, Qwen 3.6-27B is your exit ramp. It is not a compromise. It is a 77.2% SWE-bench model that runs on consumer hardware for the cost of electricity.

The Uber budget blowout is not a cautionary tale about AI agents. It is a cautionary tale about paying per token for agents that run 24/7. The solution is not fewer agents. The solution is cheaper agents. And Qwen 3.6-27B just made that possible.