Last Updated: April 2026

Most Australian businesses using AI today are barely scratching the surface. They have ChatGPT open in a browser tab, maybe a custom prompt or two saved in a notes file. That is the starting line, not the finish.

The real journey runs from simple prompts through custom agents all the way to what the industry is now calling "super agents": AI systems that can spin up their own sandboxes, write and execute code, manage multi-step workflows, and operate with genuine autonomy.

This guide walks through every stage of that journey, with practical advice for Australian businesses ready to move beyond basic chat.

What Is a Super Agent in AI?

A super agent is an AI system that operates with significant autonomy. Unlike a basic chatbot that responds to one prompt at a time, a super agent can plan multi-step tasks, write and run its own code inside isolated sandbox environments, connect to external tools and APIs, recover from errors without human intervention, and work on long-running projects that span hours or days.

The key distinction is the sandbox. When an agent can spin up its own execution environment, install packages, run scripts, inspect the results, and iterate, it crosses a threshold from "helpful assistant" to "capable worker." That is super agent territory.
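The write, run, inspect, iterate cycle fits in a few lines of Python. This is a minimal sketch, not any vendor's implementation. `propose_code` is a hypothetical stand-in for a model call. A separate Python process in a throwaway temporary directory stands in for a real sandbox, and it offers no security isolation on its own.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Optional


def run_in_scratch_dir(code: str, timeout: float = 10.0) -> subprocess.CompletedProcess:
    """Run `code` as a separate Python process inside a fresh temp directory.

    This is process separation only, not a security boundary; a real
    sandbox would use a container or a hosted sandbox service.
    """
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "task.py"
        script.write_text(code)
        return subprocess.run(
            [sys.executable, str(script)],
            cwd=workdir,
            capture_output=True,
            text=True,
            timeout=timeout,
        )


def propose_code(task: str, last_error: Optional[str]) -> str:
    """Hypothetical stand-in for a model call that writes code for `task`.

    The first draft contains a bug; after seeing an error it returns a fix,
    mimicking how an agent uses feedback from its sandbox.
    """
    if last_error is None:
        return "print(sum(int(x) for x in ['1', '2', 'three']))"
    return "print(sum(int(x) for x in ['1', '2', '3']))"


def solve(task: str, max_attempts: int = 3) -> str:
    """Write, run, inspect, iterate until the code succeeds or we give up."""
    last_error: Optional[str] = None
    for _ in range(max_attempts):
        result = run_in_scratch_dir(propose_code(task, last_error))
        if result.returncode == 0:
            return result.stdout.strip()  # success: return the output
        last_error = result.stderr        # failure: feed the traceback back
    raise RuntimeError(f"gave up after {max_attempts} attempts:\n{last_error}")


print(solve("add up the numbers 1, 2 and 3"))  # prints 6
```

In a real super agent, the model would receive both the task and the traceback on each retry. The execution environment would be a container or hosted sandbox rather than a local process.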

Think of it this way: a basic agent answers questions. A custom agent follows instructions and uses tools. A super agent builds solutions.

How Did We Get Here? The Evolution of AI Agents

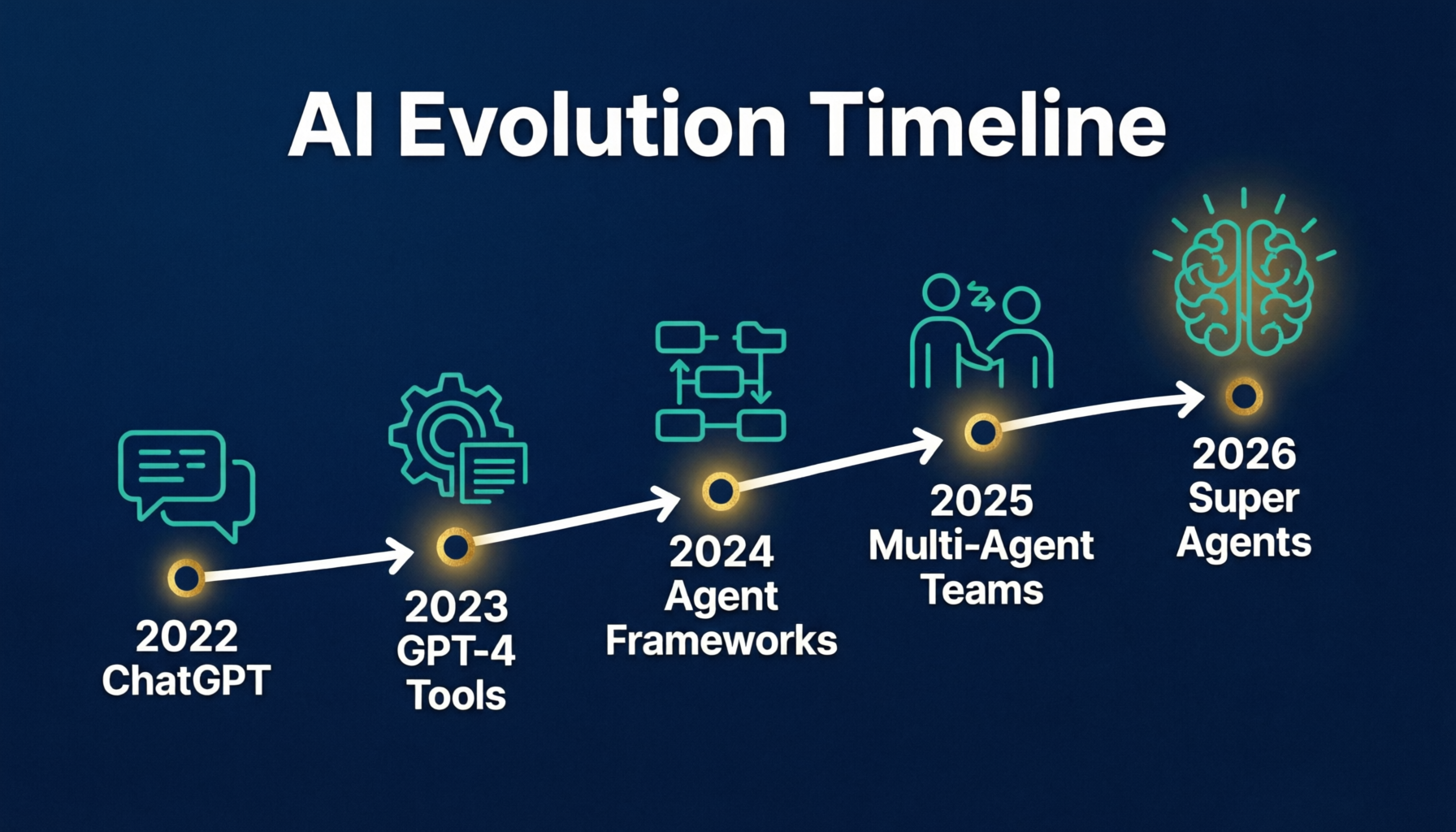

The path to super agents has moved through clear stages over the past three years:

Stage 1: Prompt Engineering (2023-2024)

Businesses discovered ChatGPT and learned that better prompts produce better outputs. Custom instructions and saved prompts became common. This was valuable but limited to single-turn interactions.

Stage 2: Custom GPTs and Assistants (2024-2025)

OpenAI's custom GPTs let users package prompts, knowledge files, and tool access into shareable agents. Anthropic introduced Claude Projects. Google launched Gemini Gems. These were more capable but still constrained within their platform walls.

Stage 3: Agent Frameworks (2025)

The OpenAI Agents SDK, Anthropic's Claude Code, LangChain, CrewAI, and similar frameworks gave developers the tools to build agents that could use multiple tools, follow multi-step plans, and maintain context across complex tasks.

Stage 4: Sandbox Agents and Super Agents (2026)

OpenAI's April 2026 Agents SDK update introduced native sandbox execution. Agents can now spin up isolated code environments, run commands, inspect files, edit code, and work on long-horizon tasks safely. This is the super agent era.

What Are AI Agent Sandboxes and Why Do They Matter?

An AI agent sandbox is an isolated execution environment where an agent can write, run, and test code without risking the host system. Think of it as giving your AI agent its own computer to work on.

OpenAI's April 2026 update to the Agents SDK brought sandbox support to the mainstream. The update includes a model-native harness that lets agents work with files and tools directly, native sandbox execution compatible with Docker and other providers, and support for long-horizon tasks that require sustained work across many steps.
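Long-horizon work means an agent has to survive interruptions. A common pattern is to checkpoint progress after every step so that a restarted run resumes where it stopped. The sketch below reflects our own assumptions and is not taken from the Agents SDK. The step names and the JSON checkpoint file are hypothetical.

```python
import json
from pathlib import Path
from typing import Callable, Dict, List


def run_with_checkpoints(
    steps: List[str],
    do_step: Callable[[str], str],
    checkpoint: Path,
) -> Dict[str, str]:
    """Run steps in order, saving each result to `checkpoint` as it completes.

    If the checkpoint file already exists, finished steps are skipped, so a
    crash halfway through a multi-hour task does not mean starting over.
    """
    done: Dict[str, str] = {}
    if checkpoint.exists():
        done = json.loads(checkpoint.read_text())
    for step in steps:
        if step in done:
            continue  # finished in an earlier run
        done[step] = do_step(step)
        checkpoint.write_text(json.dumps(done))  # persist before moving on
    return done
```

Writing the checkpoint after every step costs a little I/O. In exchange, the agent only ever loses the step it was working on when something fails.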

Before sandboxes, agents were limited to API calls and text manipulation. With sandboxes, they can install software, run data analysis pipelines, build and test applications, process files locally, and iterate on solutions autonomously.
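A throwaway Docker container is one way to give an agent "its own computer" without touching the host. The sketch below only builds the `docker run` command. The flags are standard `docker run` options. The image name, resource limits, and mount path are illustrative assumptions. This is a hand-rolled approach, not the Agents SDK's own sandbox interface.

```python
import shlex
from typing import List


def sandbox_command(
    workdir: str,
    script: str,
    image: str = "python:3.12-slim",
    memory: str = "512m",
    cpus: str = "1",
) -> List[str]:
    """Build a `docker run` command that executes `script` in isolation."""
    return [
        "docker", "run",
        "--rm",                    # delete the container when it exits
        "--network", "none",       # no network access from inside
        "--memory", memory,        # cap RAM
        "--cpus", cpus,            # cap CPU
        "-v", f"{workdir}:/work",  # share only the agent's scratch folder
        "-w", "/work",             # start inside that folder
        image,
        "python", script,
    ]


print(shlex.join(sandbox_command("/tmp/agent-job-42", "analyse.py")))
```

The agent would run the resulting list with `subprocess.run(cmd, capture_output=True, text=True, timeout=...)` and read the output back. With `--network none`, any packages must be installed in the image beforehand.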

For Australian businesses, this means an agent can handle tasks that previously required a developer. A super agent could ingest your CRM export, clean and analyse the data in its sandbox, generate a report with visualisations, and deliver the finished product without any human touching code.
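To make the CRM example concrete, here is the kind of script a super agent might write and run in its sandbox. The column names (`email`, `stage`, `value`) and the cleaning rules are hypothetical. A real export would need its own rules.

```python
import csv
import io
from collections import defaultdict
from typing import Dict


def pipeline_by_stage(csv_text: str) -> Dict[str, float]:
    """Clean a CRM export and total deal value per pipeline stage."""
    seen_emails = set()
    totals: Dict[str, float] = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        email = row["email"].strip().lower()
        if not email or email in seen_emails:
            continue  # drop blank and duplicate contacts
        seen_emails.add(email)
        stage = row["stage"].strip().title() or "Unknown"
        value = row["value"].replace("$", "").replace(",", "").strip()
        totals[stage] += float(value) if value else 0.0
    return dict(totals)


export = """email,stage,value
ann@example.com,lead,"$1,200"
ANN@example.com ,Lead,$1200
bob@example.com,proposal,5000
,lead,900
"""
print(pipeline_by_stage(export))
```

The duplicate "ANN@example.com" row and the row with no email are dropped before totalling. The report and charts would then be built from these cleaned totals.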

What Is the Difference Between Workspace Agents and Super Agents?

In April 2026, OpenAI launched Workspace Agents inside ChatGPT, available on Business, Enterprise, Edu, and Teachers plans. These are essentially evolved custom GPTs: they can connect to tools like Slack and Salesforce, automate recurring tasks, and work across team contexts. They are free until May 6, 2026, then move to credit-based pricing.

Workspace Agents are convenient. They give consistency and integrate with your existing ChatGPT workflow. But they come with a ceiling. You operate within OpenAI's boundaries. You cannot optimise beyond what the platform allows. You cannot choose your own model, swap in a cheaper open-source option for routine tasks, or customise the infrastructure.

Super agents built with the Agents SDK face no such limits. They can use any model, run any code, connect to any service, and operate on your own infrastructure. That freedom is the difference between renting an office and owning the building.

The practical takeaway for Australian businesses: start with Workspace Agents for simple team automation, but plan your migration path to super agents for anything mission-critical or cost-sensitive.

How Can Businesses Manage the Cost of Frontier AI Models?

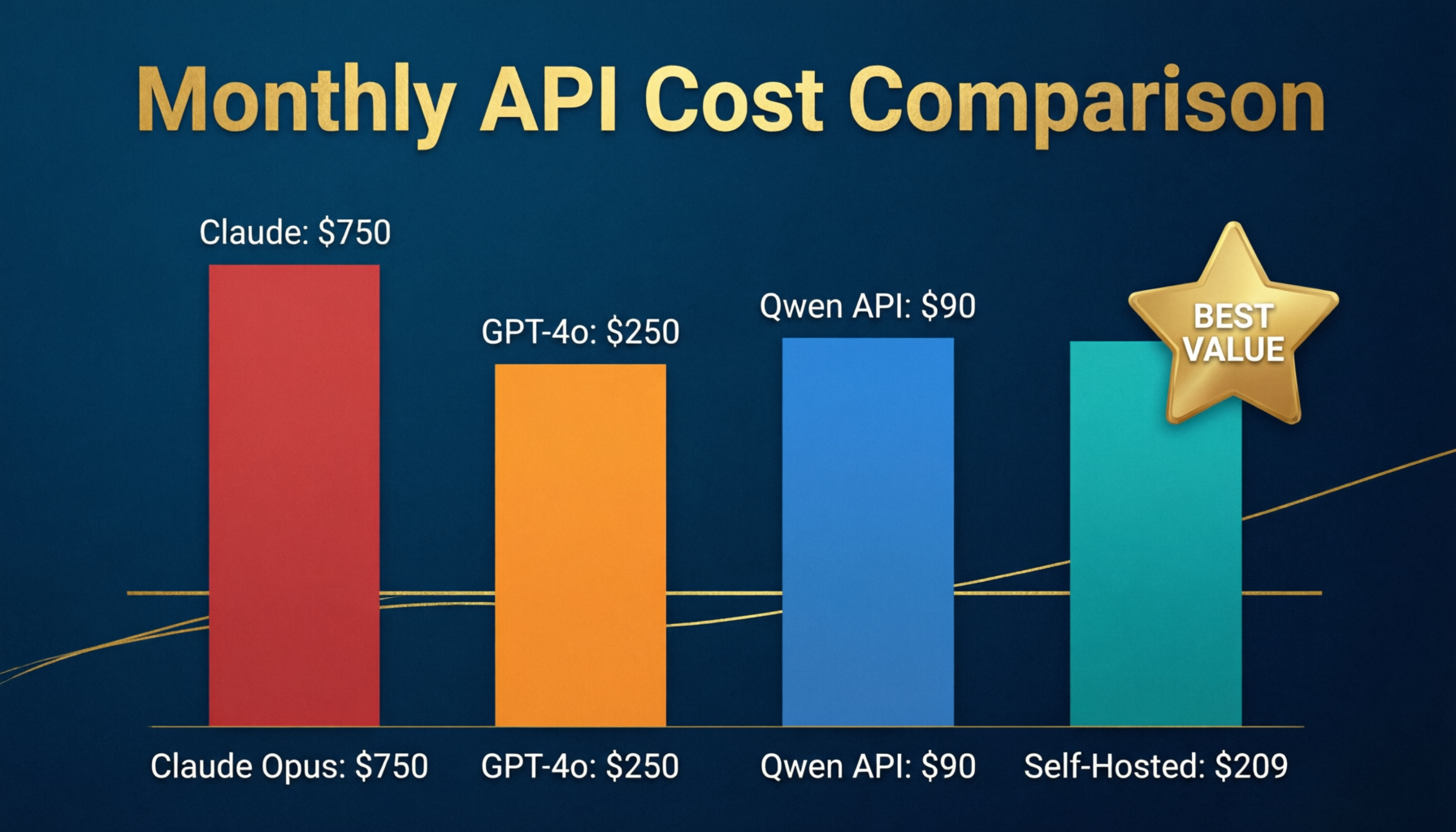

The economics of AI agents are becoming a serious boardroom conversation, and for good reason. In April 2026, Uber's CTO revealed that the company had blown through its entire 2026 AI budget just months into the year, driven primarily by spending on Anthropic's Claude models for development work. And this is a company that invests approximately $3.4 billion in research and development.

Uber is not a small company. If frontier AI costs are unsustainable for a $150 billion tech giant, they are certainly a concern for Australian businesses with more modest budgets.

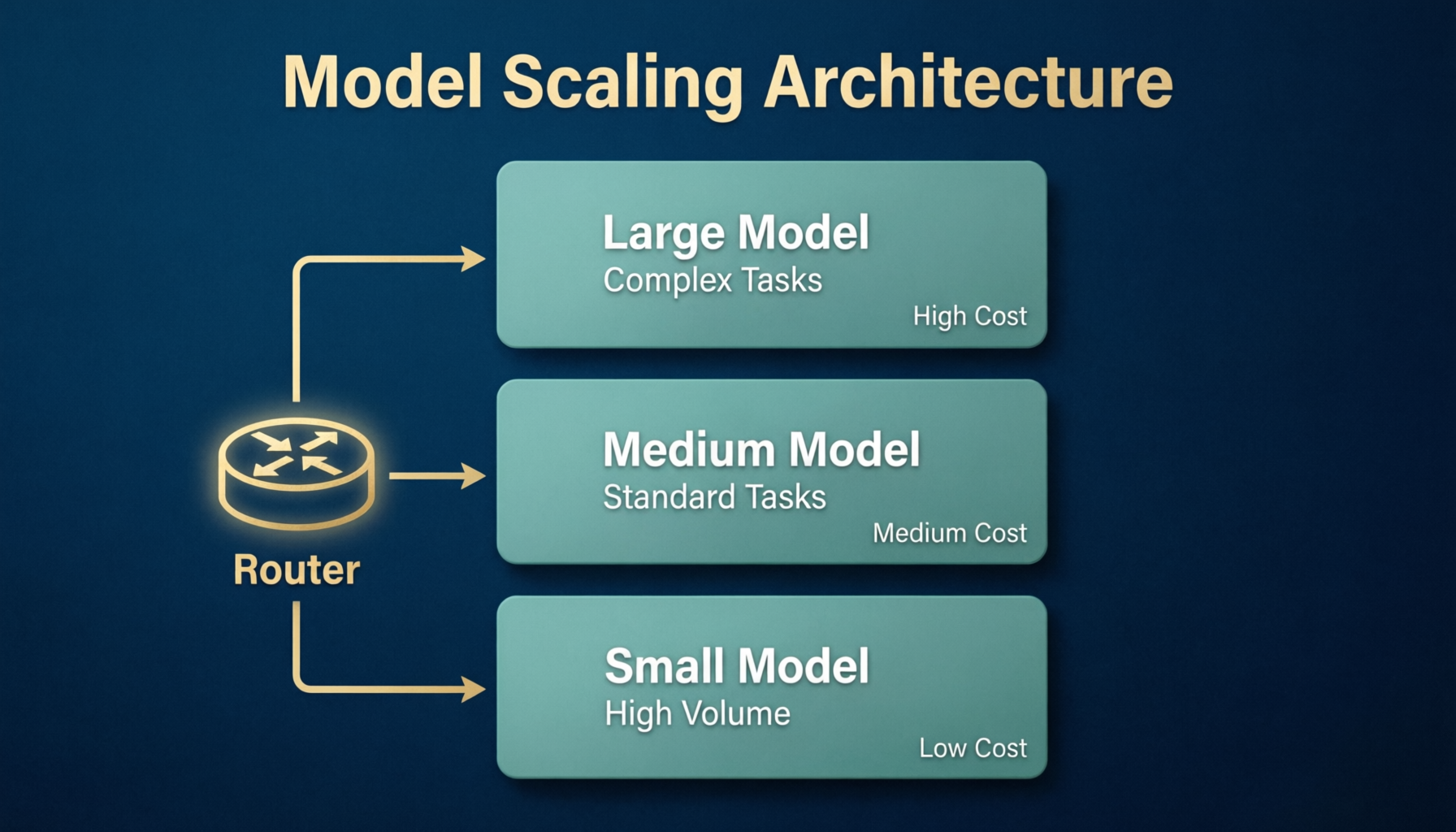

The emerging solution is a multi-model architecture. Use expensive frontier models, such as Claude Opus or the top-tier GPT and Gemini models, for the thinking work: strategy, complex reasoning, creative problem-solving. Then hand off execution to cheaper, faster models, including open-source options running on your own infrastructure.

This tiered approach can plausibly reduce AI costs by 60-80% compared with running everything on frontier models, depending on how much of the workload is routine. Open-source models like Llama, Mistral, and Qwen are now capable enough for most execution tasks: data formatting, file processing, routine customer interactions, and report generation.
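The routing decision itself can be very small. This minimal sketch assumes tasks arrive already labelled with a type. The model names are placeholders, and the task categories are taken from the paragraph above.

```python
from typing import Dict

# Placeholder names: substitute the frontier and execution models you use.
FRONTIER_MODEL = "frontier-model"
EXECUTION_MODEL = "open-weight-model"

# Thinking work goes to the expensive tier; routine execution goes cheap.
TASK_TIERS: Dict[str, str] = {
    "strategy": FRONTIER_MODEL,
    "complex_reasoning": FRONTIER_MODEL,
    "creative_problem_solving": FRONTIER_MODEL,
    "data_formatting": EXECUTION_MODEL,
    "file_processing": EXECUTION_MODEL,
    "routine_customer_reply": EXECUTION_MODEL,
    "report_generation": EXECUTION_MODEL,
}


def pick_model(task_type: str) -> str:
    """Return the model for a task, defaulting unknown work to the frontier tier."""
    return TASK_TIERS.get(task_type, FRONTIER_MODEL)
```

Sending unrecognised work to the frontier tier trades some cost for safety. A more cost-focused team might flip the default and promote tasks only when the cheap model's output fails review.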

For an Australian business spending $2,000-5,000 per month on AI APIs, this architecture could cut that to $500-1,500 while maintaining the same quality of high-level output.
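The arithmetic behind estimates like this is simple. If a fraction s of current spend moves to a model priced at a ratio r of the frontier rate, the new bill is spend × (1 − s + s·r). The figures below are hypothetical: 80% of a $3,000 monthly bill moves to a model at one-twentieth of the frontier price. Your own split and price ratio will differ.

```python
def tiered_monthly_cost(current_spend: float, share_moved: float, price_ratio: float) -> float:
    """Estimate spend after moving `share_moved` of current spend to a cheaper model.

    price_ratio is cheap price / frontier price (e.g. 0.05 means one-twentieth).
    """
    stays_on_frontier = current_spend * (1 - share_moved)
    moved_to_cheap = current_spend * share_moved * price_ratio
    return stays_on_frontier + moved_to_cheap


new_cost = tiered_monthly_cost(3000, share_moved=0.8, price_ratio=0.05)
saving = 1 - new_cost / 3000
print(f"${new_cost:,.0f} per month, a {saving:.0%} saving")  # $720 per month, a 76% saving
```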

What Does a Multi-Model Agent Architecture Look Like?

The most sustainable agent architecture in 2026 uses multiple models in a coordinated hierarchy:

The Orchestrator (Frontier Model)

A frontier model such as Claude Opus or a top-tier GPT model handles planning, reasoning, and decision-making. It breaks complex tasks into steps, decides which tools to use, and reviews outputs for quality. This is your expensive, capable brain.

The Workers (Efficient Models)

Smaller, faster models handle execution. They process data, format outputs, run repetitive tasks, and carry out the plan. These can be OpenAI's mini models, Claude Haiku, or open-source models running locally.

The Sandbox (Execution Environment)

Code runs in isolated containers. The agent writes scripts, tests them, and iterates. This is where the actual work happens, without risking your production systems.

The Memory (Context Store)

Long-term memory systems store conversation history, learned preferences, and accumulated knowledge. This prevents the agent from starting fresh each time and reduces the tokens spent re-establishing context.
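The four components can be wired together in a small skeleton. Everything here is hypothetical. `plan` and `execute` stand in for calls to the orchestrator and worker models, and the memory is an in-process dictionary rather than a real context store. The orchestrator's quality review is omitted for brevity.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Memory:
    """Toy context store: remembers facts between runs of the same agent."""
    facts: Dict[str, str] = field(default_factory=dict)

    def recall(self) -> str:
        return "; ".join(f"{k}={v}" for k, v in sorted(self.facts.items()))


def plan(goal: str, context: str) -> List[str]:
    """Orchestrator stand-in: a frontier model call in practice."""
    steps = ["load data", "summarise", "format report"]
    if "format=pdf" in context:
        steps.append("export pdf")  # a remembered preference changes the plan
    return steps


def execute(step: str) -> str:
    """Worker stand-in: a cheaper model or a sandboxed script in practice."""
    return f"done: {step}"


def run_agent(goal: str, memory: Memory) -> List[str]:
    context = memory.recall()  # reuse stored context instead of re-explaining
    results = [execute(step) for step in plan(goal, context)]
    memory.facts["last_goal"] = goal
    return results
```

The expensive model is called once per goal to produce the plan. The cheap workers are called once per step, which is where most of the volume sits.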

This architecture mirrors how human organisations work. You have senior people making strategic decisions and junior people executing. No company puts its CEO on data entry. The same principle applies to AI.

Which AI Agent Platforms Should Australian Businesses Watch?

The agent landscape in 2026 has several key players, each with different strengths:

OpenAI Agents SDK + Codex

The April 2026 sandbox update makes this the most accessible path to super agents. It offers strong Python support and excellent documentation, with the weight of OpenAI's ecosystem behind it. Workspace Agents offer a gentler on-ramp for less technical teams.

Anthropic Claude Code

Anthropic's coding agent runs in terminals and IDEs. It is particularly strong at software development tasks and has attracted major enterprise clients, including Uber. However, the cost sustainability question looms large.

Google Gemini Agents

Google's agent framework integrates deeply with Google Cloud and Workspace. It suits businesses already in the Google ecosystem best, but it is less flexible than the OpenAI SDK for custom infrastructure.

Open Source: LangChain, CrewAI, AutoGen

These frameworks offer maximum flexibility and no vendor lock-in. They require more engineering effort but give you full control over models, costs, and data privacy. They are ideal for businesses with development resources.

Local Models: Llama, Mistral, Qwen

Running models on your own hardware is increasingly viable. Australian businesses with data sovereignty requirements or cost concerns should explore this path. At sustained volumes, a modern GPU server can run capable models for a fraction of cloud API costs.
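Tools such as Ollama expose a local HTTP API, which is one way to keep data on your own hardware. The sketch below builds the request. It assumes Ollama's `/api/chat` endpoint on its default port 11434 and a locally pulled model named `llama3`; check both against your own setup. The prompt never leaves the machine.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # Ollama's default local address


def build_local_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a chat request for a model served on this machine."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for a single JSON response
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3") -> str:
    """Send the request; requires a running Ollama server with `model` pulled."""
    with urllib.request.urlopen(build_local_request(prompt, model), timeout=120) as resp:
        return json.loads(resp.read())["message"]["content"]
```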

Why Are Australian Businesses Uniquely Positioned for AI Agents?

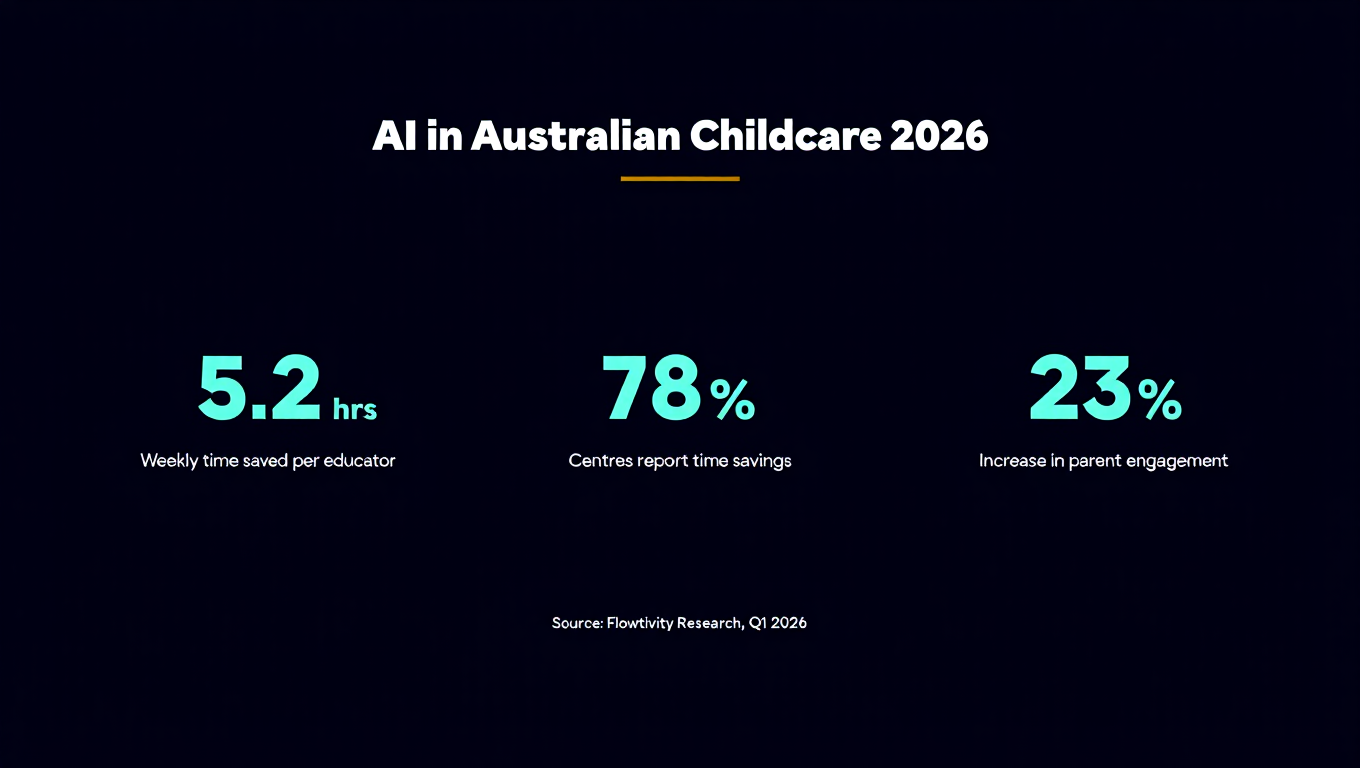

Australia's AI adoption is accelerating. According to the Australian Government's AI Adoption Tracker, between 29% and 37% of Australian SMEs are now using AI tools in some capacity. Professional services lead at 79% adoption, followed by financial services.

Several factors make Australian businesses well positioned for the super agent transition:

- High labour costs drive automation urgency. Australian wages are among the highest globally. Any AI system that reduces manual work delivers outsized ROI compared to lower-cost labour markets.

- Data sovereignty requirements create on-premise demand. Many Australian businesses, especially in healthcare, finance, and government, face strict data residency rules. Multi-model architectures with local execution directly address these requirements.

- Geographic isolation limits talent access. Australia cannot easily import AI talent at scale. Super agents that augment existing teams are more practical than trying to hire scarce specialists.

- Conservative business culture favours proven approaches. Australian businesses tend to prefer demonstrated results over hype. The prototype-first approach to AI adoption, showing working solutions before asking for investment, aligns perfectly with this mindset.

The opportunity is particularly strong for growing businesses with 11-200 employees. These organisations are large enough to benefit from automation but small enough that AI agents can transform entire workflows rather than just optimise edges.

How Do You Upgrade From ChatGPT Prompts to a Proper AI Agent?

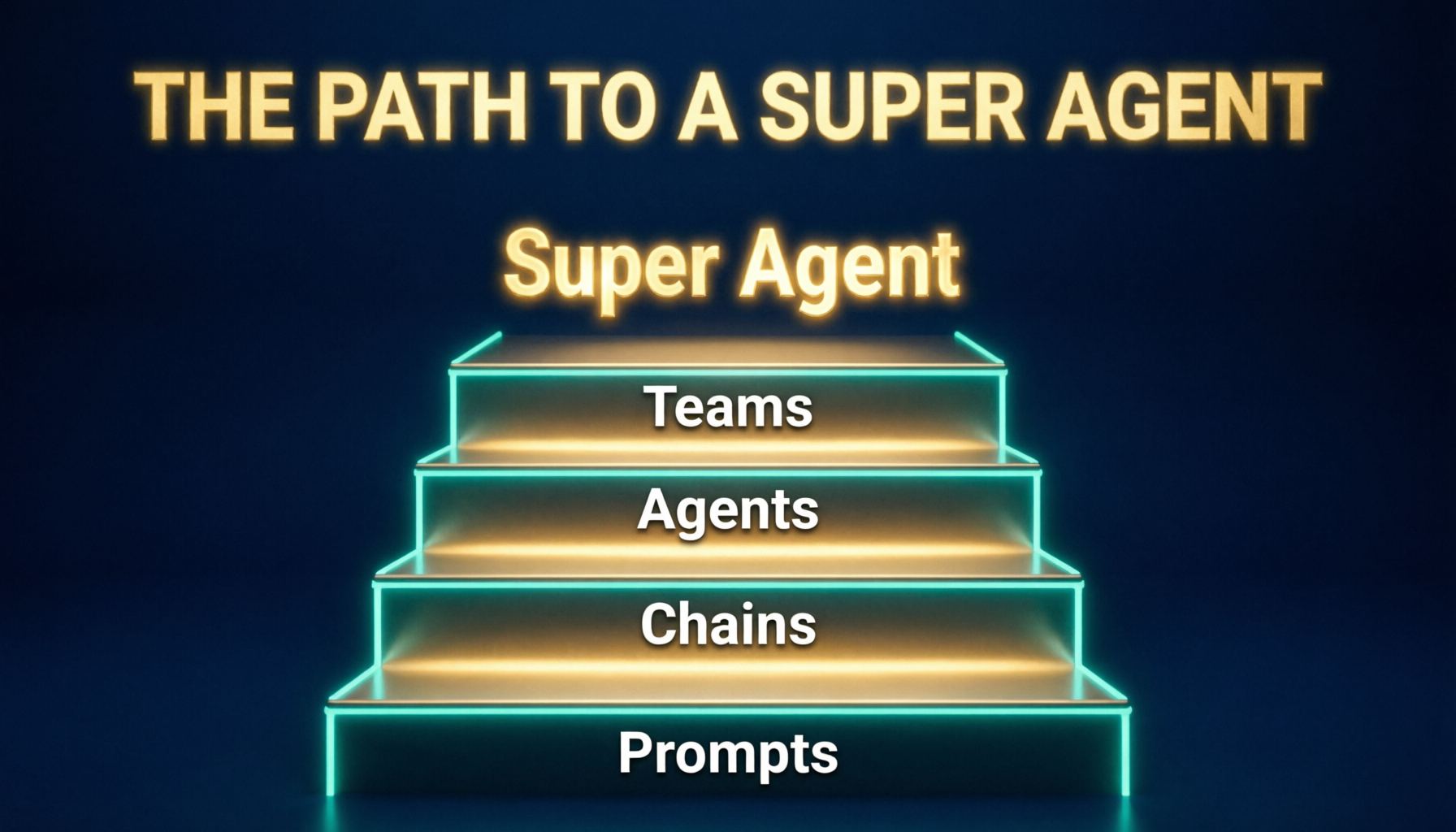

The journey from prompt to super agent follows a clear progression:

Step 1: Audit Your Current AI Usage

Document every place your team uses ChatGPT or similar tools. What prompts do they reuse? What tasks are repetitive? Where do they copy-paste results into other systems? These patterns reveal your agent opportunities.
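If your team's prompts are exported or logged somewhere, you can automate part of the audit. This sketch assumes a hypothetical list of logged prompts. It counts near-identical prompts after normalising case and whitespace, and any prompt reused several times is an agent candidate.

```python
import re
from collections import Counter
from typing import List, Tuple


def normalise(prompt: str) -> str:
    """Lower-case and collapse whitespace so trivial variations match."""
    return re.sub(r"\s+", " ", prompt.strip().lower())


def reused_prompts(log: List[str], min_uses: int = 3) -> List[Tuple[str, int]]:
    """Return prompts used at least `min_uses` times, most frequent first."""
    counts = Counter(normalise(p) for p in log)
    return [(p, n) for p, n in counts.most_common() if n >= min_uses]


log = [
    "Summarise this call transcript into CRM notes",
    "summarise this call   transcript into CRM notes",
    "Summarise this call transcript into CRM notes ",
    "Draft a follow-up email to this lead",
    "Draft a follow-up email to this lead",
    "Write a poem about Mondays",
]
print(reused_prompts(log))
```

Exact-match counting misses prompts that are reworded each time. It is a cheap first pass, and the conversations with your team fill in the rest.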

Step 2: Identify the High-Value Workflow

Pick one workflow that is repetitive, follows clear rules, and involves multiple steps. Good candidates include lead qualification and follow-up, report generation from multiple data sources, customer onboarding sequences, and document processing and extraction.
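You can turn the three criteria in this step into a rough scoring pass over candidate workflows. The weights, caps, 1-5 clarity scale, and sample workflows are all hypothetical. The goal is to rank candidates consistently, not to produce a precise number.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Workflow:
    name: str
    runs_per_week: int  # how repetitive it is
    rule_clarity: int   # 1 (judgement-heavy) to 5 (clear rules)
    steps: int          # how many hand-offs or systems it touches


def score(w: Workflow) -> float:
    """Higher means a better first agent candidate (illustrative weighting)."""
    repetition = min(w.runs_per_week, 50) / 50  # cap so one metric can't dominate
    clarity = (w.rule_clarity - 1) / 4
    multi_step = min(w.steps, 6) / 6
    return round(0.4 * repetition + 0.4 * clarity + 0.2 * multi_step, 2)


def rank(candidates: List[Workflow]) -> List[str]:
    return [w.name for w in sorted(candidates, key=score, reverse=True)]


candidates = [
    Workflow("board strategy memo", runs_per_week=1, rule_clarity=1, steps=3),
    Workflow("monthly sales report", runs_per_week=5, rule_clarity=5, steps=5),
    Workflow("lead follow-up", runs_per_week=40, rule_clarity=4, steps=4),
]
print(rank(candidates))
```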

Step 3: Build a Custom GPT or Workspace Agent First

Before investing in custom development, prototype with Workspace Agents or Claude Projects. This validates the workflow and reveals edge cases with little or no engineering cost.

Step 4: Move to the Agents SDK for Production

Once the workflow is proven, rebuild it using the Agents SDK with sandbox execution. This gives you control over costs, model selection, and infrastructure, so you can run it on your own terms.

Step 5: Layer in Multi-Model Architecture

As usage scales, introduce the tiered model approach. Use frontier models for planning, efficient models for execution, and local models for data-sensitive tasks.
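You can enforce the rule that data-sensitive work stays local in code, before any prompt leaves your network. This sketch uses two deliberately simple regular expressions as the sensitivity check: email addresses, and nine-digit numbers as a rough stand-in for identifiers like tax file numbers. A production system would need a proper PII classifier. The model names are placeholders.

```python
import re

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
NINE_DIGITS = re.compile(r"\b\d{3}\s?\d{3}\s?\d{3}\b")  # e.g. "123 456 789"


def looks_sensitive(text: str) -> bool:
    """Crude check: flags text containing an email address or a nine-digit number."""
    return bool(EMAIL.search(text) or NINE_DIGITS.search(text))


def choose_model(text: str, needs_planning: bool) -> str:
    """Sensitive data never goes to a cloud API, whatever the task."""
    if looks_sensitive(text):
        return "local-open-weight-model"  # runs on your own hardware
    if needs_planning:
        return "cloud-frontier-model"
    return "cloud-efficient-model"
```

The sensitivity check runs first, so even planning work on customer data stays local. Those tasks accept a less capable model in exchange for keeping the data in-house.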

Step 6: Connect Your Ecosystem

Integrate with your existing tools: CRM, email, calendar, project management, accounting software. The super agent's value multiplies when it can act across your entire tool stack.
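Connecting an agent to your tool stack often comes down to a registry. Each integration is a named function, and the agent's chosen action is dispatched by name. The two tools below are hypothetical stubs standing in for real CRM and calendar integrations. The JSON action format is also an assumption.

```python
import json
from typing import Any, Callable, Dict

TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the agent can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def find_contact(email: str) -> Dict[str, str]:
    # Hypothetical stub: a real version would query your CRM's API.
    return {"email": email, "owner": "Sam", "stage": "Proposal"}


@tool
def book_meeting(email: str, day: str) -> str:
    # Hypothetical stub: a real version would call your calendar API.
    return f"Meeting with {email} booked for {day}"


def dispatch(action_json: str) -> Any:
    """Run a model-chosen action such as {"tool": "...", "args": {...}}."""
    action = json.loads(action_json)
    name = action["tool"]
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}  # let the agent recover
    return TOOLS[name](**action["args"])
```

Returning an error instead of raising lets the agent see its mistake and pick a valid tool on the next turn.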

What Are the Risks of Building AI Agents on Closed Platforms?

Vendor lock-in is the biggest risk. When your entire agent infrastructure runs on one provider's platform, you are subject to their pricing changes, feature decisions, and availability. OpenAI's Workspace Agents are convenient today, but you have no guarantee that the pricing, features, or even the product will remain favourable.
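One practical hedge against lock-in is to keep provider-specific code behind a small interface of your own. Switching vendors then means writing one new adapter rather than rewriting the agent. The `ChatModel` protocol and both adapters below are hypothetical stubs. Real adapters would wrap each vendor's official client library.

```python
from typing import Dict, Protocol


class ChatModel(Protocol):
    def complete(self, prompt: str) -> str: ...


class HostedModel:
    """Stub adapter for a cloud provider (would wrap the vendor's SDK)."""
    def complete(self, prompt: str) -> str:
        return f"[hosted] {prompt}"


class LocalModel:
    """Stub adapter for a self-hosted open-source model."""
    def complete(self, prompt: str) -> str:
        return f"[local] {prompt}"


# Swapping providers becomes a configuration change, not a rewrite.
PROVIDERS: Dict[str, ChatModel] = {"hosted": HostedModel(), "local": LocalModel()}


def summarise_ticket(ticket: str, provider: str) -> str:
    model = PROVIDERS[provider]
    return model.complete(f"Summarise: {ticket}")
```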

Data privacy is another concern. Closed platforms process your data on their infrastructure. For Australian businesses handling customer information, financial records, or health data, this may conflict with privacy obligations under the Privacy Act 1988 and the Australian Privacy Principles.

Capability ceilings are real. Closed platforms optimise for the average user. If your agent needs custom behaviour, non-standard integrations, or unusual model configurations, you will hit walls. The Agents SDK and open-source frameworks exist precisely because no single provider can serve every use case.

The recommended approach: use closed platforms for experimentation and prototyping, but build production systems on open infrastructure you control.

Key Takeaways

- Super agents are AI systems with sandbox execution capabilities that can write, run, and iterate on code autonomously

- The evolution runs from prompts to custom GPTs to agent frameworks to sandbox-equipped super agents

- OpenAI's April 2026 Agents SDK update brought sandbox execution to the mainstream, making super agents accessible to any development team

- Workspace Agents are convenient for team automation but come with vendor lock-in and capability ceilings

- Frontier model costs can become unsustainable at scale, as Uber's experience shows

- Multi-model architecture using frontier models for thinking and efficient models for execution can reduce costs by 60-80%

- Australian businesses are well positioned due to high labour costs, data sovereignty needs, and growing adoption rates

- Start with one workflow, prototype on a closed platform, then migrate to the Agents SDK for production

Frequently Asked Questions

What makes an AI agent a "super agent"?

A super agent can spin up its own sandbox environments, write and execute code, manage multi-step workflows spanning hours or days, connect to external tools and APIs, and recover from errors autonomously. The sandbox capability is the key differentiator. Without it, an agent is limited to text manipulation and API calls.

How much does it cost to build a custom AI agent?

For Australian businesses, expect $3,000-15,000 for initial development of a production-grade agent, depending on complexity. Monthly running costs range from $200-2,000 depending on model usage and volume. Multi-model architectures can reduce ongoing costs significantly compared with running everything on frontier models.

Can small businesses use AI agents effectively?

Yes. Businesses with 11-200 employees are arguably the sweet spot. They have enough repetitive processes to benefit from automation, but not enough staff to justify hiring dedicated AI engineers. Pre-built agent frameworks and Workspace Agents keep the barrier to entry low. A single well-designed agent handling lead follow-up or report generation could save 10-20 hours per week.

Is my data safe with AI agents?

It depends on your architecture. Closed platforms like ChatGPT Workspace Agents process data on the provider's infrastructure. For sensitive data, build agents using the Agents SDK or open-source frameworks on your own infrastructure. Australian businesses subject to the Privacy Act should assess data residency requirements before choosing a platform.

What is the difference between the OpenAI Agents SDK and Workspace Agents?

Workspace Agents are a product within ChatGPT designed for non-technical business users. They connect to tools like Slack and Salesforce through a simple interface. The Agents SDK is a developer framework for building custom agents with full control over models, infrastructure, sandbox execution, and integrations. Workspace Agents are the easy on-ramp; the Agents SDK is the production highway.

How do I start building a super agent?

Start by documenting your team's repetitive AI usage patterns. Pick one high-value workflow. Prototype it with Workspace Agents or a custom GPT. Once validated, rebuild it using the OpenAI Agents SDK with sandbox execution. Layer in multi-model architecture as usage scales. The key is starting small, proving value, then expanding.