Last Updated: May 2026

The biggest myth in AI right now is that the model is what matters most. It is not. A decent model with a great harness consistently beats a great model with a bad harness. The discipline of building, tightening, and maintaining that scaffolding has a name: harness engineering. Here is what it is, why it matters, and how Australian businesses can use it to build AI agents that actually work.

What Is Harness Engineering?

Harness engineering is the discipline of designing and maintaining everything wrapped around an AI model that turns it from a text generator into a working agent. The term was coined by Vivek Trivedy and popularised by Google engineering leader Addy Osmani in May 2026. The core equation is simple:

Agent = Model + Harness. If you are not the model, you are the harness.

A raw model is not an agent. It only becomes one when a harness provides it with state, tool execution, feedback loops, memory, and enforceable constraints. The harness includes system prompts, tool definitions, context management policies, sandboxed execution environments, subagent orchestration, hooks for deterministic checks, and observability layers that track every decision the agent makes.
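
To make the equation concrete, here is a minimal sketch of the loop a harness runs around a model. It assumes a hypothetical `model` callable that returns either a tool request or a final answer; `Action`, `run_agent`, and the `tools` dictionary are illustrative names, not the API of any particular framework.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Action:
    """What the (hypothetical) model returns on each turn."""
    tool: Optional[str] = None   # name of a tool to call, or None when finished
    argument: str = ""           # input passed to the tool
    answer: str = ""             # final answer, used when tool is None


def run_agent(
    model: Callable[[list[str]], Action],
    tools: dict[str, Callable[[str], str]],
    task: str,
    max_steps: int = 10,
) -> str:
    """The harness: holds state, executes tools, and feeds results back."""
    history = [f"task: {task}"]            # state the model does not keep itself
    for _ in range(max_steps):             # constraint: a bounded number of steps
        action = model(history)
        if action.tool is None:
            return action.answer
        if action.tool not in tools:       # constraint: only registered tools run
            history.append(f"error: unknown tool {action.tool!r}")
            continue
        result = tools[action.tool](action.argument)                     # tool execution
        history.append(f"{action.tool}({action.argument}) -> {result}")  # feedback loop
    return "stopped: step limit reached"
```

Everything in `run_agent` other than the call to `model` is harness: the history, the tool lookup, the step limit, and the error fed back to the model.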

The critical insight: the model is merely one input into a running agent. The rest is the scaffolding. And increasingly, the most interesting engineering work is not in selecting the model, but in designing that scaffolding.

For Australian businesses evaluating AI solutions, this reframes the entire buying decision. The question is not "which model does this use?" It is "what harness wraps around it?"

Why a Great Harness Beats a Great Model

Performance benchmarks consistently show that a leading model running inside an off-the-shelf framework scores drastically lower than the exact same model running in a custom, highly tuned harness. Moving a model into an environment with better codebase tools, tighter prompts, and sharper feedback loops can unlock capabilities the original setup left behind.

The gap between what today's models can theoretically do and what you actually see them doing is largely a harness gap.

Consider the practical difference:

- Great model, bad harness: The agent gets lost in multi-step tasks, ignores conventions, runs destructive commands, hallucinates file paths, and stops early when context fills up

- Decent model, great harness: The agent follows a plan file, respects rules encoded from past failures, runs in a sandbox, self-verifies with tests, and gets intercepted if it tries to exit before completion

The same model. Wildly different outcomes. The harness is the difference.

This is why platforms like Claude Code, Cursor, Codex, Aider, and Cline behave so differently despite often using the same underlying models. They are all harnesses. The model might be identical, but the behaviour you experience is dominated by the harness.


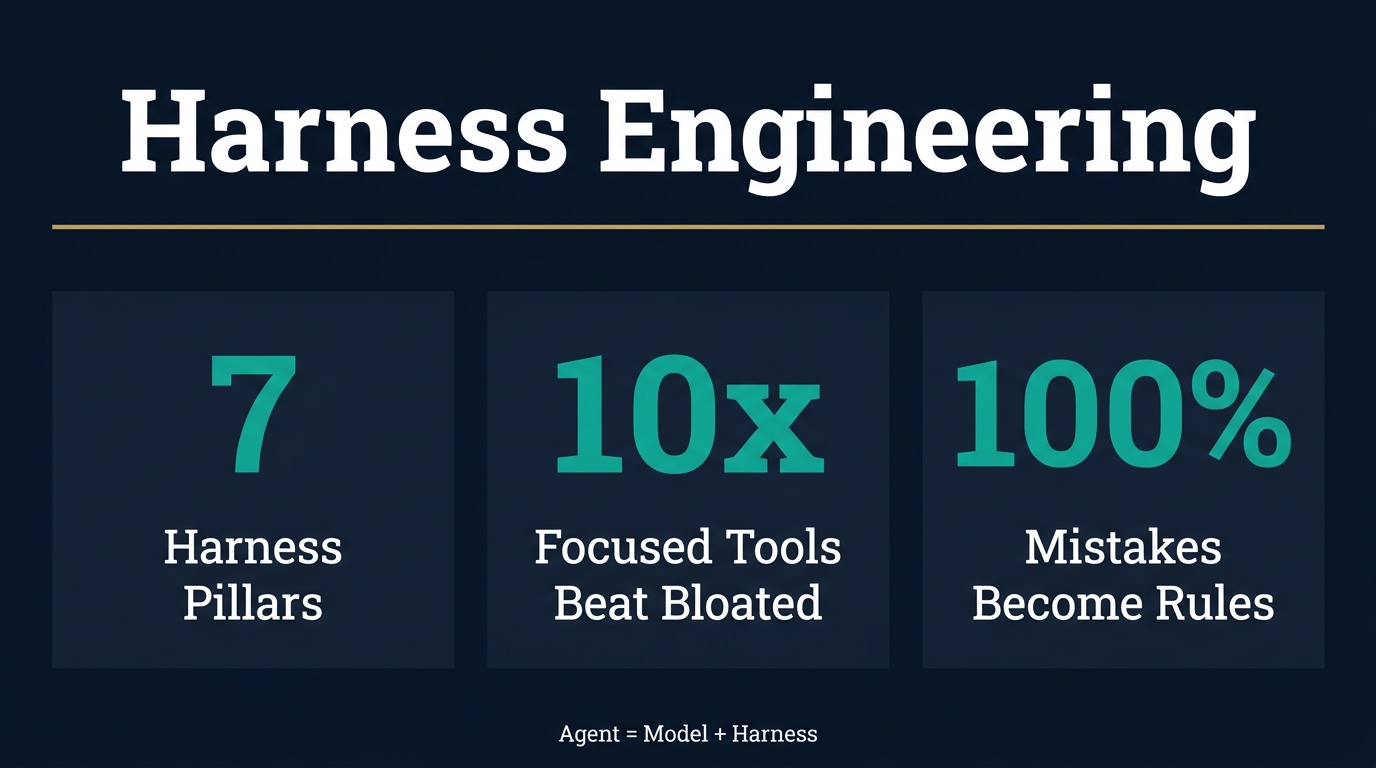

The Seven Pillars of a Harness

Every production-ready agent harness needs seven components. Each has a distinct job. If you cannot name the specific behaviour a component exists to deliver, it should be removed.

1. System Prompts and Rule Files

A flat markdown file at the root of a project (like AGENTS.md or CLAUDE.md) is still the highest-leverage configuration point in any agent setup. But it must be treated like a pilot's checklist, not a style guide. Keep it short. Ensure every rule is earned through a past failure.

The best system prompts do not describe what the agent should do in general terms. They encode specific constraints born from specific mistakes. "Never comment out tests. Delete or fix them." "Always run the test suite before committing." "Never use em dashes in client emails." Each rule exists because the agent once violated it.
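
One way to keep every rule earned is to store each rule next to the failure that produced it and generate the rule file from that record. The sketch below assumes a hypothetical `Rule` structure and a ten-rule cap; neither comes from a specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    text: str          # the constraint the agent must follow
    learned_from: str  # the past failure that earned this rule


def render_rule_file(rules: list[Rule], max_rules: int = 10) -> str:
    """Render a short, checklist-style rule file; refuse unearned or excess rules."""
    if len(rules) > max_rules:
        raise ValueError(f"{len(rules)} rules exceeds the limit of {max_rules}; keep it short")
    for rule in rules:
        if not rule.learned_from.strip():
            raise ValueError(f"rule has no recorded failure: {rule.text!r}")
    lines = ["# Rules"]
    lines += [f"- {r.text} (learned from: {r.learned_from})" for r in rules]
    return "\n".join(lines) + "\n"
```

A rule with an empty `learned_from` is rejected, which forces the question "what failure does this rule prevent?" before it reaches the agent.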

2. Tools and Skills

Tools are how the agent interacts with the world. The harness defines which tools exist, what they do, and how the agent discovers them. Ten highly focused tools will always outperform fifty overlapping ones.

This is also where security risks enter. Tool descriptions populate the agent's prompt. Malicious or sloppy external integrations, such as unverified MCP servers, can inject bad instructions into the agent before it even starts working. Every tool in your harness should be audited and purposeful.
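
A small registry can enforce both points: a cap on the number of tools, and a review gate before any description reaches the prompt. This is a sketch under assumptions; `Tool`, `ToolRegistry`, and the `audited` flag are hypothetical names, and the limit of ten simply echoes the guideline above.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Tool:
    name: str
    description: str           # this text ends up in the agent's prompt
    run: Callable[[str], str]
    audited: bool = False      # set True only after a human has reviewed the tool


class ToolRegistry:
    def __init__(self, max_tools: int = 10) -> None:
        self._tools: dict[str, Tool] = {}
        self._max_tools = max_tools

    def register(self, tool: Tool) -> None:
        if not tool.audited:
            raise PermissionError(f"tool {tool.name!r} has not been audited")
        if tool.name in self._tools:
            raise ValueError(f"duplicate tool name {tool.name!r}")
        if len(self._tools) >= self._max_tools:
            raise ValueError("tool limit reached; remove a tool before adding another")
        self._tools[tool.name] = tool

    def prompt_section(self) -> str:
        """Only descriptions of registered (and therefore audited) tools reach the prompt."""
        return "\n".join(f"{t.name}: {t.description}" for t in self._tools.values())
```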

3. Filesystem and Version Control

The filesystem is foundational. Models can only operate on what fits in their context window. A filesystem provides a workspace to read data, a place to offload intermediate work, and a surface for multiple agents to coordinate.

Adding Git provides free versioning. The agent can track progress, branch experiments, and roll back errors. For any business deploying agents that modify code, documents, or configurations, Git-backed filesystems are non-negotiable.

4. Sandboxes and Safe Execution

Bash access gives agents general-purpose problem-solving ability, but only if it runs safely. Sandboxes provide isolated environments where agents can run code, inspect files, and verify work without risking the host machine.

A good sandbox ships with strong defaults: pre-installed language runtimes, test CLIs, and headless browsers. This allows the agent to observe its own work and close the self-verification loop. For businesses handling client data, sandboxes prevent agents from accidentally modifying production systems.
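
The shape of the idea can be sketched with the standard library alone: run the agent's code in a throwaway directory, with a time limit, and hand the output back. The function name `run_in_scratch_dir` is hypothetical, and a temporary directory is not a security boundary; a production sandbox would typically rely on containers or virtual machines instead.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_in_scratch_dir(code: str, timeout_s: float = 5.0) -> tuple[int, str]:
    """Run a Python snippet in a throwaway directory with a time limit.

    This isolates the working directory only; it is NOT a security boundary.
    """
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "snippet.py"
        script.write_text(code, encoding="utf-8")
        try:
            proc = subprocess.run(
                # -I: isolated mode, ignores PYTHON* environment variables
                # and the user site directory
                [sys.executable, "-I", str(script)],
                cwd=workdir,
                capture_output=True,
                text=True,
                timeout=timeout_s,
            )
        except subprocess.TimeoutExpired:
            # 124 mirrors the exit status of the coreutils `timeout` command
            return 124, f"timed out after {timeout_s}s"
        return proc.returncode, proc.stdout + proc.stderr
```

Returning both the exit code and the combined output gives the agent what it needs to observe its own work.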

5. Memory and Continual Learning

Models have no knowledge beyond their training weights and current context. Harnesses bridge this gap using memory files that inject knowledge into every session. These files accumulate over time, encoding domain expertise, project conventions, and lessons learned.

For real-time information like new library versions, live data, or current events, web search and API tools are baked directly into the harness. The combination of persistent memory and live retrieval makes the agent more capable with every session.
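
The persistent half of that combination can be as simple as a file that is appended to when something is learned and read at the start of every session. The sketch below assumes a plain-text memory file; `remember` and `build_session_prompt` are hypothetical helper names.

```python
from pathlib import Path


def remember(memory_path: Path, lesson: str) -> None:
    """Append one lesson to the memory file, skipping exact duplicates."""
    existing = memory_path.read_text(encoding="utf-8").splitlines() if memory_path.exists() else []
    entry = f"- {lesson.strip()}"
    if entry not in existing:
        with memory_path.open("a", encoding="utf-8") as f:
            f.write(entry + "\n")


def build_session_prompt(memory_path: Path, task: str) -> str:
    """Inject accumulated memory ahead of the task at the start of every session."""
    memory = memory_path.read_text(encoding="utf-8") if memory_path.exists() else ""
    return f"Known context:\n{memory}\nTask: {task}"
```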

6. Context Management and Compaction

Models degrade in reasoning as their context windows fill up. Harnesses manage this scarcity using three primary techniques:

- Compaction: Intelligently summarising and offloading older context to prevent errors while preserving key information

- Tool-call offloading: Storing massive tool outputs (like 2,000-line logs) in the filesystem while keeping only essential headers and footers in context

- Progressive disclosure: Revealing instructions and tools only when a task explicitly requires them, rather than loading everything at startup

Without context management, agents working on long tasks eventually degrade in quality or crash entirely. This is one of the most technically challenging aspects of harness engineering and the one most teams overlook.
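
Tool-call offloading is the easiest of the three techniques to illustrate. In this sketch the full output goes to disk and only the first and last lines stay in context, with a pointer to the file. The function name and the default of 20 lines at each end are assumptions for illustration.

```python
from pathlib import Path


def offload_tool_output(output: str, store_dir: Path, call_id: str,
                        head: int = 20, tail: int = 20) -> str:
    """Save the full output to disk and return a short excerpt for the context window."""
    lines = output.splitlines()
    if len(lines) <= head + tail:
        return output                       # small outputs stay in context unchanged
    store_dir.mkdir(parents=True, exist_ok=True)
    path = store_dir / f"{call_id}.log"
    path.write_text(output, encoding="utf-8")
    omitted = len(lines) - head - tail
    marker = f"... {omitted} lines omitted; full output in {path} ..."
    # Slice from an explicit index so that tail=0 keeps no trailing lines
    excerpt = lines[:head] + [marker] + lines[len(lines) - tail:]
    return "\n".join(excerpt)
```

Because the marker names the file, the agent can still read the full log through its filesystem tools if the excerpt is not enough.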

7. Hooks and Enforcement Layers

Hooks bridge the gap between requesting an action and enforcing it. They run at specific lifecycle points: before a tool call, after a file edit, or before a commit. Hooks block destructive commands, enforce auto-formatting to save tokens, and run test suites.

The rule: success is silent, and failures are verbose. If a typecheck passes, the agent hears nothing. If it fails, the error is injected directly back into the loop for self-correction.

Hooks are what turn a helpful assistant into a reliable system. They are the enforcement layer that ensures the agent cannot violate the rules, even when the model wants to.
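
A rough sketch of two such hooks follows: one that runs before a shell command and can block it, and one that runs after an edit and stays silent on success. The deny-list patterns are illustrative only, and `pre_tool_call` and `post_edit` are hypothetical names rather than the hook API of any specific harness.

```python
import re
from typing import Callable, Optional

# Illustrative patterns only; a real deny-list would come from your own failure history.
DESTRUCTIVE_PATTERNS = [
    re.compile(r"\brm\s+-[a-zA-Z]*r[a-zA-Z]*f|\brm\s+-[a-zA-Z]*f[a-zA-Z]*r"),
    re.compile(r"\bgit\s+push\s+.*--force\b"),
    re.compile(r"\bdrop\s+table\b", re.IGNORECASE),
]


def pre_tool_call(command: str) -> Optional[str]:
    """Return None to allow the command, or an error message to block it."""
    for pattern in DESTRUCTIVE_PATTERNS:
        if pattern.search(command):
            return f"blocked by hook: {command!r} matches {pattern.pattern!r}"
    return None


def post_edit(check: Callable[[], tuple[bool, str]]) -> Optional[str]:
    """Run a check after an edit. Success is silent; failure returns the full output."""
    ok, output = check()
    return None if ok else output
```

In both hooks a return value of `None` adds nothing to the agent's context, while any string is injected back into the loop for self-correction.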

The Ratchet: Every Mistake Becomes a Rule

The most vital habit in harness engineering is treating agent mistakes as permanent signals, not one-off flukes to retry and forget.

If an agent ships a pull request with a commented-out test that gets merged by accident, that is a signal. The next iteration of the rule file must state: "Never comment out tests. Delete or fix them." The next pre-commit hook should automatically flag skipped tests in the diff. The reviewer subagent must be updated to block commented-out tests.

Every line in a good system prompt should trace back to a specific, historical failure.

Constraints should only be added when you observe a real failure, and removed only when a capable model renders them redundant. Because of this, harness engineering is a discipline rather than a one-size-fits-all framework. The right harness for your organisation is entirely shaped by its unique failure history.

This is the ratchet principle: the harness only tightens, never loosens, unless a model upgrade makes a constraint unnecessary. Over time, this creates a system that becomes more reliable with every mistake it makes.

Working Backwards from Desired Behaviour

The most effective way to design a harness is to start with the desired behaviour and build the component that delivers it.

Examples of working backwards:

Behaviour you want: The agent never ships broken code

Harness component: A pre-commit hook that runs the full test suite and blocks commits on failure

Behaviour you want: The agent follows project-specific conventions

Harness component: An AGENTS.md file at the project root encoding every convention learned from past failures

Behaviour you want: The agent completes long tasks without stopping early

Harness component: A loop interceptor that catches the agent's attempt to exit and forces continuation against a completion goal

Behaviour you want: The agent does not grade its own work positively

Harness component: Separate generation and evaluation into distinct agents, preventing the inherent bias models have when reviewing their own output

Behaviour you want: The agent handles multi-step work predictably

Harness component: A planning phase that forces decomposition into a step-by-step plan file, with self-verification hooks after each step

Every piece of the harness must have a distinct job. If you cannot name the specific behaviour a component exists to deliver, remove it.
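
As one example from the list above, a loop interceptor can be sketched in a few lines. It assumes a hypothetical `agent_step` callable that does some work and then tries to exit, and an `is_complete` check that lives outside the agent (for instance, a test run).

```python
from typing import Callable


def run_until_complete(
    agent_step: Callable[[str], str],
    is_complete: Callable[[], bool],
    task: str,
    max_attempts: int = 5,
) -> tuple[bool, int]:
    """Intercept early exits: re-prompt the agent until an external check passes."""
    prompt = task
    for attempt in range(1, max_attempts + 1):
        agent_step(prompt)            # the agent works, then tries to exit
        if is_complete():             # completion is judged outside the agent
            return True, attempt
        prompt = f"{task}\nThe completion check has not passed yet. Continue."
    return False, max_attempts
```

The attempt cap matters: without it, an interceptor that can never be satisfied would keep the agent running indefinitely.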

Harness Engineering for Australian Businesses

The Practical Starting Point

You do not need to be a Silicon Valley startup to apply harness engineering. The principles work at any scale. Here is how to start:

Step 1: Pick your harness framework. Do not build orchestration from scratch. Use an existing harness (Claude Code, Cursor, OpenClaw, Codex) that provides the loop, tools, context management, hooks, and sandboxes out of the box.

Step 2: Write your first rule file. Create an AGENTS.md or equivalent at the root of your project. Start with 5-10 rules, each tracing back to a real mistake your team or agent has made. Keep it short. A pilot's checklist, not a style guide.

Step 3: Add one hook. Pick the most common failure mode in your workflow and add a single hook that prevents it. A pre-commit typecheck. A lint rule. A test runner. One hook, one failure mode.

Step 4: Run the agent and observe. Every mistake is a signal. Each time the agent fails, ask: "Can I add a rule, hook, or tool that makes this failure impossible next time?"

Step 5: Tighten the ratchet. Over weeks and months, your harness accumulates constraints born from real failures. The agent becomes more reliable not because the model improved, but because the scaffolding around it got tighter.

What This Looks Like for Growing Businesses

For a construction company using AI to process site reports, the harness might include:

- A rule file encoding report format conventions and mandatory fields

- A hook that validates every generated report against a schema before submission

- A sandbox that isolates report processing from the production database

- A memory file accumulating site-specific terminology and project codes over time
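
The validation hook in that list could start as simply as the sketch below. The field names are hypothetical placeholders; the real list of mandatory fields would come from your own report conventions.

```python
# Field names are hypothetical; substitute the mandatory fields from your own rule file.
REQUIRED_FIELDS: dict[str, type] = {
    "site_id": str,
    "report_date": str,
    "author": str,
    "incidents": list,
}


def validate_report(report: dict) -> list[str]:
    """Return a list of problems; an empty list means the report may be submitted."""
    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in report:
            problems.append(f"missing field: {name}")
        elif not isinstance(report[name], expected):
            problems.append(
                f"{name} should be {expected.__name__}, got {type(report[name]).__name__}"
            )
    return problems
```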

For an allied health practice using AI to draft treatment plans, the harness might include:

- A rule file encoding clinical documentation standards and privacy requirements

- A hook that flags any generated text containing patient identifiers for human review

- A context management policy that strips sensitive data from long-term memory

- A split architecture where one agent generates and a separate agent evaluates
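
A first version of the identifier-flagging hook might be a handful of regular expressions, as sketched below. The patterns are illustrative assumptions, will miss many identifiers, and are no substitute for a reviewed de-identification process; `flag_for_review` is a hypothetical helper name.

```python
import re

# Illustrative patterns only; tune and extend them against real, reviewed examples.
IDENTIFIER_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "date": re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b"),
    "long_number": re.compile(r"\b\d{8,}\b"),
}


def flag_for_review(text: str) -> dict[str, list[str]]:
    """Return matches by pattern name; any non-empty result goes to a human."""
    hits = {name: pattern.findall(text) for name, pattern in IDENTIFIER_PATTERNS.items()}
    return {name: found for name, found in hits.items() if found}
```

The hook errs on the side of flagging: a false positive costs a moment of human review, while a false negative may expose patient data.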

For a professional services firm using AI agents for client research and proposal drafting, the harness might include:

- A rule file encoding the firm's writing style, methodology frameworks, and compliance requirements

- A hook that runs every proposal through a quality checklist before client delivery

- Subagent orchestration where one agent researches, one drafts, and one reviews

- A memory file that accumulates client preferences and past feedback over time
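
The research, draft, and review split can be sketched as three separate callables wired together by the harness. `Agent` is a hypothetical type for anything that takes a prompt and returns text, and the `APPROVE` verdict is an assumed convention, not a standard.

```python
from typing import Callable

Agent = Callable[[str], str]   # hypothetical: takes a prompt, returns text


def research_draft_review(
    researcher: Agent,
    drafter: Agent,
    reviewer: Agent,
    brief: str,
    max_revisions: int = 2,
) -> tuple[str, bool]:
    """Run three separate agents; the drafter never grades its own output."""
    notes = researcher(brief)
    draft = drafter(f"Brief: {brief}\nResearch notes: {notes}")
    for _ in range(max_revisions):
        verdict = reviewer(draft)
        if verdict.strip().upper() == "APPROVE":
            return draft, True
        # Anything other than APPROVE is treated as feedback for the drafter.
        draft = drafter(f"Revise this draft.\nDraft: {draft}\nFeedback: {verdict}")
    # Revisions are exhausted; report whether the final draft passes review.
    return draft, reviewer(draft).strip().upper() == "APPROVE"
```

Returning the approval flag alongside the draft lets the calling code decide whether an unapproved proposal goes to a human instead of the client.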


Harnesses Do Not Shrink, They Move

As models improve, the need for a harness does not disappear. It shifts.

It is tempting to assume better models make scaffolding obsolete. Recent model upgrades drastically reduced the need for "context-anxiety" mitigations, for example. But as the floor rises, so does the ceiling. Tasks that were previously unreachable are now in play, bringing entirely new failure modes.

Every component in a harness encodes an assumption about what the model cannot do on its own. When the model improves, outdated scaffolding should be removed, and new scaffolding must be built to reach the next horizon.

This is why harness engineering is a continuous discipline, not a one-time setup. The best harnesses evolve with every model upgrade and every new task attempted.

Harness-as-a-Service: Where the Industry Is Going

The industry is shifting from building on LLM APIs (which provide completions) to building on Harness APIs (which provide a runtime). SDKs now offer the loop, tools, context management, hooks, and sandboxes right out of the box.

Instead of building orchestration from scratch, the modern default is to select a harness framework, configure its core pillars, and focus purely on domain-specific prompt and tool design. This is what makes troubleshooting scalable: you are tuning a well-factored configuration surface rather than reinventing the entire agent architecture.

If you look at the top coding agents today, they look more like each other than their underlying models do. The models differ, but the harness patterns are converging. The industry is rapidly identifying the load-bearing scaffolding required to turn generative text into reliable, production-grade work.

Frequently Asked Questions About Harness Engineering

What is harness engineering in AI?

Harness engineering is the discipline of designing and maintaining everything wrapped around an AI model that turns it into a working agent. This includes system prompts, tool definitions, context management, sandboxed execution, subagent orchestration, enforcement hooks, and observability. The core principle: Agent = Model + Harness. The harness matters as much as or more than the model.

Why does the harness matter more than the model?

Performance benchmarks show a leading model in an off-the-shelf framework scores drastically lower than the same model in a custom, tuned harness. The gap between what models can theoretically do and what they actually do is largely a harness gap. A decent model with a great harness beats a great model with a bad harness consistently.

What is the ratchet principle in harness engineering?

The ratchet principle means treating every agent mistake as a permanent signal. When an agent fails, you add a rule, hook, or tool that makes that exact failure impossible next time. Constraints are only added when you observe a real failure and only removed when a model upgrade renders them unnecessary. Every line in a good system prompt traces back to a specific historical failure.

How do I start building an AI agent harness?

Start with three things: pick an existing harness framework (do not build from scratch), write a short rule file encoding 5-10 rules from past failures, and add one enforcement hook targeting your most common failure mode. Then run the agent, observe mistakes, and tighten the ratchet over time.

What tools and components does an AI agent harness need?

A production harness needs seven components: system prompts and rule files, tools and skills, filesystem and version control, sandboxes for safe execution, memory and continual learning, context management and compaction, and hooks for enforcement. Each component must have a distinct job tied to a specific desired behaviour.