Last Updated: May 2026

ISO/IEC 42001 is the world's first international standard for AI management systems. Published in December 2023, it provides a structured framework for organisations developing, providing, or using AI to govern risks, ensure accountability, and build trust. For organisations deploying AI agents as "digital employees," this kind of governance is not optional in practice, even though certification itself is voluntary. It is the difference between responsible innovation and reckless deployment.

What Is ISO 42001 and Why Does It Matter in 2026?

ISO/IEC 42001:2023 is the first globally recognised standard that defines how organisations should establish, implement, maintain, and continually improve an AI Management System (AIMS). Modelled on the same Annex SL structure as ISO 27001 (information security) and ISO 9001 (quality management), it uses the Plan-Do-Check-Act cycle to manage AI risks across the entire lifecycle, from data sourcing and model development through deployment, monitoring, and decommissioning.

The standard matters now more than ever because AI agents are no longer simple chatbots. They are autonomous systems that make decisions, access company data, interact with customers, and execute workflows independently. These "agentic employees" introduce risks that traditional IT governance was never designed to handle: prompt injection attacks, data exfiltration through tool use, biased decision-making at scale, and accountability gaps when an agent causes harm.

For Australian organisations specifically, ISO 42001 provides a governance backbone that supports compliance with the Privacy Act 1988, alignment with Australia's Voluntary AI Safety Standard, and readiness for international frameworks like the EU AI Act. Commonly cited estimates suggest that roughly 60-70% of EU AI Act documentation requirements map to ISO 42001 clauses and Annex A controls, which makes certification a practical head start on multi-jurisdictional compliance rather than a substitute for it.


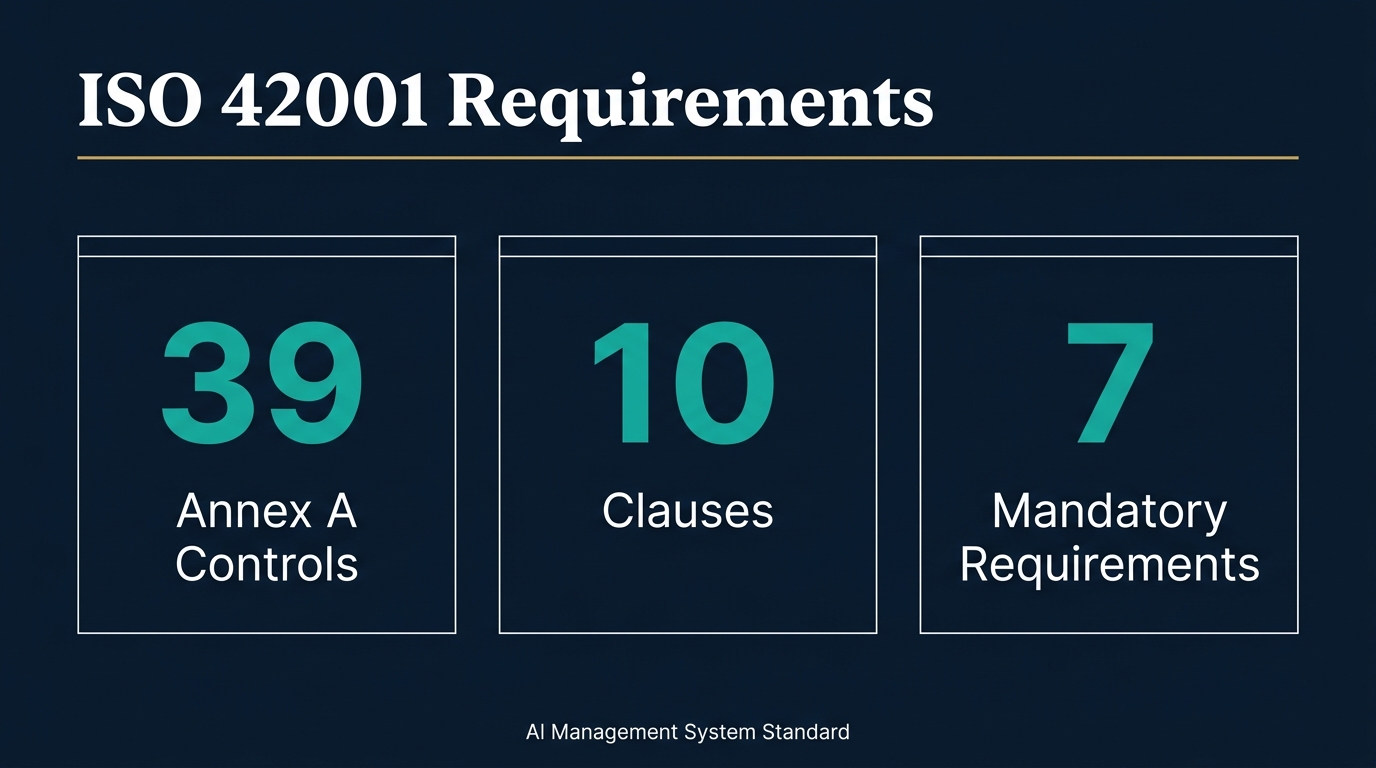

The 10 Clauses of ISO 42001 Explained

ISO 42001 is structured across 10 clauses. Clauses 1-3 provide introductory context and definitions. Clauses 4-10 contain the mandatory, auditable requirements that every organisation must address within their defined AIMS scope.

Clause 4: Context of the Organisation

What it requires: Organisations must analyse both internal and external factors that influence their AI activities, identify all relevant stakeholders (customers, regulators, employees, communities), define the scope of their AIMS, and document how all processes work together.

Key sub-clauses:

- 4.1 Understanding internal and external context, including your role in developing or using AI, legal and ethical factors, and emerging risks

- 4.2 Identifying stakeholder needs and expectations, deciding which ones the AIMS will address

- 4.3 Defining and documenting what parts of your operations the AIMS covers

- 4.4 Creating, running, improving, and documenting the AIMS itself

For agentic employees: This is where you define whether your AI agents are within scope. Most organisations deploying customer-facing agents, automated decision-makers, or data-processing agents should include them in scope. If an agent can affect a customer outcome or access personal data, it needs governance.
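The scoping rule of thumb above can be written down as a simple check over an agent inventory. This is a minimal Python sketch, not something the standard prescribes; the `Agent` fields, the `in_aims_scope` function, and the example agent names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    """Hypothetical inventory record for one AI agent."""
    name: str
    affects_customer_outcomes: bool
    accesses_personal_data: bool


def in_aims_scope(agent: Agent) -> bool:
    """Rule of thumb from the text: either condition puts the agent in scope."""
    return agent.affects_customer_outcomes or agent.accesses_personal_data


inventory = [
    Agent("support-chat-agent", affects_customer_outcomes=True, accesses_personal_data=True),
    Agent("log-summariser", affects_customer_outcomes=False, accesses_personal_data=False),
]
in_scope = [a.name for a in inventory if in_aims_scope(a)]
print(in_scope)
```

A real scoping decision would also weigh stakeholder expectations (4.2) and legal factors (4.1), so treat this as a first-pass filter only.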

Clause 5: Leadership

What it requires: Top management must actively lead the AIMS, not delegate it to IT. This includes aligning AI strategy with business objectives, approving a formal AI Policy, assigning clear roles and responsibilities, and ensuring accountability at the executive level.

Key sub-clauses:

- 5.1 Leadership and commitment: executives must provide resources, promote responsible AI practices, and drive continual improvement

- 5.2 AI Policy: a document established and approved by top management, covering responsible AI commitments, compliance obligations, ethical principles, and objectives

- 5.3 Roles, responsibilities and authorities: clear assignment of who manages the AIMS and who reports on performance

For agentic employees: This is critical. When an AI agent makes a decision that harms a customer or breaches privacy, the question "who is responsible?" must have a clear answer. Clause 5 forces organisations to define accountability before deployment, not after something goes wrong. The AI Policy must explicitly address autonomous agent behaviour, human oversight requirements, and escalation procedures.

Clause 6: Planning

What it requires: Organisations must identify AI-specific risks and opportunities, conduct formal risk assessments, develop risk treatment plans, assess the impact of AI systems on people and society, set measurable AI objectives, and plan any changes to the AIMS carefully.

Key sub-clauses:

- 6.1.1 General risk and opportunity management, with documented actions

- 6.1.2 AI risk assessment: a consistent process identifying what could go wrong, severity, likelihood, and which risks need treatment

- 6.1.3 AI risk treatment: choosing controls from Annex A, documenting decisions, getting management approval

- 6.1.4 AI system impact assessment: evaluating how AI affects individuals and society, considering both use and misuse

- 6.2 Setting clear, measurable AI objectives aligned with the AI Policy

- 6.3 Planning changes to the AIMS in a controlled manner

For agentic employees: AI agents represent some of the highest-risk AI deployments because they operate autonomously. Risk assessment must cover prompt injection (where an attacker manipulates agent behaviour), tool abuse (where an agent executes harmful actions through integrated tools), data exfiltration (where an agent leaks sensitive information), and cascading errors (where one agent's mistake propagates through connected systems). Impact assessment must consider what happens when an agent makes a wrong decision about a real person.
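One common way to make the 6.1.2 process consistent is a scored risk register. The sketch below assumes a 1-5 likelihood and severity scale and a treatment threshold of 12; the standard does not mandate these values, and the scores assigned to each risk are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain), assumed scale
    severity: int    # 1 (negligible) to 5 (severe), assumed scale

    @property
    def score(self) -> int:
        return self.likelihood * self.severity


TREATMENT_THRESHOLD = 12  # assumed risk appetite; set by your own methodology

# Illustrative scores for the agent risks named in the text.
register = [
    Risk("prompt injection", likelihood=4, severity=4),
    Risk("tool abuse", likelihood=3, severity=5),
    Risk("data exfiltration", likelihood=2, severity=5),
    Risk("cascading errors", likelihood=3, severity=3),
]

# Risks at or above the threshold need a documented treatment (6.1.3).
needs_treatment = sorted(
    (r for r in register if r.score >= TREATMENT_THRESHOLD),
    key=lambda r: r.score,
    reverse=True,
)
for r in needs_treatment:
    print(f"{r.name}: score {r.score}")
```

Risks below the threshold are not ignored; they are accepted with a recorded rationale and revisited at the next reassessment.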

Clause 7: Support

What it requires: Organisations must ensure they have adequate resources, competent personnel, organisation-wide awareness, clear communication channels, and properly managed documentation to support the AIMS.

Key sub-clauses:

- 7.1 Resources: people, tools, financial investment, and infrastructure

- 7.2 Competence: everyone involved in AI must have appropriate skills and knowledge, with records kept

- 7.3 Awareness: all personnel must understand the AI Policy and consequences of non-compliance

- 7.4 Communication: defining what, when, how, and with whom AI-related information is shared

- 7.5 Documented information: creating, managing, and protecting all AIMS documentation

For agentic employees: Competence requirements (7.2) mean your team needs AI literacy, not just technical skills. The people building and deploying agents need to understand responsible AI practices, risk management, and the specific threats agents face (prompt injection, adversarial inputs, data poisoning). Awareness requirements (7.3) extend to every employee who interacts with or is affected by AI agents.

Clause 8: Operation

What it requires: This is the operational core. Organisations must plan and control all AI-related processes, implement risk treatments, and repeat risk and impact assessments at planned intervals or when significant changes occur. Lifecycle management, data governance, and user documentation are put into practice here through the Annex A controls selected during planning (A.6, A.7, and A.8).

Key sub-clauses:

- 8.1 Operational planning and control: managing all processes, monitoring controls, managing changes, and controlling externally provided processes

- 8.2 AI risk assessment: regular reassessment, especially after major changes

- 8.3 AI risk treatment: implementing and reviewing the risk treatment plan

- 8.4 AI system impact assessment: carrying out impact assessments at planned intervals or when significant changes are proposed, and keeping the results as documented information

For agentic employees: Lifecycle control (Annex A.6, operated under 8.1) is especially important. Every AI agent should have a documented lifecycle from design through retirement. This includes design specifications (what the agent should and should not do), training data provenance, testing records (including adversarial testing), deployment approvals, ongoing monitoring logs, and decommissioning procedures. Data management (Annex A.7) becomes critical when agents access customer databases, financial records, or health information.

Clause 9: Performance Evaluation

What it requires: Organisations must monitor and measure AI system performance, conduct regular internal audits, and hold management reviews to evaluate AIMS effectiveness.

Key sub-clauses:

- 9.1 Monitoring, measurement, analysis and evaluation of AI systems

- 9.2 Internal audit: regular audits of the AIMS by competent auditors

- 9.3 Management review: top management reviews AIMS performance, suitability, and effectiveness

For agentic employees: Monitoring (9.1) means tracking agent performance in real time: accuracy rates, error frequencies, escalation rates, compliance with guardrails, and user satisfaction. You need automated monitoring that detects when an agent drifts from its intended behaviour. Internal audits (9.2) should specifically review agent decision logs, testing adequacy, and incident response effectiveness.
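Drift detection can start as a comparison of current rates against an agreed baseline. The following Python sketch assumes per-window counts are already collected; the `WindowStats` fields, the 5-percentage-point tolerance, and the sample numbers are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    """Counts for one monitoring window (for example, one day of agent activity)."""
    total: int
    errors: int
    escalations: int
    guardrail_violations: int

    def rate(self, count: int) -> float:
        return count / self.total if self.total else 0.0


def drift_alerts(baseline: WindowStats, current: WindowStats, tolerance: float = 0.05) -> list[str]:
    """Flag any rate that rose more than `tolerance` (absolute) above baseline."""
    alerts = []
    for metric in ("errors", "escalations", "guardrail_violations"):
        base = baseline.rate(getattr(baseline, metric))
        now = current.rate(getattr(current, metric))
        if now - base > tolerance:
            alerts.append(f"{metric}: {base:.1%} -> {now:.1%}")
    return alerts


baseline = WindowStats(total=1000, errors=20, escalations=50, guardrail_violations=5)
current = WindowStats(total=800, errors=72, escalations=48, guardrail_violations=4)
alerts = drift_alerts(baseline, current)
print(alerts)  # ['errors: 2.0% -> 9.0%']
```

A fixed absolute tolerance is the simplest possible rule. Production monitoring would more likely use statistical tests or per-metric thresholds, but the auditable point is the same: the baseline, the threshold, and the alert are all recorded.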

Clause 10: Improvement

What it requires: Organisations must address nonconformities through corrective action and drive continual improvement of the AIMS.

Key sub-clauses:

- 10.1 Continual improvement: ongoing enhancement of the suitability, adequacy, and effectiveness of the AIMS

- 10.2 Nonconformity and corrective action: identifying problems, investigating root causes, taking corrective action, and evaluating effectiveness

For agentic employees: When an agent causes an incident (wrong decision, data leak, customer complaint), Clause 10 requires root cause analysis and corrective action. This creates a feedback loop where every agent failure improves the system. Over time, this builds an incident knowledge base that makes your agentic workforce more reliable.
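The feedback loop described above depends on recording each incident with its root cause and follow-up. Here is a minimal Python sketch of such an incident knowledge base; the `Incident` fields and the sample entries are hypothetical and not taken from the standard.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Incident:
    """Hypothetical record of one agent nonconformity and its follow-up."""
    agent: str
    summary: str
    root_cause: str
    corrective_action: str
    effectiveness_verified: bool = False


knowledge_base = [
    Incident("billing-agent", "refund issued twice", "missing idempotency check",
             "add duplicate-request guard", effectiveness_verified=True),
    Incident("support-agent", "shared another customer's address", "over-broad data access",
             "restrict query scope to the active customer"),
    Incident("intake-agent", "exposed internal notes", "over-broad data access",
             "restrict query scope to the active customer"),
]

# Corrective actions still awaiting an effectiveness check.
open_items = [i.summary for i in knowledge_base if not i.effectiveness_verified]

# Root causes that recur across incidents point at systemic fixes.
cause_counts = Counter(i.root_cause for i in knowledge_base)
recurring = [cause for cause, n in cause_counts.items() if n > 1]

print(open_items)
print(recurring)
```

Tracking whether effectiveness was verified matters because Clause 10 treats a corrective action as incomplete until its effect has been evaluated.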

Annex A: The 38 Controls Every Organisation Must Assess

Annex A contains 38 AI-specific controls organised across 9 areas. Organisations do not need to implement all 38. Instead, they select applicable controls based on their risk assessment and document each inclusion or exclusion, with its justification, in a Statement of Applicability.

A.2: Policies for AI (3 controls)

Covers the AI Policy itself, its alignment with other organisational policies, and its periodic review. The policy should address responsible AI topics including fairness, transparency, accountability, human oversight, privacy, safety, and societal impact.

A.3: Internal Organisation (2 controls)

Requires defined roles and responsibilities for AI activities and a mechanism for personnel to report AI concerns without retaliation.

A.4: Resources for AI Systems (5 controls)

Covers documenting the resources AI systems depend on: data, tooling, system and computing resources, and human resources with the competencies they need (AI/ML skills, responsible AI, risk management, domain expertise).

A.5: Assessing Impacts of AI Systems (4 controls)

Covers the AI system impact assessment process, documentation of all impact assessments, and assessment of effects on individuals and groups and on society, with mitigation measures.

A.6: AI System Lifecycle (9 controls)

The largest control group, covering responsible design and development, training and testing methodology (including bias, fairness, security, and robustness testing), verification and validation, deployment procedures, operational monitoring, and system retirement.

A.7: Data for AI Systems (5 controls)

Addresses data quality, data lineage and provenance, privacy and data protection, data governance processes, and data management throughout the AI lifecycle.

A.8: Information for Interested Parties (4 controls)

Requires transparency about AI system capabilities, limitations, and intended use. Covers documentation provided to users, regulators, and other stakeholders.

A.9: Use of AI Systems (3 controls)

Governs responsible use of AI within the organisation, including monitoring deployed systems, ensuring human oversight, managing user interactions, and handling misuse or unintended use.

A.10: Third-Party and Customer Relationships (3 controls)

Addresses risks from third-party AI services (like OpenAI, Anthropic, Google APIs), vendor due diligence, customer communication about AI use, and managing AI supply chain risks.
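A Statement of Applicability is, at its core, a table of controls with a decision, a justification, and a pointer to evidence. The Python sketch below is deliberately simplified: it records decisions per control area rather than per individual control, and the `SoAEntry` fields, justifications, and file names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SoAEntry:
    control_area: str   # Annex A area, for example "A.7"
    applicable: bool
    justification: str  # why the area is included or excluded
    evidence: str = ""  # pointer to implementation evidence, if any


soa = [
    SoAEntry("A.6", True, "Agents are developed and deployed in-house", "lifecycle-procedure.md"),
    SoAEntry("A.7", True, "Agents read customer records"),
    SoAEntry("A.10", True, "Agents call external model APIs", "vendor-register.xlsx"),
]


def evidence_gaps(entries: list[SoAEntry]) -> list[str]:
    """Applicable areas that still lack implementation evidence."""
    return [e.control_area for e in entries if e.applicable and not e.evidence]


print(evidence_gaps(soa))  # ['A.7']
```

A real Statement of Applicability lists each Annex A control individually, and exclusions need a justification just as inclusions do.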

How ISO 42001 Directly Impacts Organisations Building Agentic Employees

The Agent Governance Gap

Most organisations deploying AI agents in 2026 have a governance gap. They have IT security policies, data protection procedures, and perhaps a responsible AI statement. But they lack specific governance for autonomous AI systems that make independent decisions. ISO 42001 fills this gap by requiring formal structures for every aspect of agentic AI deployment.

Human Oversight Is Not Optional

Clause 5 (Leadership) makes top management accountable for how AI systems are governed, and Annex A.9 (Use of AI Systems) addresses human oversight during use. For agentic employees, this means every agent deployment needs:

- Clear escalation paths when the agent encounters situations beyond its capability

- Human review points for high-stakes decisions (financial, health, legal)

- Override mechanisms that allow humans to intervene in real time

- Audit trails that record every decision an agent makes
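The four requirements above can be combined in a single routing step that every agent action passes through. This is a minimal Python sketch under stated assumptions: the `OversightGate` class, its outcome labels, and the example agents are hypothetical, and the high-stakes categories are the three named in the list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

HIGH_STAKES = {"financial", "health", "legal"}  # categories named in the text


@dataclass
class OversightGate:
    audit_log: list = field(default_factory=list)
    paused: bool = False  # override switch a human operator can flip

    def route(self, agent: str, action: str, category: str) -> str:
        """Decide how an action proceeds and record the decision."""
        if self.paused:
            outcome = "blocked_by_override"
        elif category in HIGH_STAKES:
            outcome = "pending_human_review"
        else:
            outcome = "auto_approved"
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "category": category,
            "outcome": outcome,
        })
        return outcome


gate = OversightGate()
print(gate.route("billing-agent", "issue refund", "financial"))
print(gate.route("faq-agent", "answer opening hours", "general"))
gate.paused = True  # a human intervenes
print(gate.route("faq-agent", "answer opening hours", "general"))
```

Putting the audit write inside the routing function means no decision can bypass the log, which is the property an auditor will look for.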

Data Access Must Be Governed

Annex A.7 requires data governance throughout the AI lifecycle. When an AI agent can query customer databases, access financial records, or process health information, every data access point must be documented, justified, and monitored. This is particularly important under Australian Privacy Act requirements, where organisations must demonstrate they have taken reasonable steps to protect personal information.
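"Documented, justified, and monitored" maps naturally onto a deny-by-default access register. The Python sketch below assumes a hypothetical `ACCESS_REGISTER` keyed by agent and data source; the agent names, source names, and justifications are illustrative.

```python
from datetime import datetime, timezone

# Hypothetical register: (agent, data source) -> documented justification.
ACCESS_REGISTER = {
    ("support-agent", "crm.customers"): "look up order status for the active customer",
    ("billing-agent", "finance.invoices"): "issue and reconcile refunds",
}

access_log = []  # monitoring record of every attempt, allowed or not


def authorise(agent: str, source: str) -> bool:
    """Deny by default: only documented (agent, source) pairs are allowed."""
    justification = ACCESS_REGISTER.get((agent, source))
    allowed = justification is not None
    access_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "source": source,
        "allowed": allowed,
        "justification": justification,
    })
    return allowed


print(authorise("support-agent", "crm.customers"))   # True
print(authorise("support-agent", "health.records"))  # False
```

Logging denied attempts as well as allowed ones is a deliberate choice here, since repeated denials can be an early sign of prompt injection or a misconfigured agent.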

Third-Party API Risk Is Real

Annex A.10 addresses third-party relationships. If your agents call OpenAI, Anthropic, Google, or any external API, you have supply chain risk. ISO 42001 expects vendor due diligence, contractual clarity about data handling, and contingency plans for when third-party services change, fail, or suffer a breach. This control area alone makes ISO 42001 highly relevant for any organisation using cloud-based AI models.
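One concrete form a contingency plan can take is an ordered fallback between providers. The Python sketch below uses stub functions in place of real vendor SDK calls; `primary_model`, `backup_model`, and `call_with_fallback` are hypothetical names and do not correspond to any vendor's API.

```python
from typing import Callable


def primary_model(prompt: str) -> str:
    """Stub standing in for a third-party API call that is currently failing."""
    raise TimeoutError("provider unavailable")


def backup_model(prompt: str) -> str:
    """Stub standing in for a second provider or a self-hosted model."""
    return f"[backup] handled: {prompt}"


def call_with_fallback(
    prompt: str, providers: list[tuple[str, Callable[[str], str]]]
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, response) from the first success."""
    failures = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any provider failure triggers fallback
            failures.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(failures))


used, reply = call_with_fallback(
    "summarise ticket 123",
    [("primary", primary_model), ("backup", backup_model)],
)
print(used, reply)
```

Returning the provider name alongside the response lets the audit trail show which vendor actually processed the data, which matters when providers have different data-handling terms.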

The Lifecycle Imperative

Annex A.6 requires managing AI systems through their entire lifecycle. For agentic employees, this means:

- Design: Document what the agent should and should not do, with explicit boundaries

- Training: Record training data provenance, testing methodology, and bias assessments

- Deployment: Require formal approval before any agent goes live

- Monitoring: Track agent performance, error rates, and compliance with guardrails

- Retirement: Plan for decommissioning, including data cleanup and handover
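The stages above can be enforced as gates, where an agent only advances once the required evidence exists. The following Python sketch is one possible arrangement; the `Stage` enum mirrors the list, while the evidence names in `REQUIRED_EVIDENCE` are hypothetical labels, not terms from the standard.

```python
from enum import Enum


class Stage(Enum):
    DESIGN = 1
    TRAINING = 2
    DEPLOYMENT = 3
    MONITORING = 4
    RETIREMENT = 5


# Evidence that must exist before an agent may ENTER each stage (assumed names).
REQUIRED_EVIDENCE = {
    Stage.TRAINING: {"design_spec"},
    Stage.DEPLOYMENT: {"test_report", "bias_assessment", "deployment_approval"},
    Stage.MONITORING: {"monitoring_plan"},
    Stage.RETIREMENT: {"decommission_plan"},
}


def missing_evidence(current: Stage, target: Stage, evidence: set[str]) -> set[str]:
    """Return the evidence still needed to move from `current` to `target`."""
    if target.value != current.value + 1:
        raise ValueError("stages must be completed in order")
    return REQUIRED_EVIDENCE.get(target, set()) - evidence


missing = missing_evidence(
    Stage.TRAINING, Stage.DEPLOYMENT, {"test_report", "bias_assessment"}
)
print(missing)  # {'deployment_approval'}
```

An empty result means the gate is open. Here the agent has been tested but cannot go live because formal approval has not been recorded.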

The Practical Implementation Path for Australian Organisations

Phase 1: Foundation (Weeks 1-4)

- Conduct a gap analysis against ISO 42001 clauses 4-6

- Draft your AI Policy covering responsible AI principles, agent governance, and human oversight

- Define AIMS scope: which AI systems, agents, and processes are included

- Identify and engage stakeholders (executives, legal, IT, operations, affected employees)

Phase 2: Build (Weeks 5-12)

- Develop risk assessment methodology for AI agents

- Complete initial AI risk assessment and impact assessment

- Select applicable Annex A controls and create Statement of Applicability

- Implement selected controls with documented evidence

- Establish monitoring and measurement framework

Phase 3: Operate and Evaluate (Weeks 13-20)

- Deploy operational controls (Clause 8)

- Conduct first internal audit (Clause 9.2)

- Hold first management review (Clause 9.3)

- Address any nonconformities through corrective action (Clause 10)

Phase 4: Certify (Weeks 21-24)

- Engage an accredited ISO 42001 certification body

- Complete Stage 1 documentation review

- Complete Stage 2 on-site audit

- Achieve certification

Budget Indication for Australian SMBs (11-200 employees)

- Internal effort: 200-400 hours over 6 months

- External consulting: $15,000-$40,000 AUD depending on complexity

- Certification audit: $10,000-$25,000 AUD

- Ongoing maintenance: $5,000-$10,000 AUD per year

- Total first year: $30,000-$75,000 AUD
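The first-year total is the sum of the three cash items; internal effort is listed in hours and is not included in the dollar figure. A short Python check of that arithmetic, using only the ranges given above:

```python
# (low, high) ranges in AUD, copied from the list above.
cost_ranges_aud = {
    "external consulting": (15_000, 40_000),
    "certification audit": (10_000, 25_000),
    "ongoing maintenance (year 1)": (5_000, 10_000),
}

low = sum(lo for lo, _ in cost_ranges_aud.values())
high = sum(hi for _, hi in cost_ranges_aud.values())
print(f"${low:,} - ${high:,} AUD")  # $30,000 - $75,000 AUD
```

To estimate the full cost, price the 200-400 internal hours at your own loaded staff rate and add that on top.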

ISO 42001 vs Other AI Governance Frameworks

How does ISO 42001 relate to NIST AI RMF? ISO 42001 is a certifiable management system standard with mandatory requirements and third-party auditing. The NIST AI Risk Management Framework is a voluntary guideline with no certification pathway. Some Australian organisations implement both: NIST for risk methodology and ISO 42001 for the auditable management system.

How does ISO 42001 relate to the EU AI Act? An estimated 60-70% of EU AI Act documentation requirements map to ISO 42001 clauses. ISO 42001 certification provides supporting evidence for many Article 9 (risk management), Article 10 (data governance), Article 11 (technical documentation), and Article 14 (human oversight) requirements. It is not a complete substitute, and it does not by itself establish legal conformity, but it significantly reduces compliance effort.

How does ISO 42001 relate to the Australian Privacy Act? The Privacy Act requires organisations to take reasonable steps to protect personal information. ISO 42001 provides a structured framework for demonstrating those reasonable steps when AI systems process personal data. For organisations subject to the Notifiable Data Breaches scheme, ISO 42001's incident response requirements align with breach notification obligations.

Frequently Asked Questions About ISO 42001

What is the difference between ISO 27001 and ISO 42001?

ISO 27001 governs information security management systems. ISO 42001 governs AI management systems. They share the same Annex SL structure and many organisations implement both together. ISO 27001 protects your data. ISO 42001 governs how your AI systems use that data and make decisions.

Is ISO 42001 certification mandatory in Australia?

No. ISO 42001 is voluntary in Australia. However, it is increasingly expected by enterprise buyers, government agencies, and regulated industries. The Australian government's interim AI framework references ISO 42001 as a best-practice standard. For organisations handling sensitive data or deploying autonomous agents, certification provides a defensible governance position.

How long does ISO 42001 certification take?

Most organisations achieve certification in 4-6 months. Small organisations with straightforward AI deployments can do it faster. Large enterprises with complex agent ecosystems may take 9-12 months. The timeline depends on existing governance maturity, AI system complexity, and resource commitment.

What does an ISO 42001 audit involve?

The audit has two stages. Stage 1 is a documentation review where the auditor assesses your AIMS documentation, policies, risk assessments, and Statement of Applicability. Stage 2 is an on-site (or remote) audit where the auditor interviews personnel, examines evidence, reviews agent decision logs, and verifies that controls are operating effectively. Certification is valid for three years with annual surveillance audits.

Can small businesses implement ISO 42001?

Yes. The standard is designed to be scalable. A small business with one or two AI agents can implement ISO 42001 with a much smaller scope than a large enterprise. The key is defining your AIMS scope appropriately and selecting only the Annex A controls that are relevant to your AI activities. The cost and effort scale with complexity, not with organisation size.