Last Updated: May 2026

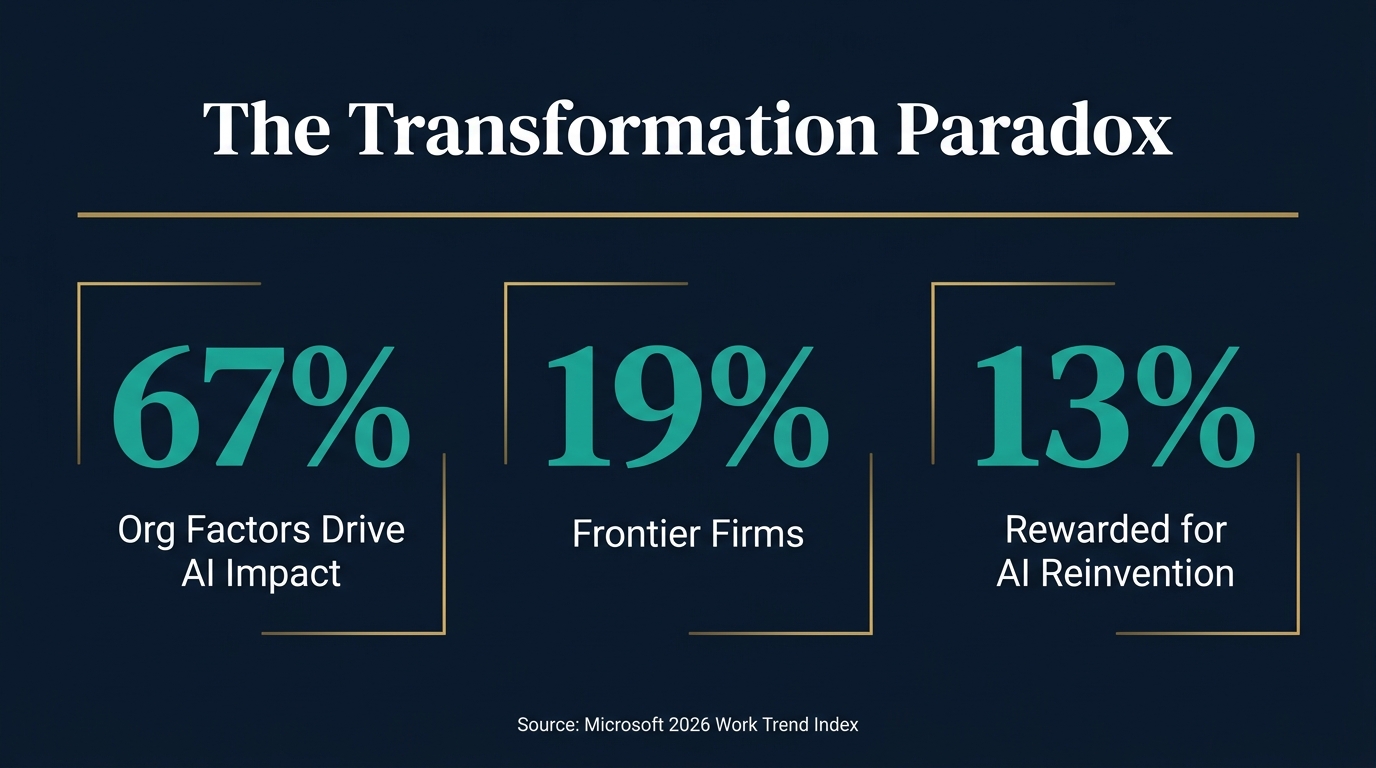

Microsoft's 2026 Work Trend Index surveyed 20,000 workers across 10 countries and found a painful truth: your people are ready for AI, but your organisation is not. Microsoft calls this the Transformation Paradox, and it explains why most enterprise AI deployments are failing to show ROI even as individual workers report transformation. Here is what the data actually says, what it means for Australian businesses, and what you should do about it.

What Is the Transformation Paradox?

The Transformation Paradox is a term coined by Microsoft in its 2026 Work Trend Index, based on analysis of trillions of anonymised Microsoft 365 productivity signals and a survey of 20,000 AI users across 10 countries. The paradox is simple: the same forces accelerating individual AI adoption are holding back its institutional payoff.

58% of AI users say they are producing work they could not have done a year ago. Among the most advanced users, that rises to 80%. Yet only 1 in 4 say their leadership is aligned on what AI is for, and only 13% say they are rewarded for redesigning their work with AI, even when results do not immediately follow.

The data reveals a system-level breakdown. Employees are moving faster than the organisations around them. The constraint is not skill or willingness. It is culture, management practices, metrics, and incentives that continue to reinforce the old way of working.


The Five Types of AI Users in Your Organisation

Microsoft mapped survey respondents across two dimensions: individual AI capability (how confidently they use and direct AI) and organisational readiness (culture, management support, clear guidelines). The results reveal five distinct groups:

Frontier (19%) — The sweet spot. Both individual capability and organisational readiness are high. These people and their companies are aligned and reinforcing each other. They represent the future state every organisation should target.

Blocked Agency (10%) — Individuals have built strong AI skills but lack the systems to apply them. They are frustrated, capable, and likely to leave for organisations that will let them use what they know.

Unclaimed Capacity (5%) — The organisation is ready (culture, tools, support) but employees have not caught up. These are missed opportunities sitting inside your company.

Emergent / Messy Middle (50%) — The largest group. Both individual practice and organisational conditions are still forming. This is where the most upside lives if you get the system right.

Stalled (16%) — Low capability, limited support. These teams are not participating in the AI transformation at all.

The critical insight: 81% of AI users are not operating at full AI potential. Only the 5% in Unclaimed Capacity are held back mainly by their own skills; for everyone else, the system around them is at least part of what is stopping them.
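The 81% figure is simply everyone outside the Frontier segment. A minimal Python sketch, using only the segment shares listed above, makes that arithmetic explicit and checks that the five segments cover the whole surveyed population.

```python
from typing import Dict

# Segment shares as reported in the 2026 Work Trend Index summary above (% of AI users).
SEGMENTS: Dict[str, int] = {
    "Frontier": 19,
    "Blocked Agency": 10,
    "Unclaimed Capacity": 5,
    "Emergent / Messy Middle": 50,
    "Stalled": 16,
}

def non_frontier_share(segments: Dict[str, int]) -> int:
    """Return the percentage of AI users outside the Frontier segment."""
    total = sum(segments.values())
    # The five segments should partition the surveyed population.
    if total != 100:
        raise ValueError(f"segments sum to {total}, expected 100")
    return total - segments["Frontier"]

if __name__ == "__main__":
    print(non_frontier_share(SEGMENTS))  # prints 81
```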

Organisational Factors Matter 2x More Than Individual Effort

This is the most important finding in the entire report, and most leaders are missing it.

Microsoft tested a broad set of organisational, individual, and demographic factors against self-reported AI impact. The result: organisational factors account for 67% of reported AI impact, compared to 32% for individual factors. Culture, manager support, and talent practices matter twice as much as individual mindset and behaviour.
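The "twice as much" framing is plain arithmetic on the two reported shares, sketched below in Python. The roughly one point left over is not broken out in the figures quoted here; it may correspond to the demographic factors Microsoft also tested, but that is an assumption.

```python
# Shares of self-reported AI impact, as quoted from the Work Trend Index summary above.
ORGANISATIONAL_SHARE = 67  # culture, manager support, talent practices (%)
INDIVIDUAL_SHARE = 32      # individual mindset and behaviour (%)

ratio = ORGANISATIONAL_SHARE / INDIVIDUAL_SHARE
remainder = 100 - ORGANISATIONAL_SHARE - INDIVIDUAL_SHARE  # not attributed to either group

print(f"organisational vs individual: {ratio:.2f}x")  # prints "organisational vs individual: 2.09x"
print(f"not attributed to either: {remainder} point(s)")
```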

What this means in practice:

- A culture that treats AI as a strategic advantage and encourages experimentation drives more impact than hiring "AI-native" employees into a rigid environment

- Managers who model AI use produce a 17-point lift in reported AI value, a 22-point lift in critical thinking about AI use, and a 30-point lift in trust in agentic AI

- Psychological safety around experimentation makes employees 1.4x more likely to be high-frequency users of agentic AI

- Talent practices that build skills and create space to apply them outperform hiring strategies that assume talent alone delivers results

For Australian businesses investing in AI training and tools, this finding should reframe your entire approach. The question is not "do our people have the right skills?" It is "is our organisation built to unlock them?"

What Frontier Firms Do Differently

Microsoft identifies "Frontier Firms" as organisations where AI absorption, not just adoption, is happening, and they are pulling ahead fast. Here is what Microsoft's data shows Frontier professionals do differently:

- 85% say their manager openly uses AI (vs 64% for non-Frontier)

- 83% say their manager sets quality standards for AI work (vs 57%)

- 84% say their manager creates space for experimentation (vs 61%)

- 87% say their manager encourages ambitious work redesign (vs 61%)

- More than twice as likely to be rewarded for reinventing their work with AI, regardless of outcome (26% vs 11%)

- 63% brainstorm and refine business processes together to identify AI opportunities (vs 32%)

- 61% share AI tips, new agents, learnings, and mistakes openly (vs 36%)

- 54% discuss quality standards for AI-assisted work (vs 29%)

The pattern is clear: Frontier Firms treat AI as a team sport, not an individual hack. They create shared standards, reward experimentation, and build systems that capture and compound learnings.
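The list mixes percentages with one explicit ratio, which makes the items hard to compare. A short Python sketch, using only the figures quoted above (the short labels are our own paraphrases), puts them on the same footing. On these numbers the manager behaviours sit at roughly 1.3 to 1.5x, the shared team practices at roughly 1.7 to 2x, and the reward gap (26% vs 11%) is the largest at about 2.4x.

```python
from typing import Dict, Tuple

# (Frontier %, non-Frontier %) pairs as listed above; labels are shortened paraphrases.
COMPARISONS: Dict[str, Tuple[int, int]] = {
    "manager openly uses AI": (85, 64),
    "manager sets quality standards": (83, 57),
    "manager creates space for experimentation": (84, 61),
    "manager encourages ambitious redesign": (87, 61),
    "rewarded for reinvention regardless of outcome": (26, 11),
    "co-design processes to find AI opportunities": (63, 32),
    "share tips, agents, learnings and mistakes": (61, 36),
    "discuss quality standards for AI work": (54, 29),
}

def gap_and_ratio(frontier: int, other: int) -> Tuple[int, float]:
    """Return the percentage-point gap and the relative ratio (frontier / other)."""
    return frontier - other, frontier / other

if __name__ == "__main__":
    # Print items from the largest relative gap to the smallest.
    for label, (f, o) in sorted(COMPARISONS.items(), key=lambda kv: -gap_and_ratio(*kv[1])[1]):
        gap, ratio = gap_and_ratio(f, o)
        print(f"{label:<48} +{gap:>2} pts  {ratio:.2f}x")
```

Ratios and point gaps tell different stories: the manager items have large point gaps but smaller ratios because both groups start from a high base.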

The "Software Engineer Is Dead" Moment

Boris Cherny, the creator of Claude Code at Anthropic, has said publicly that by the end of 2026, the job title "software engineer" will start disappearing, replaced by "builder." His argument is that writing code is the smaller part of what building software now means. The new bottleneck is not "can we build it?" but "what should we build?"

According to Cherny, code at Anthropic itself is no longer written by hand: AI tools talk to each other and handle the implementation, while humans direct the work.

The job title may still be a forecast, but the way of working behind it is already a description of what is happening inside frontier companies. The question for your organisation is: are you building for the world where "builder" is the job title, or the world where "software engineer" still means writing code line by line?

The Work Trend Index data supports this shift. 49% of all Microsoft 365 Copilot conversations support cognitive work: analysing information, solving problems, evaluating, and thinking creatively. Only 17% are about producing work. AI is not just helping people do things faster. It is expanding who can do high-value work.

The April 2026 Layoffs: AI Efficiency or Convenient Attribution?

In April 2026, 21,490 job cuts were attributed to AI, approximately 26% of the monthly total. Year to date, over 92,000 tech jobs have vanished, with Meta cutting 10% of staff and Microsoft offering voluntary retirement to 7% of workers.
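Working backwards from those two April figures gives a sense of scale. This Python sketch is only arithmetic on the numbers quoted above; because the share is given as "approximately 26%", it also computes a range under our assumption that the true share lies between 25.5% and 26.5%. On that basis the implied April total is roughly 81,000 to 84,000 cuts, centred near 82,700.

```python
AI_ATTRIBUTED_CUTS = 21_490  # April 2026 job cuts attributed to AI
REPORTED_SHARE = 0.26        # "approximately 26%" of the monthly total

def implied_total(attributed: int, share: float) -> int:
    """Back out an approximate monthly total from a count and the share it represents."""
    if not 0 < share <= 1:
        raise ValueError("share must be in (0, 1]")
    return round(attributed / share)

if __name__ == "__main__":
    # Assumption: "approximately 26%" means somewhere between 25.5% and 26.5%.
    low = implied_total(AI_ATTRIBUTED_CUTS, 0.265)   # a higher share implies a smaller total
    central = implied_total(AI_ATTRIBUTED_CUTS, REPORTED_SHARE)
    high = implied_total(AI_ATTRIBUTED_CUTS, 0.255)
    print(f"implied April total: ~{central:,} (range {low:,} to {high:,})")
```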

The honest answer is that we do not yet know how many of these cuts are genuine AI displacement versus convenient attribution during a macro environment that was always going to produce layoffs. Stock markets reward AI-driven cost stories. A CEO citing "AI efficiency" gets credit for vision. A CEO citing "we over-hired in 2022" gets credit for nothing.

What the data does tell us:

- Nearly 50% of Q1 2026 tech layoffs were attributed to AI, up significantly from 2025

- The companies cutting the most staff (Meta, Microsoft, Amazon) are also spending the most on AI infrastructure

- The Work Trend Index shows AI expanding what individuals can do, not just replacing them

- The Transformation Paradox suggests most organisations lack the systems to turn individual AI capability into organisational value

The real risk is not AI replacing jobs. It is organisations using AI as a cost-cutting narrative while failing to redesign work itself. That produces layoffs without the productivity gains that justify them.

What Australian Businesses Should Actually Do

1. Fix the System, Not the People

Your people are likely more ready than your organisation. Before investing in more AI training, audit your management practices, incentive structures, and cultural norms. Are managers modelling AI use? Are people rewarded for experimenting? Is failure treated as learning or punished as waste?

2. Build Evaluation Infrastructure

As agents take on more execution, the premium on human evaluation rises. Every organisation deploying AI agents needs to answer three questions: Who reviews agent performance? Who has authority to update agent workflows? How does a local win get captured and scaled across the organisation?
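One lightweight way to make those three questions hard to skip is to record the answers for each agent and flag any that are missing. The Python sketch below is purely illustrative: the `AgentGovernance` class, its field names, and the example agent are hypothetical, not part of any Microsoft tooling or governance standard.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class AgentGovernance:
    """Hypothetical record answering the three evaluation questions for one AI agent."""
    agent_name: str
    performance_reviewer: Optional[str] = None  # Who reviews agent performance?
    workflow_owner: Optional[str] = None        # Who has authority to update the agent's workflow?
    scaling_path: Optional[str] = None          # How is a local win captured and scaled?

    def open_questions(self) -> List[str]:
        """Return the evaluation questions that still have no named owner or process."""
        answers = {
            "Who reviews agent performance?": self.performance_reviewer,
            "Who has authority to update agent workflows?": self.workflow_owner,
            "How does a local win get captured and scaled?": self.scaling_path,
        }
        return [question for question, answer in answers.items() if not answer]

if __name__ == "__main__":
    # Hypothetical agent with only one of the three questions answered.
    invoice_agent = AgentGovernance("invoice-triage", performance_reviewer="Finance operations lead")
    for question in invoice_agent.open_questions():
        print("Unanswered:", question)
```

Treating an empty answer as a gap is a deliberate choice: the point is to surface agents that are running with no one accountable for reviewing, changing, or scaling them.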


3. Create Owned Intelligence

The Work Trend Index introduces the concept of "Owned Intelligence": institutional know-how that compounds over time, is unique to your firm, and is hard to replicate. This is your competitive advantage. Frontier Firms capture agent signals (what worked, what failed, where outcomes drifted) and encode them into shared routines.
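As an illustration of what capturing agent signals could look like, here is a minimal Python sketch that logs what worked, what failed, and where outcomes drifted, then flags workflows whose wins are worth turning into shared routines and whose problems keep recurring. The `AgentSignal` schema, the `triage` function, the workflow names, and the threshold of two are all hypothetical choices for this example, not something the Work Trend Index prescribes.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Literal

# Hypothetical outcome labels mirroring "what worked, what failed, where outcomes drifted".
Outcome = Literal["worked", "failed", "drifted"]

@dataclass(frozen=True)
class AgentSignal:
    """One observation about an agent run (illustrative schema)."""
    workflow: str
    outcome: Outcome
    note: str

def triage(signals: List[AgentSignal], threshold: int = 2) -> Dict[str, List[str]]:
    """Flag workflows with recurring wins ("share") and recurring problems ("review").

    A workflow lands in "share" with at least `threshold` successes, and in "review"
    with at least `threshold` failures or drifts.
    """
    wins = Counter(s.workflow for s in signals if s.outcome == "worked")
    problems = Counter(s.workflow for s in signals if s.outcome in ("failed", "drifted"))
    return {
        "share": sorted(w for w, n in wins.items() if n >= threshold),
        "review": sorted(w for w, n in problems.items() if n >= threshold),
    }

if __name__ == "__main__":
    log = [
        AgentSignal("quote-drafting", "worked", "accepted with light edits"),
        AgentSignal("quote-drafting", "worked", "reused by a second team"),
        AgentSignal("contract-summary", "drifted", "missed renewal clauses"),
        AgentSignal("contract-summary", "failed", "cited the wrong jurisdiction"),
    ]
    print(triage(log))  # {'share': ['quote-drafting'], 'review': ['contract-summary']}
```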

4. Align Leadership

Only 1 in 4 employees say their leadership is aligned on AI. This is not a communication problem. It is a strategy problem. Leadership alignment means a clear, consistent answer to: What is AI for in our organisation? What does success look like? What are we willing to change to get there?

5. Redesign Metrics and Incentives

If you measure people on the old way of working, they will work the old way. Only 13% of employees are rewarded for reinventing work with AI. The metric that matters is not "how much AI did you use?" It is "what outcomes did you drive that were not possible before?"

6. Target the Messy Middle

The largest group (50%) sits in the emergent zone where both individual practice and organisational conditions are still forming. This is where the most upside lives. A small investment in manager enablement, shared standards, and experimentation space can shift a large portion of this group toward Frontier status.
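To put "a large portion" in perspective, consider a hypothetical what-if: if some fraction of the Messy Middle moved into Frontier conditions while every other segment stayed put, the Frontier share would rise by half that fraction in percentage points. The Python sketch below uses only the 19% and 50% figures quoted earlier; the fractions are illustrative, not forecasts. Moving one in five of the Messy Middle, for example, would take Frontier from 19% to 29%.

```python
FRONTIER_SHARE = 19  # % of AI users in Frontier conditions today
MESSY_MIDDLE = 50    # % in the Emergent / Messy Middle segment

def frontier_after_shift(fraction_moved: float) -> float:
    """Frontier share (%) if a given fraction of the Messy Middle reached Frontier conditions.

    Hypothetical what-if: assumes no other segment changes.
    """
    if not 0.0 <= fraction_moved <= 1.0:
        raise ValueError("fraction_moved must be between 0 and 1")
    return FRONTIER_SHARE + MESSY_MIDDLE * fraction_moved

if __name__ == "__main__":
    for fraction in (0.1, 0.2, 0.4):
        print(f"{fraction:.0%} of the Messy Middle moves -> Frontier at {frontier_after_shift(fraction):.0f}%")
```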

The Manager Multiplier Effect

Microsoft's separate study of 1,800 workers found that managers are the single biggest lever for AI impact in an organisation. When managers actively model AI use:

- +17 points in reported AI value

- +22 points in critical thinking about AI use

- +30 points in trust in agentic AI

- Employees 1.4x more likely to be high-frequency agentic AI users

This means your AI strategy is only as strong as your middle management's willingness to use AI themselves. Training programs that target individual contributors without enabling their managers will underperform.

The Frontier Professional Profile

Who are the 16% of AI users classified as Frontier Professionals? The data paints a clear picture:

- They use agents for multi-step workflows and build multi-agent systems

- They routinely rethink workflows and identify where agents can augment or automate

- 43% intentionally do some work without AI to keep their skills sharp (vs 30% non-Frontier)

- 53% intentionally pause before starting work to decide what should be done by AI versus human (vs 33%)

- 86% treat AI output as a starting point, not a final answer

- They rank highest in critical thinking and quality control measures

The key insight: Frontier Professionals are not the ones who use AI the most. They are the ones who use AI the most intentionally. They refuse to outsource their thinking. They know that long-term success means continuing to build human skills, not letting them atrophy.

Frequently Asked Questions About the Transformation Paradox

What is the Transformation Paradox?

The Transformation Paradox describes the gap between individual AI capability and organisational readiness. Employees are using AI to transform their work, but organisational systems (metrics, incentives, culture, management practices) continue to reinforce old ways of working. The same forces accelerating AI adoption at the individual level are holding back its institutional payoff.

How many companies are getting AI right?

According to Microsoft's 2026 Work Trend Index, only 19% of AI users work in what Microsoft calls "Frontier" conditions, where both individual capability and organisational readiness are high. The remaining 81% face some form of misalignment between what employees can do with AI and what their organisation supports.

Do organisational factors or individual skills matter more for AI impact?

Organisational factors account for 67% of reported AI impact, compared to 32% for individual factors. Culture, manager support, and talent practices matter twice as much as individual mindset and behaviour. This suggests that investing in the right organisational environment is likely to deliver more return than hiring "AI-native" talent into an unchanged one.

What should managers do differently with AI?

Managers should model AI use themselves, set quality standards for AI-assisted work, create psychological safety for experimentation, and encourage ambitious work redesign. Microsoft's research shows that when managers actively use AI, employees report a 30-point lift in trust in agentic AI and are 1.4x more likely to be high-frequency AI users.

Is AI actually replacing jobs in 2026?

The picture is mixed. In April 2026, 21,490 job cuts were attributed to AI (26% of monthly layoffs). Nearly 50% of Q1 2026 tech layoffs cited AI as a factor. However, the Work Trend Index also shows AI is expanding individual capability and enabling work that was not possible before. The real pattern appears to be AI-driven restructuring rather than pure replacement, with organisations that fail to redesign work suffering more displacement than those that do.